Artificial Intelligence (AI)

- Pradeep Aswal

- February 24, 2025

Summary

- AI refers to the simulation of human intelligence in machines that are programmed to think and learn like humans.

- It encompasses various technologies such as machine learning, natural language processing, computer vision, and robotics.

- Machine learning (ML) is a subset of AI that focuses on algorithms and statistical models to enable machines to learn from data and make predictions or decisions.

- Deep learning (DL) is a specialized form of ML that uses artificial neural networks with multiple layers to learn complex patterns and representations.

- Natural language processing (NLP) enables machines to understand and interact with human language, including speech recognition, language generation, and sentiment analysis.

- Techniques like named entity recognition, part-of-speech tagging, and sentiment analysis are used in NLP applications.

- Computer vision (CV) involves training machines to understand and interpret visual data, such as images and videos.

- Techniques like object detection, image segmentation, and facial recognition are used in CV applications.

- AI plays a crucial role in robotics and automation, enabling machines to perform tasks autonomously and adapt to changing environments.

- Robotic process automation (RPA) automates repetitive tasks, while autonomous robots can perform complex actions with minimal human intervention.

- AI raises ethical concerns regarding privacy, bias, transparency, and accountability.

- It is important to address these issues and ensure AI systems are developed and deployed responsibly and ethically.

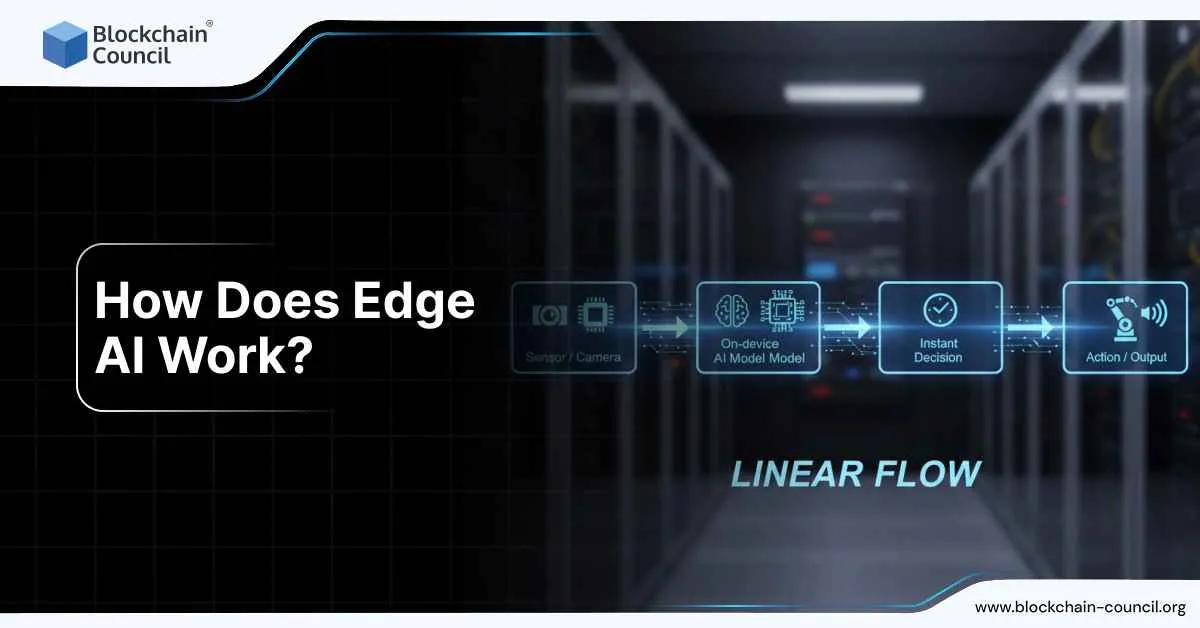

- AI is rapidly evolving, with emerging trends like explainable AI, federated learning, and edge computing gaining traction.

- AI finds applications in various domains, including healthcare, finance, transportation, cybersecurity, and personalized marketing.

- AI is expected to have a profound impact on society, transforming industries, creating new job roles, and revolutionizing how we live and work.

- Continued research, collaboration, and responsible development will shape the future of AI and its potential benefits.

Artificial Intelligence (AI) has moved from science-fiction novels into everyday applications, capturing our imaginations and transforming industries along the way. That is why a guide to artificial intelligence is essential.

The evolution of AI from its early beginnings to the complex systems we see today is striking. Its impact is far-reaching: it is transforming industries, amplifying human potential, and opening up new opportunities.

In this guide, we will look at what Artificial Intelligence is, how it has evolved, and how it affects modern society.

What is Artificial Intelligence?

At its core, Artificial Intelligence refers to the simulation of human intelligence in machines. It encompasses the development of intelligent systems capable of perceiving, reasoning, learning, and problem-solving, mimicking human cognitive abilities. By employing complex algorithms and advanced computing power, AI unlocks a myriad of possibilities, revolutionizing the way we live, work, and interact.

The History and Evolution of Artificial Intelligence

The early years (1950s-1970s)

- The term “artificial intelligence” was coined in 1956.

- Early AI research focused on creating programs that could solve specific problems, such as playing chess or proving mathematical theorems.

- These early programs were often brittle and could not handle unexpected inputs.

The AI winter (1970s-1980s)

- The field of AI experienced a period of decline in the 1970s, due to a number of factors, including the lack of real-world applications for AI and the difficulty of scaling up AI programs.

The resurgence of AI (1980s-present)

- The field of AI experienced a resurgence in the 1980s, due to the development of new algorithms and the availability of more powerful computers.

- AI has since made significant progress in a number of areas, including natural language processing, machine learning, and computer vision.

The following are some of the key milestones in the history of AI:

- 1950: Alan Turing publishes “Computing Machinery and Intelligence,” which proposes the Turing test as a way to measure machine intelligence.

- 1952: Arthur Samuel develops the first checkers-playing program that can learn to improve its own play.

- 1956: The Dartmouth Conference is held, which is considered to be the birthplace of AI research.

- 1969: Shakey, the first general-purpose mobile robot, is built.

- 1997: Deep Blue, a chess-playing computer developed by IBM, defeats world champion Garry Kasparov.

- 2002: The Roomba, the first commercially successful robotic vacuum cleaner, is introduced.

- 2011: Watson, a question-answering computer developed by IBM, defeats two human champions on the game show Jeopardy!.

- 2016: AlphaGo, a Go-playing computer developed by Google DeepMind, defeats world champion Lee Sedol.

- 2022: OpenAI releases ChatGPT; Google’s Bard follows in 2023.

- 2023: OpenAI releases GPT-4, a further milestone in the evolution of AI.

Deeper Insight into Artificial Intelligence

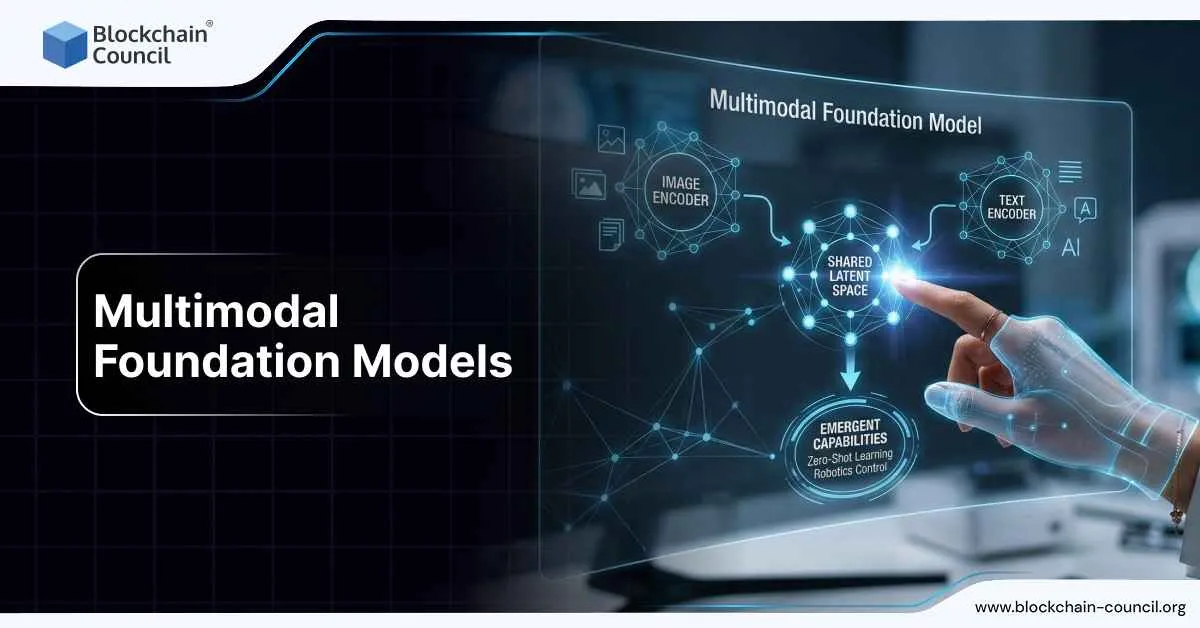

- Machine Learning and Deep Learning: Machine Learning (ML), a prominent subset of AI, empowers machines to learn from data without explicit programming. ML algorithms analyze vast datasets to uncover patterns, make predictions, and generate insights. For instance, recommendation systems on platforms like Netflix employ ML to analyze user behavior and suggest personalized content.

- Deep Learning (DL) takes ML to a more advanced level by utilizing neural networks with multiple layers. DL algorithms excel in processing complex data and extracting intricate features. An excellent example is autonomous vehicles that employ DL to recognize and interpret road signs, pedestrians, and other crucial elements for safe navigation.

- Natural Language Processing (NLP): Natural Language Processing enables machines to understand, interpret, and generate human language. NLP algorithms process and comprehend textual data, enabling applications like speech recognition, language translation, sentiment analysis, and chatbots. For instance, voice assistants like Siri and Google Assistant utilize NLP to understand and respond to user queries.

- Computer Vision: Computer Vision focuses on teaching machines to interpret and comprehend visual information from images or videos. AI systems employ techniques such as image processing, pattern recognition, and deep learning to analyze visual data. Notable applications include facial recognition systems used for identity verification and object detection algorithms employed in autonomous surveillance systems.

- Robotics and Automation: AI-driven Robotics and Automation aim to create intelligent machines capable of performing physical tasks with human-like precision and adaptability. These machines employ AI algorithms to perceive their environment, process sensory information, and execute precise movements. In manufacturing industries, robots equipped with AI can automate repetitive assembly line tasks, enhancing efficiency and productivity.

Types of Artificial Intelligence

Narrow AI: Focused Intelligence for Specific Tasks

Narrow AI, also known as Weak AI, represents the current state of AI technology. It specializes in performing specific tasks with remarkable proficiency, often surpassing human abilities. From voice assistants like Siri and Alexa to recommendation algorithms and autonomous vehicles, Narrow AI is revolutionizing industries across the board.

- Task-Specific Expertise: Narrow AI excels in solving well-defined problems within a limited domain. It leverages machine learning algorithms, such as convolutional neural networks (CNNs) for image recognition, natural language processing (NLP) for text analysis, and reinforcement learning for gaming.

- Real-World Applications: Narrow AI finds practical applications in areas like healthcare, finance, manufacturing, and customer service. Medical diagnosis, fraud detection, quality control, and personalized recommendations are just a few examples where Narrow AI enhances efficiency and accuracy.

General AI: The Quest for Human-Level Intelligence

General AI, often referred to as Strong AI, aims to replicate human-like intelligence and comprehension. Unlike Narrow AI, a General AI would be able to understand, learn, and apply knowledge across diverse domains. Achieving General AI remains an ongoing endeavor, but its implications are profound.

- Adaptive Learning: General AI would exhibit adaptive learning capabilities, enabling it to accumulate knowledge from various sources, reason logically, and apply that knowledge in novel situations. It would not be limited to predefined tasks and would have a more comprehensive understanding of the world.

- Ethical Considerations: As General AI becomes more sophisticated, ethical considerations gain significance. Questions regarding AI’s decision-making processes, accountability, and potential consequences must be addressed to ensure responsible development and deployment.

Superintelligence: The Future of AI

Superintelligence represents the apex of AI development—an intellect that surpasses human capabilities in every aspect. It embodies a hypothetical scenario where AI reaches an unprecedented level of cognitive ability, surpassing human understanding and decision-making capacity.

- Unfathomable Potential: A superintelligence would be able to outperform humans in virtually every cognitive task. It could rapidly process vast amounts of data, predict outcomes, and provide insights far beyond our comprehension.

- Implications and Challenges: While Superintelligence holds immense promise, its development comes with inherent risks. Ensuring its alignment with human values, preventing malicious use, and maintaining control are essential challenges that demand careful consideration and ethical guidelines.

Key Concepts and Techniques in Artificial Intelligence

Supervised Learning

Training Machines with Labeled Data

Supervised learning forms the backbone of many AI applications. In this technique, machines are provided with labeled data, where each data point is associated with a corresponding label or output. The goal is for the machine to learn the underlying patterns and relationships between the input data and the desired output. By utilizing algorithms such as decision trees, support vector machines, or neural networks, supervised learning models can make predictions or classify new, unseen data accurately.

The beauty of supervised learning lies in its ability to tackle a wide range of problems. Whether it’s spam detection in emails, sentiment analysis of customer reviews, or even predicting stock prices, supervised learning algorithms excel at extracting insights from labeled data. With careful feature engineering, where relevant characteristics of the data are selected and transformed, these models can achieve remarkable accuracy and generalize well to unseen examples.
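To make the idea of learning from labeled data concrete, here is a minimal sketch of a k-nearest-neighbors classifier. It is a deliberately simple stand-in for the algorithms named above (decision trees, support vector machines, neural networks). The feature values, the `TRAINING_DATA` name, and the `predict` helper are invented for illustration.

```python
from collections import Counter
import math

# Labeled training data: (features, label). The numbers are made up for illustration:
# features are (count of suspicious words, count of links) in an email.
TRAINING_DATA = [
    ((8.0, 5.0), "spam"),
    ((7.0, 6.0), "spam"),
    ((9.0, 4.0), "spam"),
    ((1.0, 0.0), "ham"),
    ((0.0, 1.0), "ham"),
    ((2.0, 1.0), "ham"),
]


def predict(features, training_data=TRAINING_DATA, k=3):
    """Classify `features` by majority vote among its k nearest labeled examples."""
    by_distance = sorted(training_data, key=lambda example: math.dist(example[0], features))
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


print(predict((6.0, 5.0)))  # a point close to the "spam" examples
print(predict((1.0, 1.0)))  # a point close to the "ham" examples
```

The labels do all the work here: the model never receives a rule for what spam is, only examples of it.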

Unsupervised Learning

Discovering Patterns in Unlabeled Data

While supervised learning relies on labeled data, unsupervised learning takes a different approach. In this technique, machines are given unlabeled data, without any predefined outcomes or targets. The objective is to uncover the inherent structure and patterns within the data, allowing for valuable insights and knowledge discovery.

Clustering is a prominent application of unsupervised learning, where similar data points are grouped together based on their shared characteristics. This technique has been instrumental in customer segmentation, anomaly detection, and recommendation systems. Another powerful tool in unsupervised learning is dimensionality reduction, which simplifies complex data by capturing its essential features while minimizing information loss.
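As an illustration of clustering, the following is a minimal sketch of the K-means algorithm in plain Python. The customer data is hypothetical, and seeding the centroids with the first `k` points is a simplifying assumption; practical implementations usually pick starting centroids more carefully.

```python
import math


def k_means(points, k, iterations=10):
    """Group `points` into k clusters; returns (centroids, assignments)."""
    # Assumption for this sketch: seed the centroids with the first k points.
    centroids = [points[i] for i in range(k)]
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        # Update step: move each centroid to the mean of its assigned points.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return centroids, assignments


# Hypothetical customer data: (visits per month, average basket size).
customers = [(1, 2), (9, 8), (2, 1), (8, 9), (1, 1), (9, 9)]
centroids, assignments = k_means(customers, k=2)
print(assignments)
```

No labels are supplied anywhere; the two groups emerge only from how close the points are to each other.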

Reinforcement Learning

Teaching Machines Through Trial and Error

Reinforcement learning takes inspiration from how humans learn through trial and error. Here, an agent interacts with an environment and learns to make optimal decisions by receiving feedback in the form of rewards or penalties. The goal is to maximize cumulative rewards over time, leading to intelligent behavior and decision-making.

Consider an autonomous driving system learning to navigate through a city. Through reinforcement learning, the system learns from its actions, receiving positive feedback for safe and efficient driving and negative feedback for traffic violations or accidents. Over time, the agent refines its policies, enabling it to make informed decisions and adapt to changing environments.

Neural Networks

Building Blocks of Artificial Intelligence

Neural networks are foundational building blocks of modern artificial intelligence. They are loosely modeled on the behavior of biological neurons in the human brain, enabling machines to learn and make decisions. A neural network typically comprises three kinds of layers: an input layer, one or more hidden layers, and an output layer.

The input layer receives raw data, which is then processed through the hidden layers using weighted connections and activation functions. Each neuron in the hidden layers computes a weighted sum of the inputs it receives and applies an activation function to the result. During training, the weights are adjusted through a process called backpropagation, which propagates the prediction error backward through the network. Finally, the output layer produces the desired results or predictions.

The strength of neural networks lies in their ability to learn complex, non-linear relationships between inputs and outputs. With advancements in network architectures, such as convolutional neural networks (CNNs) for image processing and recurrent neural networks (RNNs) for sequential data, these models have achieved remarkable performance across various domains.
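The flow from input layer to hidden layer to output layer can be sketched in a few lines. The weights below are hand-picked rather than learned, chosen so that this tiny network computes XOR, a classic non-linear relationship. All names are illustrative.

```python
def relu(x):
    """Activation function: pass positive values through, clamp negatives to zero."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation):
    """One fully connected layer: weighted sum of the inputs plus a bias, per neuron."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Hand-picked (not learned) weights that make the network compute XOR.
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]


def forward(inputs):
    """Input layer -> hidden layer (ReLU) -> output layer (no activation)."""
    hidden = dense(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    output = dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, lambda x: x)
    return output[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", forward([a, b]))
```

Without the hidden layer and its activation function, no choice of weights could reproduce XOR. That is the practical meaning of "non-linear relationships" above.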

Data Preprocessing and Feature Engineering for AI Models

Data preprocessing and feature engineering play a vital role in building effective AI models. Raw data is often messy, inconsistent, or incomplete, making it necessary to clean and transform it before feeding it to the learning algorithms.

Data preprocessing involves tasks such as removing duplicates, handling missing values, and normalizing data to ensure consistency and improve model performance. Feature engineering focuses on selecting or creating relevant features that best represent the underlying patterns in the data.
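The three preprocessing tasks just mentioned can be sketched as follows. This is a minimal illustration on made-up records. Filling missing values with the column mean and using min-max scaling are only one reasonable set of choices among many.

```python
def preprocess(rows):
    """Remove duplicate rows, fill missing values with the column mean, then min-max scale."""
    # 1. Remove exact duplicates while keeping the original order.
    unique_rows = list(dict.fromkeys(rows))

    columns = []
    for column in zip(*unique_rows):
        # 2. Handle missing values (None) by imputing the mean of the known values.
        known = [v for v in column if v is not None]
        mean = sum(known) / len(known)
        filled = [mean if v is None else v for v in column]

        # 3. Normalize to the range [0, 1] so features share a common scale.
        low, high = min(filled), max(filled)
        span = (high - low) or 1.0  # avoid dividing by zero for constant columns
        columns.append([(v - low) / span for v in filled])

    return [tuple(row) for row in zip(*columns)]


# Hypothetical records: (age, income). One duplicate row and one missing income.
raw = [(20, 30000), (40, None), (20, 30000), (60, 50000)]
print(preprocess(raw))
```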

Feature engineering techniques may involve extracting statistical measures, transforming variables, or creating new features through domain knowledge. This process greatly influences the performance of AI models, as it helps the algorithms uncover the most informative aspects of the data and improve their predictive capabilities.

By combining data preprocessing and feature engineering with powerful machine learning techniques, such as deep learning and neural networks, we can unlock the full potential of artificial intelligence and achieve groundbreaking results.

Model Evaluation and Selection

Once we have trained our machine learning models, it becomes vital to evaluate their performance and select the most suitable one for our specific task. Model evaluation allows us to assess how well our models generalize to unseen data and make reliable predictions. Various evaluation metrics such as accuracy, precision, recall, and F1 score help us quantify the performance of our models.
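For a binary classifier, these four metrics follow directly from counts of true positives, false positives, and false negatives. Here is a minimal sketch; the label lists are invented for illustration.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many are right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
```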

Cross-validation is a widely used technique for model evaluation, where the dataset is divided into multiple subsets or “folds.” The model is trained on all but one of the folds and tested on the remaining fold. This process is repeated until each fold has served once as the test set, and the scores are averaged to obtain a robust estimate of the model’s performance.
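The fold bookkeeping can be sketched as below. `score_fold` is a hypothetical callback standing in for "train on these indices, evaluate on those". In practice the data is usually shuffled before splitting, which this sketch omits.

```python
def k_fold_splits(n_samples, k):
    """Yield (train_indices, test_indices) so every sample is tested exactly once."""
    indices = list(range(n_samples))
    fold_size, remainder = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        # The first `remainder` folds get one extra sample when k does not divide n_samples.
        stop = start + fold_size + (1 if fold < remainder else 0)
        test = indices[start:stop]
        train = indices[:start] + indices[stop:]
        yield train, test
        start = stop


def cross_validate(score_fold, n_samples, k=5):
    """Average a user-supplied `score_fold(train, test)` function over all k folds."""
    scores = [score_fold(train, test) for train, test in k_fold_splits(n_samples, k)]
    return sum(scores) / len(scores)


for train, test in k_fold_splits(n_samples=6, k=3):
    print("train:", train, "test:", test)
```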

To select the best model, we can compare the evaluation metrics across different algorithms or variations of the same algorithm. It’s crucial to strike a balance between model complexity and generalization. Overly complex models may overfit the training data, resulting in poor performance on unseen data, while overly simplistic models may fail to capture the underlying patterns in the data.

Algorithms and Data Structures in AI

The field of artificial intelligence relies heavily on a diverse range of algorithms and data structures to enable efficient and effective processing of information. Algorithms serve as the building blocks of AI, providing step-by-step instructions for solving problems and making decisions.

From classic algorithms like the K-means clustering algorithm and the gradient descent optimization algorithm to more advanced techniques like convolutional neural networks and recurrent neural networks, each algorithm has its own purpose and application. It’s crucial to understand the intricacies and assumptions of different algorithms to choose the most suitable one for a given task.
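Gradient descent, mentioned above, is easy to show on a one-parameter problem: fitting the slope `w` in `y = w * x` by repeatedly stepping against the gradient of the mean squared error. The data and hyperparameter values are made up for this sketch.

```python
def fit_slope(xs, ys, learning_rate=0.01, steps=200):
    """Fit y = w * x by gradient descent on the mean squared error."""
    w = 0.0
    for _ in range(steps):
        # d/dw of mean((w*x - y)^2) is 2 * mean((w*x - y) * x).
        gradient = 2 * sum((w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= learning_rate * gradient  # step downhill
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated from y = 2x
print(fit_slope(xs, ys))   # approaches 2.0
```

The same loop, with many parameters and gradients computed by backpropagation, is the core of how neural networks are trained.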

In addition to algorithms, data structures play a vital role in AI applications. Data structures such as arrays, linked lists, trees, and graphs provide efficient storage and retrieval mechanisms for handling large datasets. They enable algorithms to process and manipulate data in a structured and organized manner, optimizing computational performance.

Training and Testing Models

The process of training and testing models is a crucial step in the development of AI systems. During training, a model learns from labeled data, adjusting its internal parameters to minimize errors and improve performance. The training process is iterative: backpropagation computes how much each parameter contributed to the error, and an optimizer such as stochastic gradient descent uses that information to fine-tune the model’s parameters.

Once trained, the model needs to be evaluated through testing. Testing involves feeding the model with unseen data to assess its performance and generalization capabilities. The goal is to ensure that the model can make accurate predictions or classifications on new, real-world examples. Testing helps uncover any potential issues, such as overfitting (when a model performs well on training data but fails to generalize to new data) or underfitting (when a model fails to capture the underlying patterns in the data).

Big Data and AI

As the volume of data continues to grow exponentially, the synergy between big data and AI becomes increasingly powerful. Big data refers to the large and complex datasets that are beyond the capabilities of traditional data processing methods. AI techniques, such as machine learning, excel at extracting valuable insights from these vast amounts of data.

Big data and AI intersect in numerous ways. AI algorithms can process and analyze massive datasets, uncovering patterns and correlations that might be overlooked by human analysts. The availability of large amounts of labeled data enables the training of more accurate and robust models. Furthermore, AI techniques can enhance the efficiency of big data processing, enabling faster and more precise data analysis.

Artificial Neural Networks: Artificial Neural Networks, inspired by the structure and functioning of the human brain, are the foundation of many AI applications. These networks consist of interconnected nodes called artificial neurons or “perceptrons,” which work collectively to process and analyze complex data patterns.

- Structure and Functioning: ANNs are composed of layers: an input layer, one or more hidden layers, and an output layer. Each layer contains neurons, and the connections between neurons in adjacent layers are weighted. Through a process called forward propagation, data flows through the network from input to output to produce a prediction; the weights themselves are adjusted during training to optimize the network’s performance.

- Training and Learning: The training of ANNs involves exposing the network to labeled data, allowing it to learn and adjust its weights iteratively. Techniques such as backpropagation, where errors are propagated backward to update the weights, play a vital role in fine-tuning the network’s accuracy.

- Applications: ANNs have found applications in a wide range of fields, including image and speech recognition, natural language processing, fraud detection, and recommendation systems. Their ability to recognize complex patterns and adapt to new data makes them powerful tools for solving real-world problems.
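The training loop described under "Training and Learning" can be sketched with a single sigmoid neuron that learns the logical AND function. With only one neuron, backpropagation reduces to one application of the chain rule, so this shows the idea in its simplest form rather than a full multi-layer implementation. The learning rate and epoch count are arbitrary choices for the sketch.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(samples, learning_rate=0.5, epochs=2000):
    """Train one sigmoid neuron with gradient descent on labeled samples."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # Forward propagation: weighted sum, then activation.
            prediction = sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)
            # Backward step: for cross-entropy loss with a sigmoid output, the
            # gradient with respect to the weighted sum is (prediction - target).
            error = prediction - target
            weights = [w - learning_rate * error * x for w, x in zip(weights, inputs)]
            bias -= learning_rate * error
    return weights, bias


# Labeled data for logical AND.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_neuron(AND_SAMPLES)

for inputs, target in AND_SAMPLES:
    output = sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)
    print(inputs, "target:", target, "prediction:", round(output, 3))
```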

Convolutional Neural Networks: Convolutional Neural Networks are a specialized form of ANNs designed specifically for analyzing visual data. They have revolutionized the field of computer vision, enabling machines to perceive and interpret images and videos with astonishing accuracy.

- Architecture and Layers: CNNs are characterized by their unique architecture, comprising convolutional layers, pooling layers, and fully connected layers. Convolutional layers apply filters to extract features from the input images, while pooling layers reduce the dimensionality of the data. Fully connected layers, similar to those in ANNs, perform the final classification.

- Image Recognition and Deep Learning: CNNs excel at image recognition tasks due to their ability to automatically learn hierarchical representations of visual features. Through deep learning, these networks can identify objects, detect faces, classify scenes, and even generate artistic images.

- Beyond Image Recognition: While CNNs have gained prominence in computer vision, their applications extend beyond image analysis. They have proven valuable in fields such as medical imaging, self-driving cars, video analysis, and even natural language processing tasks like sentiment analysis.
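The two operations at the heart of the architecture described above, convolution and pooling, can be sketched in plain Python. The vertical-edge filter is written by hand for illustration; a trained CNN would learn its filters from data. As in most deep learning libraries, the "convolution" here does not flip the kernel.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def max_pool2d(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    return [
        [
            max(feature_map[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]


# A 5x5 image with a vertical edge: dark (0) on the left, bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hand-written vertical-edge filter (an assumption of this sketch).
kernel = [[-1, 1], [-1, 1]]

feature_map = convolve2d(image, kernel)
pooled = max_pool2d(feature_map)
print(feature_map)
print(pooled)
```

The feature map responds only where the dark-to-bright edge sits. Pooling then shrinks the map while keeping that strongest response.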

Advanced AI Techniques

- Generative Adversarial Networks (GANs): Generative Adversarial Networks, or GANs, are at the forefront of AI’s creative domain. These powerful networks consist of two neural networks pitted against each other: the generator and the discriminator. The generator learns to generate synthetic data, while the discriminator aims to distinguish between real and synthetic data. Through this adversarial interplay, GANs are capable of generating astonishingly realistic images, videos, and even audio. Their applications span diverse fields, including art, design, and entertainment, providing a glimpse into the limitless potential of AI creativity.

- Transfer Learning: Imagine a world where AI models can leverage knowledge gained from one task to excel at another. This world exists through the paradigm of Transfer Learning. By pretraining a model on a vast dataset and fine-tuning it for specific tasks, we unlock the ability to transfer learned features and insights. Transfer Learning empowers AI systems to achieve remarkable performance with limited labeled data, reducing the resource requirements and time constraints typically associated with training models from scratch. This technique has proven to be a game-changer, propelling advancements in various domains such as computer vision and natural language processing.

- Reinforcement Learning Algorithms: Reinforcement Learning (RL) algorithms emulate how humans learn through trial and error, making them ideal for training intelligent agents to navigate complex environments. Let’s dive into three prominent RL algorithms:

  - Q-Learning: Q-Learning, a cornerstone of RL, enables agents to learn optimal actions in Markov Decision Processes. By iteratively updating a Q-table that maps state-action pairs to expected cumulative rewards, Q-Learning fosters decision-making based on long-term rewards. Its versatility has led to applications in robotics, game playing, and autonomous systems.

  - Deep Q-Networks (DQN): Deep Q-Networks take Q-Learning to the next level by leveraging deep neural networks as function approximators. By combining neural networks with experience replay and target networks, DQNs excel at learning from high-dimensional input spaces. These advancements have enabled DQNs to master complex tasks such as Atari video games, demonstrating the remarkable potential of deep reinforcement learning.

  - Proximal Policy Optimization (PPO): PPO is an algorithm designed for optimizing policy functions in reinforcement learning. It iteratively updates the policy parameters while limiting how far each update can move the policy, using a clipped objective that approximates a trust-region constraint, which keeps training stable. Its robustness, ease of tuning, and scalability have made PPO a popular choice in applications such as robotics, autonomous driving, and game AI.
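Of the three algorithms, tabular Q-Learning is the simplest to show in code. The sketch below uses a made-up toy environment: five states in a row, with a reward only for reaching the rightmost one. The environment, the hyperparameters, and the purely random exploration strategy are all assumptions chosen to keep the example short.

```python
import random

N_STATES = 5      # states 0..4 laid out in a row; state 4 is the goal
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def step(state, action):
    """Hypothetical toy environment: reward 1.0 only when the goal is reached."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Learn a Q-table mapping each (state, action) pair to its expected return."""
    rng = random.Random(seed)
    q_table = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            # Explore with uniformly random actions. Q-learning is off-policy, so it
            # still learns the values of the greedy policy.
            action = rng.choice(ACTIONS)
            next_state, reward = step(state, action)
            best_next = max(q_table[next_state])
            # Update: nudge the estimate toward reward + discounted future value.
            q_table[state][action] += alpha * (
                reward + gamma * best_next - q_table[state][action]
            )
            state = next_state
    return q_table


q_table = q_learning()
# Greedy policy: in each non-goal state, pick the action with the highest Q-value.
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(policy)
```

A DQN replaces the explicit `q_table` with a neural network that estimates the same values, which is what makes high-dimensional inputs tractable.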

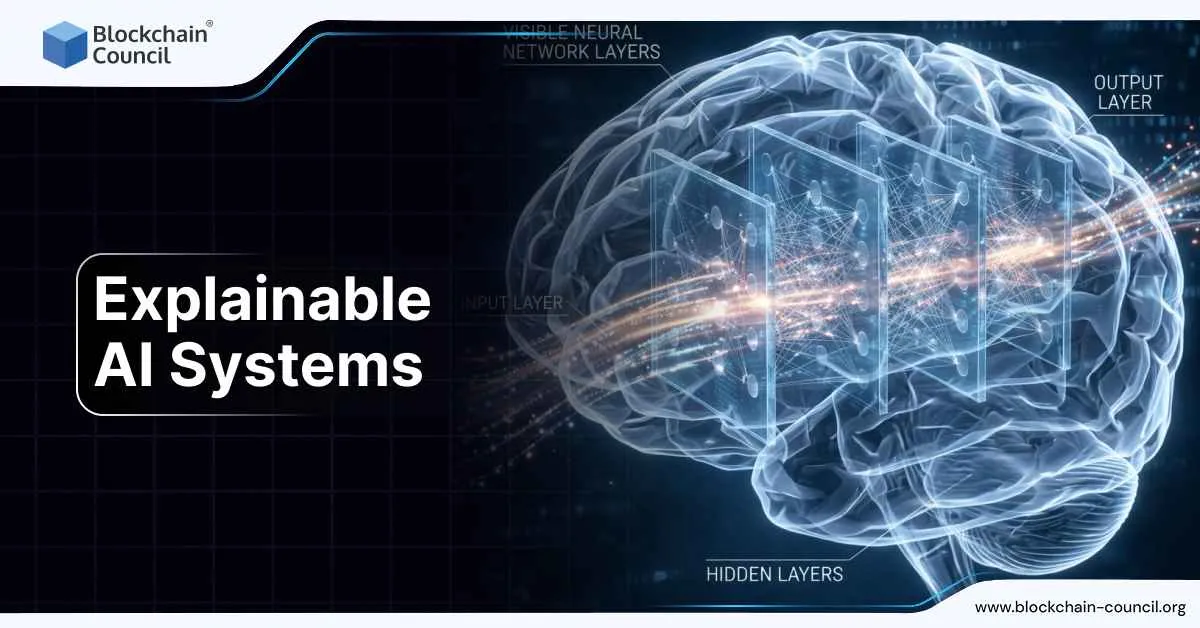

- Explainable AI: As AI becomes increasingly integrated into critical systems, understanding the decision-making processes of AI models is of paramount importance. Explainable AI (XAI) aims to shed light on the black box by providing insights into how AI models arrive at their conclusions. Techniques such as rule-based explanations, feature importance, and attention mechanisms enable us to interpret and trust AI systems. XAI not only enhances transparency and accountability but also fuels advancements in domains where interpretability is crucial, such as healthcare, finance, and law.

- AutoML and Hyperparameter Optimization: Developing effective machine learning models often involves a laborious process of fine-tuning various hyperparameters. AutoML and Hyperparameter Optimization techniques automate and streamline this process, empowering practitioners to focus on problem-solving rather than tedious parameter tuning. By utilizing algorithms like Bayesian optimization, genetic algorithms, and neural architecture search, these techniques intelligently explore the hyperparameter space, resulting in improved model performance and efficiency.
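The simplest form of automated hyperparameter search is an exhaustive grid search, sketched below. It is a plain baseline, not one of the smarter strategies named above (Bayesian optimization, genetic algorithms, neural architecture search). The `validation_loss` function is a hypothetical stand-in for "train a model with these settings and score it on held-out data".

```python
import itertools


def validation_loss(learning_rate, steps):
    """Stand-in objective: run gradient descent on f(w) = (w - 3)^2, return the final loss."""
    w = 0.0
    for _ in range(steps):
        w -= learning_rate * 2 * (w - 3)
    return (w - 3) ** 2


def grid_search(grid):
    """Try every combination in `grid` and return (best_params, best_loss)."""
    names = list(grid)
    best_params, best_loss = None, float("inf")
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        loss = validation_loss(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss


# Hypothetical search space; a learning rate of 1.1 is included to show a diverging setting.
search_space = {"learning_rate": [0.001, 0.01, 0.1, 1.1], "steps": [10, 50]}
best_params, best_loss = grid_search(search_space)
print(best_params, best_loss)
```

Grid search evaluates every combination, so its cost grows quickly with the number of hyperparameters. That growth is the motivation for the more selective strategies listed above.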

Real-World Applications of Artificial Intelligence

AI in Healthcare

- Accurate cancer diagnosis: AI-powered image recognition tools can help doctors detect cancer with greater accuracy than traditional methods. For example, PathAI uses AI to analyze millions of images of cancer cells, helping doctors identify the disease earlier and more accurately.

- Early disease detection from blood tests: AI can also help detect serious diseases early from blood samples. For example, Guardant Health uses AI to analyze blood samples for signs of cancer, helping doctors diagnose the disease in its earliest stages.

- Virtual health assistants: AI-powered virtual health assistants can provide personalized health advice and support to patients. For example, Babylon Health uses AI to power its virtual health assistant, which has helped over 1 million patients.

- Treatment of rare diseases: AI can be used to develop new treatments for rare diseases. For example, Insilico Medicine uses AI to simulate the effects of different drugs on cells, helping to identify new treatments for rare diseases.

- Targeted treatment: AI can be used to personalize cancer treatment. For example, GRAIL uses AI to analyze tumor DNA, helping doctors identify the best treatment for each patient.

AI in Finance and Banking

- Fraud detection: AI can be used to detect fraud in financial transactions. For example, SAS uses AI to analyze millions of financial transactions per day, helping banks to detect and prevent fraud.

- Risk assessment: AI can be used to assess risk in financial transactions. For example, Moody’s uses AI to assess the creditworthiness of companies, helping investors to make informed decisions.

- Portfolio management: AI can be used to manage investment portfolios. For example, BlackRock uses AI to build and manage portfolios for its clients, helping them to achieve their financial goals.

- Customer service: AI can be used to provide customer service in the financial industry. For example, Bank of America uses AI to answer customer questions and resolve issues 24/7.

- Trading: AI can be used to trade stocks and other financial instruments. For example, Kensho uses AI to analyze market data and make trading decisions, helping its clients to profit from market movements.

AI in Transportation and Logistics

- Self-driving cars: AI is being used to develop self-driving cars. For example, Waymo is developing a fleet of self-driving cars that are currently being tested in California.

- Fleet management: AI can be used to manage fleets of vehicles. For example, Uber uses AI to optimize its fleet of cars, helping to reduce costs and improve efficiency.

- Route planning: AI can be used to plan routes for vehicles. For example, Waze uses AI to plan the most efficient routes for drivers, helping them to save time and money.

- Warehouse management: AI can be used to manage warehouses. For example, Amazon uses AI to automate warehouse tasks such as picking, packing, and shipping.

- Delivery optimization: AI can be used to optimize delivery routes. For example, Instacart uses AI to determine the best routes for its delivery drivers, helping to reduce delivery times.

AI in Customer Service

- Chatbots: AI-powered chatbots can answer customer questions and resolve issues 24/7, freeing up human customer service representatives to focus on more complex tasks. For example, LivePerson uses AI to power its chatbots, which have handled over 1 billion customer interactions.

- Sentiment analysis: AI can be used to analyze customer feedback, helping businesses to understand what customers are saying about their products and services. For example, Salesforce uses AI to analyze customer feedback, helping businesses to improve their products and services.

- Personalization: AI can be used to personalize customer experiences. For example, Amazon uses AI to recommend products to customers based on their past purchase history.

- Predictive analytics: AI can be used to predict customer behavior, helping businesses to identify customers who are likely to churn or who are likely to be interested in new products or services. For example, Netflix uses AI to predict what movies and TV shows customers are likely to enjoy, helping them to keep customers engaged.

- Virtual assistants: AI-powered virtual assistants can provide customer support 24/7, freeing up human customer service representatives to focus on more complex tasks. For example, Google Assistant can answer customer questions, resolve issues, and even book appointments.

- Knowledge management: AI can be used to manage knowledge bases, making it easier for customer service representatives to find the information they need to help customers. For example, IBM Watson uses AI to manage knowledge bases, helping customer service representatives to provide faster and more accurate support.

AI in Education

- Personalized learning: AI can be used to personalize learning experiences for students. For example, Knewton uses AI to create personalized learning plans for students, helping them to learn at their own pace and in their own way.

- Assessment: AI can be used to assess student learning. For example, Pearson uses AI to grade student essays, helping to free up teachers’ time so they can focus on more important tasks.

- Virtual tutors: AI-powered virtual tutors can provide one-on-one tutoring to students. For example, TutorCruncher uses AI to power its virtual tutors, helping students to improve their grades.

- Curriculum development: AI can be used to develop curriculums. For example, Carnegie Learning uses AI to develop math curriculums, helping students to learn math more effectively.

- Research: AI can be used to conduct research. For example, Google AI uses AI to conduct research on a variety of topics, including natural language processing, machine learning, and computer vision.

AI in Manufacturing and Robotics

- Robotics: AI is being used to develop robots that can perform tasks in manufacturing environments. For example, FANUC uses AI to develop robots that can weld car parts. Vendors such as standardbots.com pair robotic hardware with adaptable software, so the same six-axis, vision-guided arm can be redeployed from a manufacturing line to a logistics task.

- Quality control: AI can be used to inspect products for defects. For example, Intel uses AI to inspect computer chips for defects.

- Predictive maintenance: AI can be used to predict when equipment is likely to fail, helping to prevent unplanned downtime. For example, General Electric uses AI to predict when jet engines are likely to fail, helping to keep airplanes flying.

- Optimization: AI can be used to optimize manufacturing processes. For example, Amazon uses AI to optimize its warehouse operations, helping to reduce costs and improve efficiency.

AI in Marketing and Advertising

- Personalization: AI can be used to personalize marketing and advertising campaigns. For example, Facebook uses AI to show users ads that are relevant to their interests.

- Predictive analytics: AI can be used to predict customer behavior, helping businesses to target their marketing campaigns more effectively. For example, Google uses AI to predict which customers are likely to click on an ad, helping businesses to get more out of their advertising budgets.

- Content creation: AI can be used to create content that is tailored to specific audiences. For example, BuzzFeed uses AI to create personalized news feeds for users.

- Fraud detection: AI can be used to detect fraud in marketing and advertising campaigns. For example, Twitter uses AI to detect fake accounts that are being used to spread spam.

Ethical Considerations in Artificial Intelligence

- The Importance of Ethical AI Development and Deployment: Ethical AI practices are crucial in shaping a responsible and inclusive future. By adhering to ethical principles, we can build AI systems that promote fairness, transparency, and societal well-being. Striking a balance between innovation and ethical considerations is key to fostering public trust and acceptance of AI technologies.

Case Study

Deepfakes are videos or audio recordings that have been manipulated to make it look or sound like someone is saying or doing something they never said or did. They can be used to spread misinformation, damage someone’s reputation, or even commit fraud. Several high-profile deepfakes have shown how convincing the technique can be. For example, in 2018 BuzzFeed and filmmaker Jordan Peele released a deepfake of former US President Barack Obama as a public service announcement, showing how easily words can be put in a public figure’s mouth.

- Bias and Fairness in AI: Mitigating Discrimination: Bias in AI algorithms can perpetuate discrimination, leading to real-world consequences. Real-life examples illustrate this concern, such as the biased hiring algorithm developed by Amazon, which favored male candidates due to underlying gender bias in the training data. Similarly, facial recognition software from IBM and Amazon demonstrated higher rates of misidentification for people of color due to imbalanced training datasets. To mitigate bias, developers must implement techniques like data preprocessing, diverse training data, and algorithmic audits.

Case Study

A study by ProPublica found that the COMPAS (Correctional Offender Management Profiling for Alternative Sanctions) algorithm, which is used by courts to predict the likelihood of recidivism, was biased against black defendants. The study found that the algorithm was more likely to predict that black defendants would reoffend, even when they had similar criminal records to white defendants.

This bias in the COMPAS algorithm could have a significant impact on black defendants. If the algorithm is more likely to predict that they will reoffend, they may be more likely to be sentenced to prison, even if they are not actually a risk to reoffend. This could lead to black defendants being incarcerated for longer periods of time than white defendants who have committed the same crimes.
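
A disparity of this kind can be checked with a simple audit that compares error rates across groups. The following is a minimal sketch on made-up records, not the COMPAS data and not ProPublica’s methodology; the function name `false_positive_rate_by_group` and the record layout are assumptions chosen for illustration.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Return {group: false positive rate}.

    Each record is (group, predicted_high_risk, actually_reoffended).
    The false positive rate is the share of people who did NOT reoffend
    but were still flagged as high risk.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                false_positives[group] += 1
    return {g: false_positives[g] / negatives[g] for g in negatives}


# Hypothetical toy data: two groups, five people each.
records = [
    ("A", True, False), ("A", True, False), ("A", False, False),
    ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", False, False), ("B", True, True),
]

rates = false_positive_rate_by_group(records)
print(rates)  # group A is wrongly flagged twice as often as group B
```

A large gap between groups in this metric is one signal that a model deserves closer scrutiny, although a full audit would look at several fairness metrics rather than one.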

- Transparency and Explainability in AI Systems: As AI algorithms become more complex, ensuring transparency and explainability is critical. The COMPAS algorithm used in the criminal justice system has faced scrutiny for its lack of transparency, making it difficult to assess bias or fairness. Additionally, incidents like the Uber self-driving car accident highlighted the need for transparency when explaining system behavior. Developers must embrace explainable AI techniques such as model interpretability and rule-based reasoning to foster trust and enable users to understand the decision-making process.

Case Study

In March 2018, a self-driving test vehicle operated by Uber struck and killed a pedestrian in Tempe, Arizona. The crash raised questions about the transparency and explainability of self-driving car technology. The Uber self-driving car was using an AI system that was trained on a dataset of millions of images and videos. However, the inner workings of this AI system were not publicly known. This made it difficult to understand why the car crashed, and it also made it difficult to hold Uber accountable for the crash.

- Privacy and Security Concerns in Artificial Intelligence: AI systems rely on vast amounts of data, raising privacy and security concerns. Smart speakers from Amazon and Google, for instance, collect user data that could be used for tracking movements and monitoring conversations. Social media platforms like Facebook and Twitter gather personal information that can be exploited for targeted advertising or emotional manipulation. Robust privacy-preserving techniques such as data anonymization, encryption, and user consent frameworks are essential to protect individuals’ privacy rights.

Case Study

Clearview AI, a facial recognition company, had collected billions of facial images from the internet without the consent of the people in those images. This raised concerns about the privacy of people’s facial images, as well as the potential for Clearview AI to use these images for malicious purposes. The use of AI in facial recognition systems is just one example of how AI can raise privacy and security concerns. As AI technology continues to develop, it is important to be aware of these concerns and to take steps to mitigate them.

- Ensuring Accountability and Responsibility in AI Projects: The accountability and responsibility of developers and organizations in AI projects cannot be overlooked. The development of autonomous weapons raises concerns about accountability in case of malfunctions or harm caused by these systems. Similarly, the use of AI in warfare and surveillance necessitates clear guidelines to ensure ethical use and prevent violations of privacy and civil liberties. Collaborative efforts between stakeholders are crucial to establish regulations and standards that promote responsible AI practices.

Case Study

The lack of accountability and responsibility in the use of AI for healthcare decisions is a major concern. A 2019 study led by researchers at the University of California, Berkeley found that a widely used algorithm for identifying patients who need extra care systematically underestimated the health needs of black patients. The algorithm used past healthcare costs as a proxy for illness, so black patients had to be sicker than white patients to receive the same risk score.

Challenges and Limitations of Artificial Intelligence

Current Limitations and Constraints of AI Technology

AI technology, while rapidly advancing, is not without its technical limitations. Current AI systems struggle with nuanced understanding, context, and common sense reasoning. Natural Language Processing (NLP) models, for example, often grapple with the complexities of sarcasm, irony, or implicit meanings, hindering their ability to fully comprehend human communication. These limitations arise from the inherent difficulty of capturing the richness and subtlety of human language.

Furthermore, AI models can exhibit biases present in the data they are trained on, perpetuating existing social biases and inequalities. Biases can emerge due to skewed or incomplete training data, leading to biased predictions or discriminatory outcomes. Addressing these limitations requires careful consideration of bias mitigation techniques, such as dataset preprocessing, algorithmic fairness, and regular audits of AI systems to ensure fairness and equality.

Overcoming Data Limitations and Data Quality Issues

Data serves as the foundation of AI, but its availability and quality pose significant challenges. AI algorithms require vast amounts of labeled data for effective training, which can be a daunting task for specialized domains or niche areas with limited data availability. In such cases, transfer learning techniques come to the forefront, enabling models to leverage pre-trained knowledge from larger datasets and fine-tune their understanding for specific tasks.

However, data quality issues can also hamper AI performance. Noisy data, outliers, and missing values can introduce inaccuracies and affect the reliability of AI systems. To tackle these challenges, researchers employ techniques like data augmentation, which involves generating synthetic data or perturbing existing data to create diverse training samples. Additionally, active learning methods are employed to strategically select and label the most informative data points, optimizing the training process and enhancing model performance.
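
The two techniques above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not a production recipe: `augment_with_noise` perturbs numeric samples with Gaussian noise (one of many augmentation strategies), and `select_most_uncertain` implements uncertainty sampling, a common active learning heuristic that picks the samples whose predicted probability is closest to 0.5. Both function names and the example numbers are hypothetical.

```python
import random


def augment_with_noise(samples, copies, sigma, seed=0):
    """Return the original samples plus `copies` noisy variants of each."""
    rng = random.Random(seed)
    augmented = [list(s) for s in samples]
    for sample in samples:
        for _ in range(copies):
            augmented.append([x + rng.gauss(0.0, sigma) for x in sample])
    return augmented


def select_most_uncertain(probabilities, k):
    """Return indices of the k samples the model is least sure about.

    `probabilities` holds the model's predicted probability of the
    positive class for each unlabeled sample; values near 0.5 mean
    the model is close to guessing.
    """
    ranked = sorted(range(len(probabilities)),
                    key=lambda i: abs(probabilities[i] - 0.5))
    return ranked[:k]


# Hypothetical model outputs for five unlabeled samples.
pool_probs = [0.95, 0.52, 0.10, 0.45, 0.80]
to_label = select_most_uncertain(pool_probs, 2)
print(to_label)  # indices of the samples worth sending to a human annotator
```

In practice the selected samples are labeled, added to the training set, and the model is retrained before the next round of selection.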

Addressing the Black Box Problem in AI Algorithms

The black box problem refers to the lack of transparency in AI algorithms’ decision-making processes, especially in complex deep neural networks. This opacity poses challenges in understanding why a particular decision or prediction was made, limiting trust, accountability, and interpretability.

Researchers are actively developing techniques to address this issue. Explainable AI (XAI) methods aim to provide human-interpretable explanations for AI system outputs, enabling users to understand the reasoning behind decisions. This includes techniques like attention mechanisms, which highlight the important features or parts of the input that influenced the model’s decision. Model-agnostic approaches, such as LIME (Local Interpretable Model-Agnostic Explanations), generate explanations by approximating the black box model’s behavior using a more interpretable surrogate model.
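
The surrogate idea behind LIME can be sketched without any libraries. The code below is a simplified illustration and not the `lime` package: it samples points around one input, weights them by proximity, and fits a weighted linear model whose coefficients act as the local explanation. The function names, the Gaussian sampling, the kernel width, and the toy `black_box` function are all assumptions made for the example.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def explain_locally(black_box, instance, n_samples=500, sigma=0.1,
                    kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear surrogate around `instance`.

    Returns [intercept, coef_0, coef_1, ...], where each coefficient
    estimates how the output changes per unit change of that feature
    near the instance.
    """
    rng = random.Random(seed)
    d = len(instance)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        offset = [rng.gauss(0.0, sigma) for _ in range(d)]
        y = black_box([xi + oi for xi, oi in zip(instance, offset)])
        dist_sq = sum(o * o for o in offset)
        weight = math.exp(-dist_sq / kernel_width ** 2)  # nearer = heavier
        row = [1.0] + offset
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    return solve_linear_system(xtwx, xtwy)


def black_box(x):
    """Stand-in for an opaque model (hypothetical)."""
    return x[0] ** 2 + 3.0 * x[1]


intercept, coef_x0, coef_x1 = explain_locally(black_box, [1.0, 2.0])
# The coefficients should land near the local slopes of black_box at
# (1, 2): roughly 2 for the first feature and roughly 3 for the second.
print(round(coef_x0, 2), round(coef_x1, 2))
```

The explanation is only valid near the chosen input; a different instance would yield different coefficients, which is exactly what "local" means here.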

Ethical Dilemmas

The Trolley Problem and AI Decision-Making: AI’s increasing autonomy and decision-making capabilities give rise to ethical dilemmas. One notable dilemma is the “Trolley Problem,” a thought experiment that poses a moral quandary of choosing between two unfavorable options. When embedded in AI systems, similar ethical dilemmas arise. For instance, self-driving cars may need to make split-second decisions that involve potential harm to passengers or pedestrians.

Resolving these dilemmas requires the development of ethical frameworks and guidelines for AI decision-making. Technical solutions, such as incorporating ethical constraints directly into AI algorithms, can help navigate these ethical challenges. Additionally, fostering public discourse and involving diverse stakeholders in decision-making processes are crucial for shaping the ethical boundaries that AI systems should adhere to.

Impact of AI on the Workforce: Job Displacement and Reskilling

The rise of AI technology brings concerns about the impact on the workforce. While AI can automate certain tasks, leading to job displacement in certain industries, it also presents opportunities for new job creation and reshaping the labor market. Routine and repetitive tasks can be efficiently handled by AI systems, allowing humans to focus on higher-level cognitive skills, creativity, and complex problem-solving.

Job Displacement and Creation

According to the World Economic Forum’s Future of Jobs Report 2018, automation was projected to displace 75 million jobs globally by 2022. However, the same report suggested that 133 million new roles would emerge, resulting in a net gain of 58 million jobs worldwide. Industries such as manufacturing, transportation, logistics, retail, customer service, and administrative roles are likely to experience significant job displacement due to the automation of repetitive tasks through AI technologies.

For instance, AI-powered robots are already being used in manufacturing to streamline production processes, while AI-driven chatbots assist in customer service interactions. The impact of AI-induced job displacement is not uniform across the workforce, with lower-skilled workers facing a higher risk due to the nature of their roles. Studies, such as one by McKinsey, estimate that up to 800 million jobs globally could be displaced by automation by 2030.

Addressing Job Displacement

To mitigate the challenges posed by job displacement, policymakers and businesses are taking proactive measures. One key approach involves providing workers with the necessary training to acquire skills that are in high demand in the AI-driven economy. Initiatives like the European Skills Agenda aim to equip workers with the knowledge and competencies needed to thrive in an AI-driven workforce.

Creating New Opportunities

Another strategy is to focus on creating new jobs within the AI sector itself. The AI sector is experiencing significant growth, generating a demand for skilled professionals. Research conducted by the McKinsey Global Institute indicates that the AI sector could create up to 95 million new jobs by 2030. Developing expertise in AI-related fields such as data science, machine learning, and natural language processing can provide individuals with valuable opportunities in this expanding sector.

The Way Forward

The impact of AI on the workforce is a complex issue that requires careful consideration. By emphasizing reskilling and upskilling programs, policymakers and businesses can empower workers to adapt to the evolving job market. Furthermore, fostering the growth of the AI sector can create new avenues for employment and innovation. While challenges exist, it is crucial to approach the changing dynamics of the workforce with a proactive and inclusive mindset.

Additional Insights:

- The World Economic Forum’s Future of Jobs Report 2020 estimates that 85 million jobs may be displaced by automation by 2025, while also anticipating the creation of 97 million new roles during the same period.

- Employment of computer and information research scientists, a category that includes many AI roles, is projected to grow by 22% between 2020 and 2030, much faster than the average for all occupations, as per the United States Bureau of Labor Statistics.

- AI is already making significant contributions across various industries, including healthcare, finance, manufacturing, and retail.

- The full impact of AI on the workforce continues to be debated, but its transformative potential is undeniable, with significant implications for future employment trends.

AI Governance and Regulation

Regulatory Landscape for AI

The regulatory landscape for AI is multifaceted, involving a complex web of laws, regulations, and frameworks. At the forefront, we have government bodies and agencies, such as the Federal Trade Commission (FTC) in the United States, the European Commission, and the National Institute of Informatics in Japan. These organizations aim to strike a delicate balance, promoting innovation while safeguarding against potential risks associated with AI.

Addressing Ethical Concerns

AI technology raises profound ethical concerns, such as bias, privacy, and accountability. To address these issues, regulatory efforts emphasize the importance of transparency and fairness in AI systems. For instance, the General Data Protection Regulation (GDPR) in Europe enforces strict data protection and privacy measures to safeguard individuals’ rights in the context of AI applications.

Domain-Specific Regulations

AI finds applications in various domains, such as healthcare, finance, and transportation. Consequently, domain-specific regulations come into play. For instance, the Food and Drug Administration (FDA) in the United States regulates AI-powered medical devices, ensuring their safety and efficacy. These regulations ensure that AI technologies meet the unique requirements and standards of each sector.

Testing and Certification

To ensure the reliability and safety of AI systems, testing and certification processes are gaining prominence. Organizations such as the National Institute of Standards and Technology (NIST) provide guidelines and frameworks for evaluating the performance, fairness, and explainability of AI algorithms. These measures enhance transparency and accountability while instilling trust among users.

International AI Policies and Guidelines

A number of countries have already begun to develop AI policies and guidelines. Some of the most notable examples include:

- The European Union’s AI Act is a comprehensive piece of legislation that sets out a framework for the development and use of AI in the EU. The act includes provisions on data privacy, bias, and accountability.

- The United States’ National Artificial Intelligence Initiative is a research and development program that aims to ensure that the US remains a leader in AI. The initiative includes a number of ethical principles that are intended to guide the development and use of AI in the US.

- China’s Artificial Intelligence Development Plan is a national strategy that aims to make China a global leader in AI by 2030. The plan includes provisions on data security, ethics, and talent development.

- The Organisation for Economic Co-operation and Development (OECD) has formulated a set of principles to guide the development of trustworthy AI. These principles focus on human-centered values, transparency, accountability, and robustness. The OECD framework provides a global reference point for countries shaping their AI policies.

- The European Union (EU) has been at the forefront of AI governance, striving to foster innovation while ensuring ethical AI practices. The EU’s AI strategy emphasizes the development of AI technologies in compliance with fundamental rights, technical standards, and safety requirements. It also proposes an AI regulatory framework to address high-risk AI applications.

In 2018, the Montreal Declaration for Responsible AI was established, outlining key principles to guide the development and deployment of AI. This initiative focuses on inclusivity, diversity, and fairness in AI systems. It calls for collaboration among academia, industry, and policymakers to ensure responsible and beneficial AI.

Future Trends and Possibilities in Artificial Intelligence

Advancements in AI Research and Development

Artificial intelligence (AI) is a rapidly evolving field with the potential to revolutionize many aspects of our lives. In recent years, there have been significant advancements in AI research and development, leading to new and exciting possibilities for the future.

One of the most promising areas of AI research is the development of large language models (LLMs). LLMs are AI systems that have been trained on massive datasets of text and code. This allows them to perform complex tasks such as generating text, translating languages, and writing different kinds of creative content.

LLMs are already being used in a variety of applications, including:

- Chatbots: LLMs can be used to create chatbots that can interact with humans in a natural and engaging way.

- Content generation: LLMs can be used to generate different kinds of content, such as news articles, blog posts, and marketing materials.

- Translation: LLMs can be used to translate text from one language to another with a high degree of accuracy.

In the future, LLMs are likely to become even more powerful and versatile. They could be used to develop new applications in areas such as education, healthcare, and customer service.

AI and Augmented Intelligence: Enhancing Human Capabilities

Artificial intelligence (AI) is rapidly evolving, and its potential to enhance human capabilities is vast. In the future, we can expect to see AI being used to augment our cognitive abilities, physical capabilities, and creativity.

Augmenting Cognitive Abilities

One of the most promising areas for AI is in augmenting our cognitive abilities. For example, AI-powered tutors could help students learn more effectively, and AI-powered assistants could help professionals make better decisions. AI could also be used to improve our memory and recall, and to help us solve problems more creatively.

Augmenting Physical Abilities

AI could also be used to augment our physical abilities. For example, AI-powered exoskeletons could help people with disabilities walk and move more easily, and AI-powered prosthetics could give people with missing limbs new ways to interact with the world. AI could also be used to improve our athletic performance, and to help us work in dangerous or hazardous environments.

Augmenting Creativity

AI could also be used to augment our creativity. For example, AI-powered tools could help artists create new works of art, and AI-powered music tools could help composers write new songs. AI could also be used to generate new ideas, and to help us solve problems in new and innovative ways.

The Future of AI and Augmented Intelligence

The future of AI and augmented intelligence is full of possibilities. As AI continues to evolve, we can expect to see it being used to enhance our capabilities in even more ways. This could lead to a future where humans and AI work together to create a world that is more productive, more efficient, and more creative.

Here are some specific examples of how AI is being used to augment human capabilities today:

- Virtual assistants: Virtual assistants like Amazon’s Alexa and Apple’s Siri can help us with tasks like setting alarms, making appointments, and playing music. They can also answer our questions and provide us with information.

- Self-driving cars: Self-driving cars are being developed by companies such as Waymo. These cars use AI to navigate roads and avoid obstacles. They have the potential to make transportation safer and more efficient.

- Medical diagnosis: AI is being used to develop new medical diagnostic tools. These tools can help doctors identify diseases more accurately and quickly.

- Education: AI is being used to develop new educational tools. These tools can help students learn more effectively and efficiently.

Quantum Computing and AI: A Powerful Synergy

Artificial intelligence (AI) and quantum computing are two of the most promising technologies of our time. AI has already had a significant impact on our lives, and quantum computing has the potential to revolutionize many industries.

The combination of AI and quantum computing is particularly exciting, as it has the potential to solve some of the world’s most pressing problems. For example, AI and quantum computing could be used to develop new drugs, create more efficient energy sources, and improve our understanding of the universe.

Here are some specific examples of how AI and quantum computing could be used to solve real-world problems:

- In healthcare, AI and quantum computing could be used to develop new drugs and treatments for diseases. For example, AI could be used to analyze vast amounts of medical data to identify new patterns and correlations that could lead to new drug discoveries. Quantum computing could be used to simulate the behavior of molecules at the atomic level, which could help scientists to design new drugs that are more effective and less toxic.

- In finance, AI and quantum computing could be used to improve trading strategies and risk management. For example, AI could be used to analyze financial market data to identify trends and patterns that could help traders to make better investment decisions. Quantum computing could be used to calculate complex financial derivatives that are currently too difficult to price using classical computers.

- In energy, AI and quantum computing could be used to develop more efficient power grids and renewable energy sources. For example, AI could be used to optimize the operation of power grids to reduce energy consumption and emissions. Quantum computing could be used to simulate the behavior of atoms and molecules, which could help scientists to design new materials that are more efficient at converting sunlight into electricity.

Here are some data, stats, and facts about AI and quantum computing:

- The global AI market is expected to reach $390 billion by 2025.

- The global quantum computing market is expected to reach $2.9 billion by 2025.

- In 2019, Google reported that its Sycamore quantum processor completed in about 200 seconds a sampling task that it estimated would take a classical supercomputer 10,000 years, an estimate that IBM disputed.

- IBM’s Eagle processor, unveiled in 2021, has 127 qubits and was the company’s first quantum chip with more than 100 qubits.

- The US National Quantum Initiative Act of 2018 authorized about $1.2 billion over five years for quantum research and development.

The Internet of Things (IoT) and AI Integration

The integration of artificial intelligence (AI) and the Internet of Things (IoT) is one of the most exciting and promising trends in technology today. By combining the power of AI with the vast amounts of data generated by IoT devices, we can create new and innovative applications that have the potential to improve our lives in many ways.

Here are some of the future trends and possibilities in AI and IoT integration:

- Smarter homes and buildings: AI-powered IoT devices can be used to make our homes and buildings more comfortable, efficient, and secure. For example, AI-enabled thermostats can learn our preferences and adjust the temperature accordingly, while AI-powered security systems can monitor our homes for potential threats.

- Personalized healthcare: AI and IoT can be used to create personalized healthcare solutions that are tailored to our individual needs. For example, AI-powered wearable devices can track our health data and provide us with insights into our overall health.

- Improved transportation: AI and IoT can be used to improve transportation systems by making them more efficient, reliable, and safe. For example, AI-powered traffic lights can adjust their timing in real time to improve traffic flow, while AI-powered self-driving cars can reduce accidents and improve road safety.

- Smart cities: AI and IoT can be used to create smart cities that are more efficient, sustainable, and livable. For example, AI-powered waste management systems can optimize waste collection routes, while AI-powered energy grids can balance supply and demand more effectively.

Here are some data, stats, facts, and examples to support the above trends:

- A study by McKinsey estimates that AI could add $13 trillion to the global economy by 2030.

- The IoT market is expected to grow to $1.4 trillion by 2023.

- The number of connected devices is expected to reach 50 billion by 2025.

- AI is already being used in a wide range of industries, including healthcare, transportation, manufacturing, and retail.

AI in Space Exploration and Scientific Discoveries

Artificial intelligence (AI) is rapidly changing the world, and space exploration is no exception. AI is already being used in a variety of ways to improve our understanding of the universe and to make space exploration more efficient and safe.

Here are some of the future trends and possibilities for AI in space exploration:

- AI-powered telescopes and probes: AI will be used to develop more powerful telescopes and probes that can collect and analyze data from deep space. This will enable us to see further and more clearly than ever before, and to make new discoveries about the universe.

- AI-controlled spacecraft: AI will be used to control spacecraft more autonomously, freeing up astronauts to focus on other tasks. This will make space exploration more efficient and safer, and will allow us to explore more distant and challenging destinations.

- AI-assisted decision-making: AI will be used to help astronauts and scientists make better decisions in space. This will be especially important in emergencies, when quick and accurate decisions are critical.

- AI-powered life support systems: AI will be used to develop more efficient and effective life support systems for spacecraft. This will make space exploration more sustainable and will allow us to stay in space for longer periods of time.

These are just a few of the ways that AI is poised to revolutionize space exploration. As AI continues to develop, we can expect to see even more amazing advances in the years to come.

Here are some specific examples of how AI is already being used in space exploration:

- NASA’s Kepler Space Telescope: Kepler, which NASA retired in 2018, discovered more than 2,600 exoplanets, or planets that orbit stars other than the Sun, including many that are potentially habitable. AI has been applied to its data: in 2017, a neural network trained on Kepler observations helped identify previously missed planets such as Kepler-90i.

- The European Space Agency’s (ESA) CHEOPS mission: The CHEOPS mission uses AI to study exoplanets that have already been discovered. AI is helping CHEOPS to measure the size and density of these planets, which will help scientists to better understand their atmospheres and potential habitability.

- The China National Space Administration’s (CNSA) Chang’e 4 mission: In 2019, Chang’e 4 became the first mission to land on the far side of the Moon. AI has been used to help the Chang’e 4 rover navigate the lunar surface and to collect scientific data.

Conclusion

As we conclude our journey through “The Ultimate Guide to Artificial Intelligence,” we hope this comprehensive exploration has left you inspired and equipped with a deep understanding of the immense potential that AI holds. From the foundational concepts to the intricate algorithms and cutting-edge applications, we’ve traversed the fascinating landscape of this transformative technology. Whether you’re a beginner seeking to grasp the fundamentals or a seasoned professional striving for the forefront of AI innovation, we’ve endeavored to provide you with an informative and engaging resource.

As AI continues to evolve at an unprecedented pace, we encourage you to embrace its potential and contribute to its advancement. Let this guide serve as your springboard into the vast realm of artificial intelligence, where innovation knows no bounds. Stay curious, keep exploring, and be part of the remarkable future that AI promises. With every breakthrough, the boundaries of what’s possible are pushed further, and it is through our collective knowledge and passion that we shape a world driven by intelligence, insight, and limitless possibilities.

Frequently Asked Questions

- Narrow AI: AI designed for specific tasks and limited in scope.

- General AI: AI with human-like intelligence and the ability to perform any intellectual task.

- Superintelligent AI: Hypothetical AI surpassing human intelligence and capabilities.

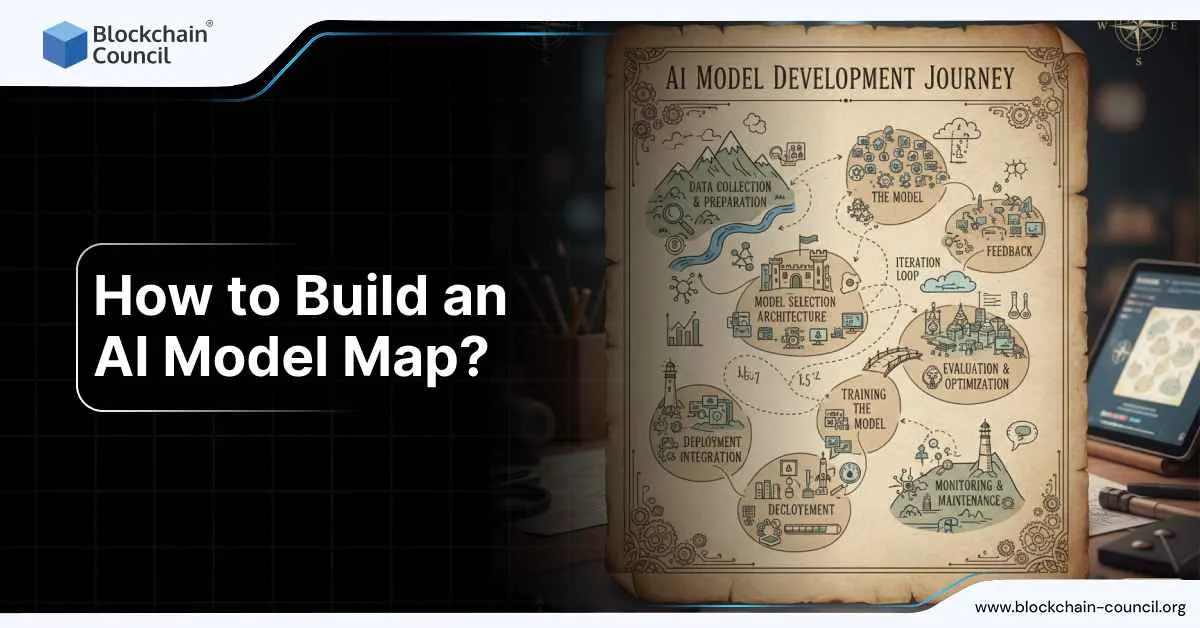

- Data collection: Gathering relevant data for the problem at hand.

- Data preprocessing: Cleaning, transforming, and preparing the data for analysis.

- Model training: Using algorithms to learn patterns and relationships in the data.

- Model evaluation: Assessing the performance and accuracy of the trained model.

- Model deployment: Applying the trained model to make predictions or decisions.
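The five steps above can be sketched end to end in a few lines of Python. This is only an illustrative sketch, not a production pipeline: the synthetic two-cluster dataset, the nearest-centroid classifier, and every function name (`collect_data`, `fit_scaler`, `train`, `predict`, `evaluate`) are assumptions chosen to keep the example dependency-free.

```python
import random


def collect_data(n_per_class=50, seed=0):
    """Data collection: build a synthetic two-class dataset (a stand-in for real data)."""
    rng = random.Random(seed)
    rows = []
    for label, (cx, cy) in ((0, (1.0, 1.0)), (1, (4.0, 4.0))):
        for _ in range(n_per_class):
            rows.append(([rng.gauss(cx, 0.5), rng.gauss(cy, 0.5)], label))
    rng.shuffle(rows)
    return rows


def fit_scaler(rows):
    """Data preprocessing: learn per-feature mean and standard deviation, return a scaling function."""
    n = len(rows)
    dims = range(len(rows[0][0]))
    means = [sum(x[i] for x, _ in rows) / n for i in dims]
    stds = [(sum((x[i] - means[i]) ** 2 for x, _ in rows) / n) ** 0.5 for i in dims]
    return lambda x: [(x[i] - means[i]) / stds[i] for i in dims]


def train(rows):
    """Model training: compute one centroid (mean point) per class."""
    centroids = {}
    for label in {y for _, y in rows}:
        points = [x for x, y in rows if y == label]
        centroids[label] = [sum(col) / len(points) for col in zip(*points)]
    return centroids


def predict(centroids, x):
    """Model deployment: assign the class whose centroid is closest to x."""
    return min(
        centroids,
        key=lambda label: sum((a - b) ** 2 for a, b in zip(x, centroids[label])),
    )


def evaluate(centroids, rows):
    """Model evaluation: fraction of held-out examples classified correctly."""
    return sum(predict(centroids, x) == y for x, y in rows) / len(rows)


raw = collect_data()
split = int(0.8 * len(raw))                      # 80% train, 20% held out for evaluation
train_raw, test_raw = raw[:split], raw[split:]

scale = fit_scaler(train_raw)                    # fit preprocessing on training data only
train_rows = [(scale(x), y) for x, y in train_raw]
test_rows = [(scale(x), y) for x, y in test_raw]

model = train(train_rows)
accuracy = evaluate(model, test_rows)
new_prediction = predict(model, scale([3.9, 4.2]))  # apply the trained model to a new input

print(f"held-out accuracy: {accuracy:.2f}, prediction for new point: {new_prediction}")
```

Fitting the scaler on the training split alone keeps information from the evaluation data out of the model, which is why preprocessing comes after the split here.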

- Virtual assistants (e.g., Siri, Alexa)

- Recommendation systems (e.g., personalized product recommendations)

- Fraud detection and cybersecurity

- Autonomous vehicles

- Medical diagnosis and treatment

- Natural language processing for language translation or chatbots

- In some cases, AI can automate repetitive or mundane tasks, reducing the need for human labor.

- However, AI is more likely to augment human work by assisting in complex tasks and decision-making.

- The impact of AI on employment depends on the industry, job type, and the ability of humans to adapt and learn new skills.

- Diverse and inclusive data collection: Ensuring that training data represents a wide range of backgrounds and perspectives.

- Regularly evaluating and monitoring AI systems for bias during development and deployment.

- Improving transparency and explainability of AI algorithms to understand how biases may arise.

- Involving interdisciplinary teams to detect and mitigate biases throughout the AI development process.

- Implementing regulatory frameworks and guidelines to address ethical considerations and potential biases in AI systems.
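As one concrete illustration of evaluating a system for bias, the sketch below compares how often a model gives a positive prediction to each group. It is a minimal example, assuming binary predictions and a single group attribute. The function names and the toy data are hypothetical, and the gap it computes (often called the demographic parity difference) is only one of several possible fairness measures.

```python
from collections import defaultdict


def positive_rate_by_group(predictions, groups):
    """Return the share of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(predictions, groups):
    """Return the difference between the highest and lowest group positive rates."""
    rates = positive_rate_by_group(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Toy data (hypothetical): model outputs and the group each example belongs to.
predictions = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = positive_rate_by_group(predictions, groups)
gap = demographic_parity_gap(predictions, groups)

print(rates)  # per-group positive rates
print(gap)    # a large gap is a signal to investigate, not proof of unfairness
```

A check like this could run during development and again on live predictions, so that a growing gap is noticed after deployment as well.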
