Skills Needed to Become a Prompt Engineer


Companies like Netflix are offering high salaries to prompt engineers due to the increasing demand for AI.

Prompt engineers play a crucial role in ensuring smooth digital operations in a fast-paced world.

The article discusses the top 5 skills required for success in this field.

A prompt engineer is a versatile IT expert responsible for seamless operations and troubleshooting.

They excel in coding, problem-solving, and automation, working with various IT systems.

They specialize in crafting effective input prompts for AI models, optimizing their performance.

They bridge the gap between raw AI capabilities and practical applications.

Their work ensures efficient and precise AI-driven interactions.

Prompt engineers are vital in addressing potential risks associated with AI technologies.

They receive attractive compensation due to high demand.

NLP deals with computer-human language interaction.

NLP is essential for crafting effective prompts that engage users.

Various resources like online courses, books, YouTube tutorials, online communities, and coding practice help in learning NLP.

Python programming is crucial for prompt engineers, as Python offers readability, rich NLP libraries, community support, cross-platform compatibility, and integration capabilities.

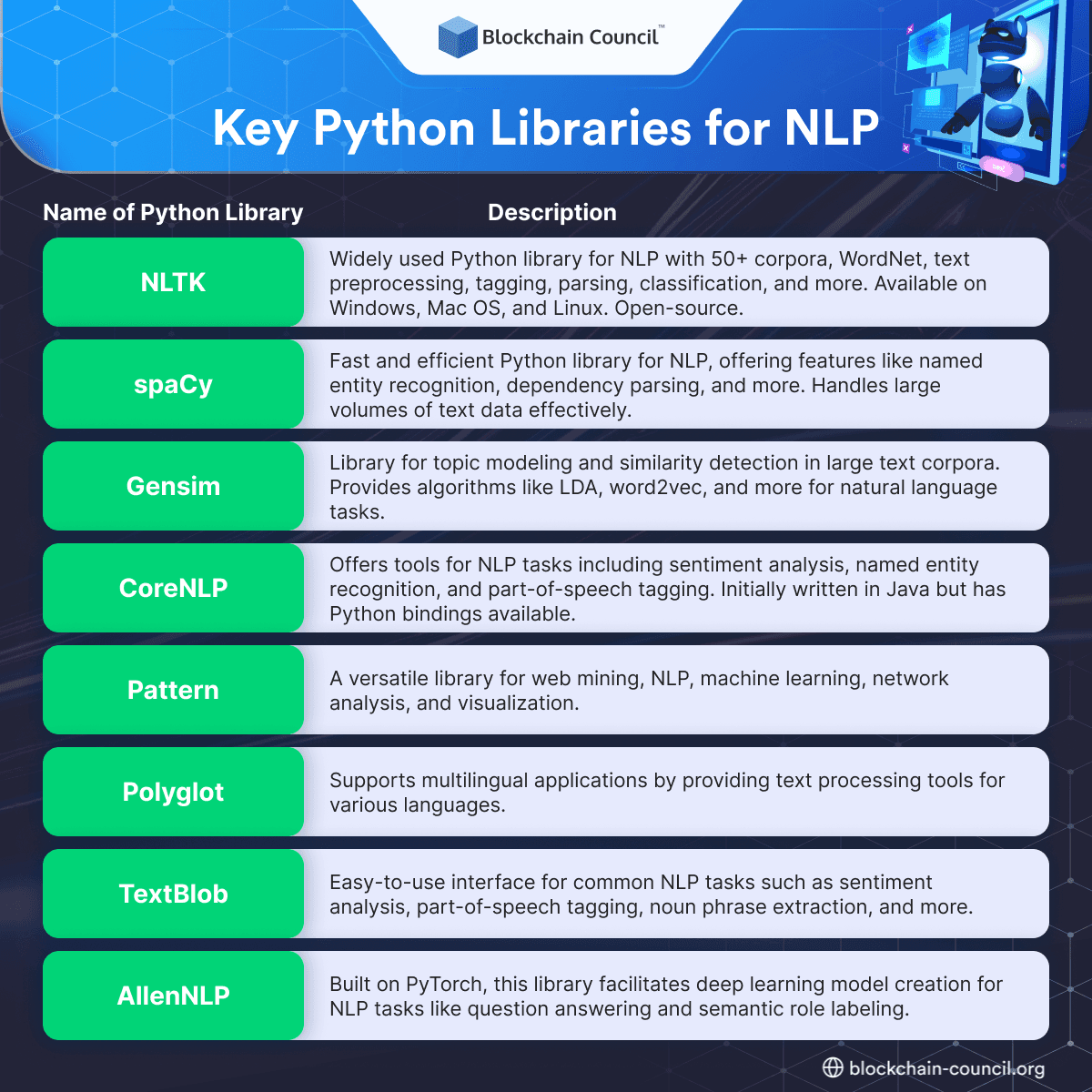

Key Python libraries for NLP include NLTK, spaCy, Gensim, CoreNLP, Pattern, Polyglot, TextBlob, and AllenNLP.

Coding exercises are essential for skill development, such as tokenization practice, named entity recognition (NER), text classification, and word embeddings.

Data analysis and preprocessing skills involve data collection, cleaning, feature engineering, and data visualization.

Data visualization tools like Matplotlib, Seaborn, and Tableau help in effective communication of findings.

Prompts should be clear, context-rich, and engaging for the AI model to generate desired results.

Fine-tuning language models requires a diverse dataset, balancing overfitting and underfitting, and hyperparameter tuning.

The article provides examples of specific prompts for content generation, code generation, and language translation.

Choosing the right language model involves considering model size, training data, fine-tuning, and use cases.

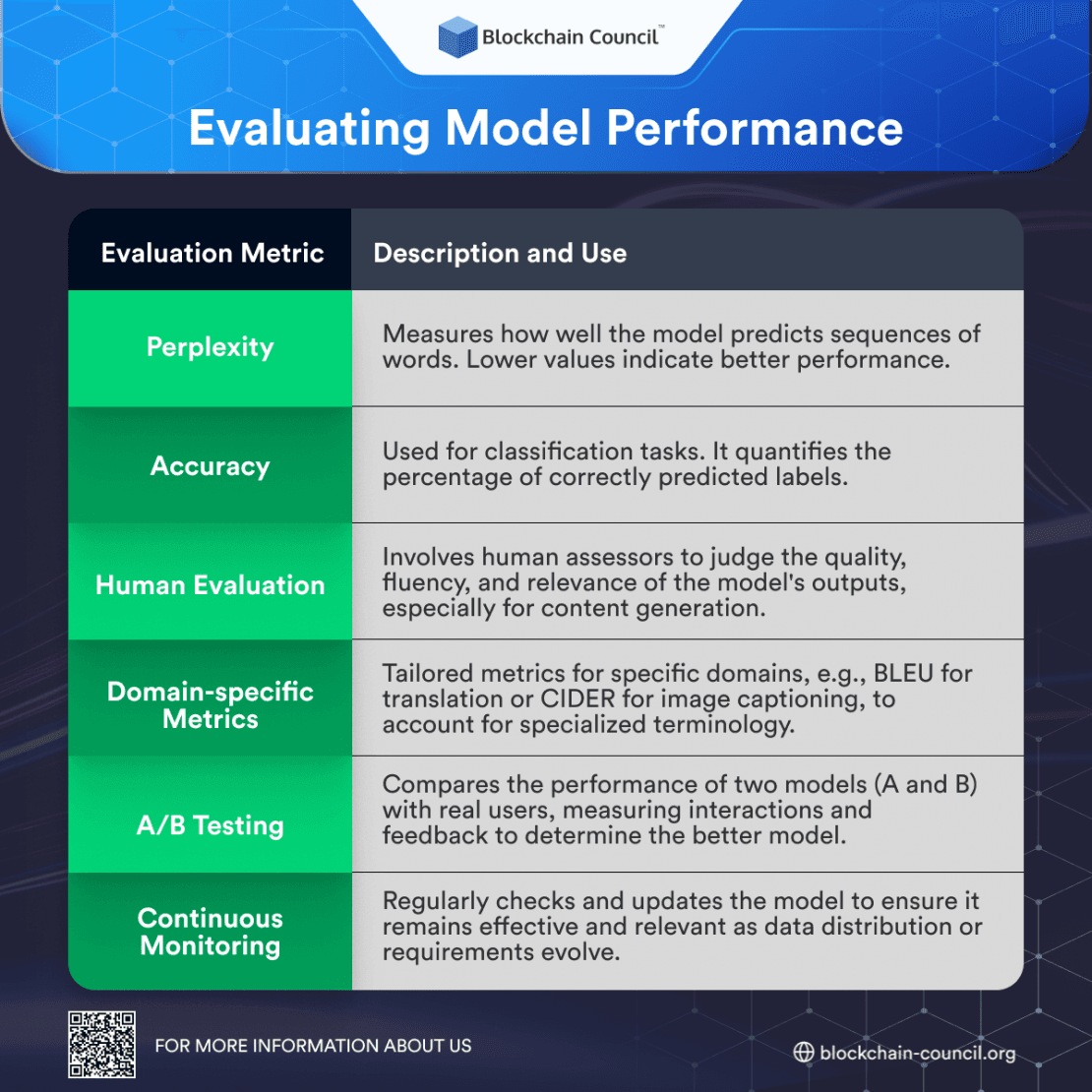

Model performance evaluation metrics include perplexity, accuracy, human evaluation, domain-specific metrics, A/B testing, and continuous monitoring.

Prompt engineering is an iterative process, requiring fine-tuning and adaptation based on real-world feedback.

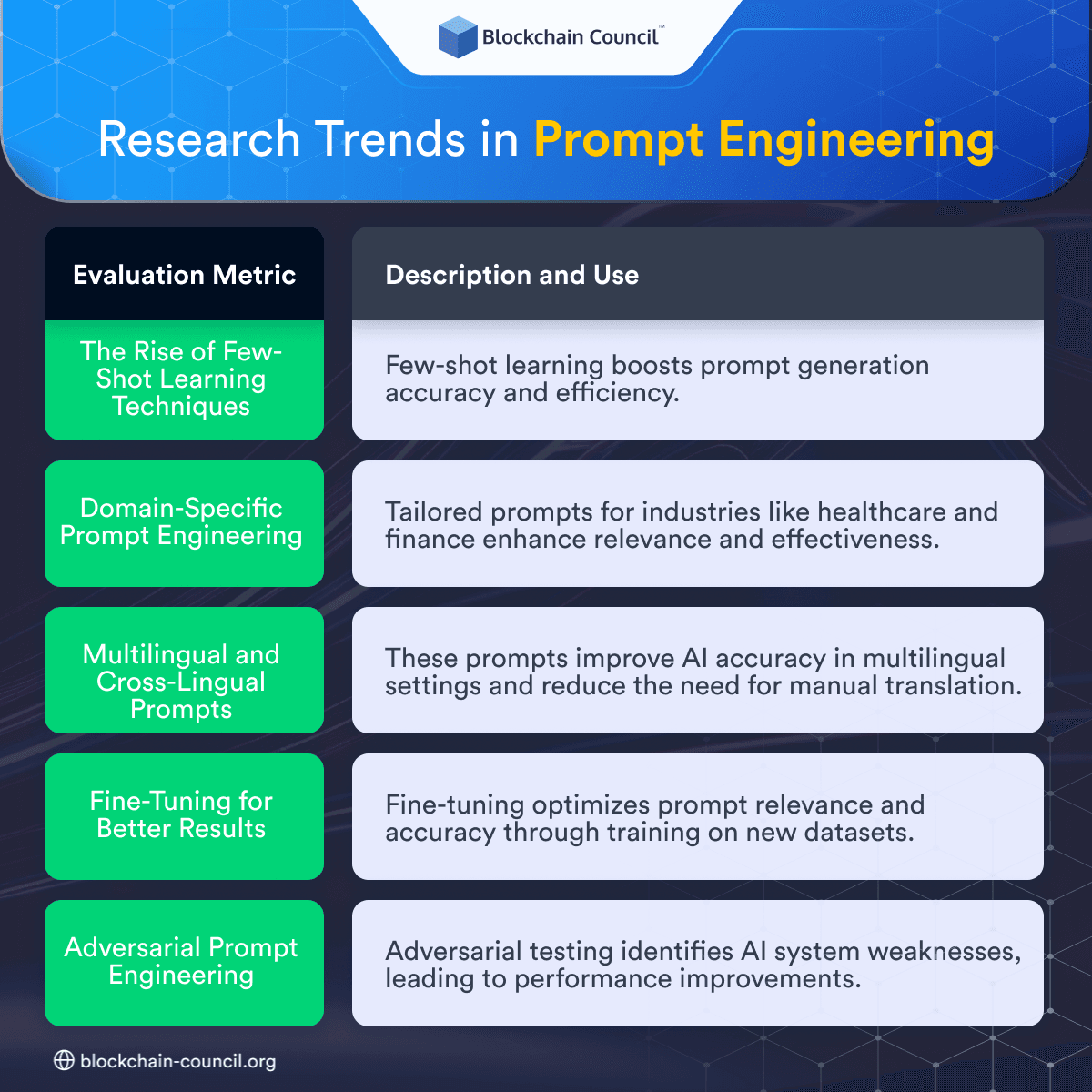

The article discusses trends in prompt engineering, including few-shot learning, domain-specific prompts, multilingual prompts, fine-tuning, and adversarial testing.

It mentions successful prompt engineering projects like FinGPT, Learning-Prompt, tree-of-thoughts, and Awesome-Prompt-Engineering.

Becoming a Certified Prompt Engineer™ through the Blockchain Council certification program enhances skills in prompt crafting, real-world cases, OpenAI API, and career opportunities.

The certification offers recognition and validity throughout one's career.

Prompt engineering is a high-reward, high-demand field requiring skills in NLP, Python programming, data analysis and preprocessing, prompt design and fine-tuning, and model selection and evaluation.

Introduction

Companies like Netflix are offering over $300,000 to prompt engineers. With the growing demand for AI, the need for prompt engineers is skyrocketing. In an era where microseconds can make or break a user’s experience, prompt engineers are the unsung heroes behind the scenes.

Becoming a prompt engineer in today’s fast-paced digital landscape demands a unique set of skills that go beyond traditional engineering. In a world where every second counts, being prompt isn’t just a virtue; it’s a necessity.

This article will delve into the top 5 skills you need to cultivate to excel in this role, whether you’re just starting your journey or are a seasoned professional looking to stay ahead in the game.

What is a Prompt Engineer?

At its core, a Prompt Engineer is a technical wizard, a master of many trades within the IT realm. They are the architects of seamless operations, the troubleshooters of complex problems, and the navigators of digital landscapes. But what truly sets them apart is their ability to respond promptly, decisively, and effectively to any technical challenge that comes their way.

A Prompt Engineer is not bound by a single specialization; they are versatile experts who wear multiple hats. They are proficient in coding, possess exceptional problem-solving skills, and have an innate understanding of automation tools. Whether it’s debugging a system, optimizing a website, or ensuring the smooth flow of data in a network, these professionals are the go-to experts.

Why are Prompt Engineers in Demand?

Pioneers of AI Communication: Prompt engineering is the art of crafting effective input prompts to elicit the desired output from foundation models. It’s the iterative process of developing prompts that can effectively leverage the capabilities of existing generative AI models to accomplish specific objectives.

Critical Role in the AI Ecosystem: As organizations increasingly rely on AI models like ChatGPT, Prompt Engineers play a critical role in fine-tuning these models to produce accurate and meaningful responses. They bridge the gap between raw AI capabilities and practical applications.

Efficiency and Precision: Prompt Engineers are in demand because they ensure efficiency and precision in AI-driven interactions. By crafting prompts that guide AI models, they make these systems more useful and reliable, reducing the likelihood of generating irrelevant or incorrect information.

Addressing Industry Challenges: According to a report by Trend Statistics, 26% of European software and tech companies plan job cuts due to ChatGPT. This highlights the importance of Prompt Engineers in mitigating potential risks associated with AI technologies and ensuring their responsible deployment.

Attractive Compensation: The demand for Prompt Engineers is reflected in their salaries. The same report states that salaries for Prompt Engineers range from £40,000 to £300,000 a year based on experience. Anthropic, a leading AI research company, has job openings for “Prompt Engineer and Librarian” with salaries up to $335,000 per year, showcasing the competitive compensation offered in this field.

Global Opportunities: Prompt Engineers are not limited to specific geographical locations, although pay varies by region. In the San Francisco Bay Area, for example, the salary range for these professionals is $175,000 to $335,000 per year.

Skill 1: Strong Understanding of Natural Language Processing (NLP)

Basics of NLP

NLP, or Natural Language Processing, is a branch of artificial intelligence that deals with the interaction between computers and human languages. To master this skill, you need to start with the fundamentals of NLP. This includes understanding concepts like tokenization, parsing, and semantic analysis.
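To make tokenization concrete, here is a minimal sketch that uses only Python’s standard `re` module. The two splitting rules are simplifying assumptions chosen for illustration; dedicated NLP libraries handle abbreviations, quotations, and other edge cases far more carefully.

```python
import re

def split_sentences(text: str) -> list[str]:
    # Naive rule (an assumption): a sentence ends at ., ! or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def tokenize_words(sentence: str) -> list[str]:
    # A token is a word (optionally with internal apostrophes) or one punctuation mark.
    return re.findall(r"\w+(?:'\w+)*|[^\w\s]", sentence)

text = "Prompt engineers write prompts. Don't they test them too?"
for sentence in split_sentences(text):
    print(tokenize_words(sentence))
```

Running it shows the text broken first into sentences and then into word and punctuation tokens, which is the raw material that parsing and semantic analysis build on.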

Role of NLP in Prompt Engineering

In the world of prompt engineering, NLP plays a pivotal role. It’s the technology behind the scenes that allows machines to understand and generate human-like text. NLP enables prompt engineers to create prompts that are not only clear but also contextually rich. It’s the backbone of crafting prompts that can engage users effectively.

Resources for Learning NLP

For beginners looking to delve into NLP, there are several excellent resources available:

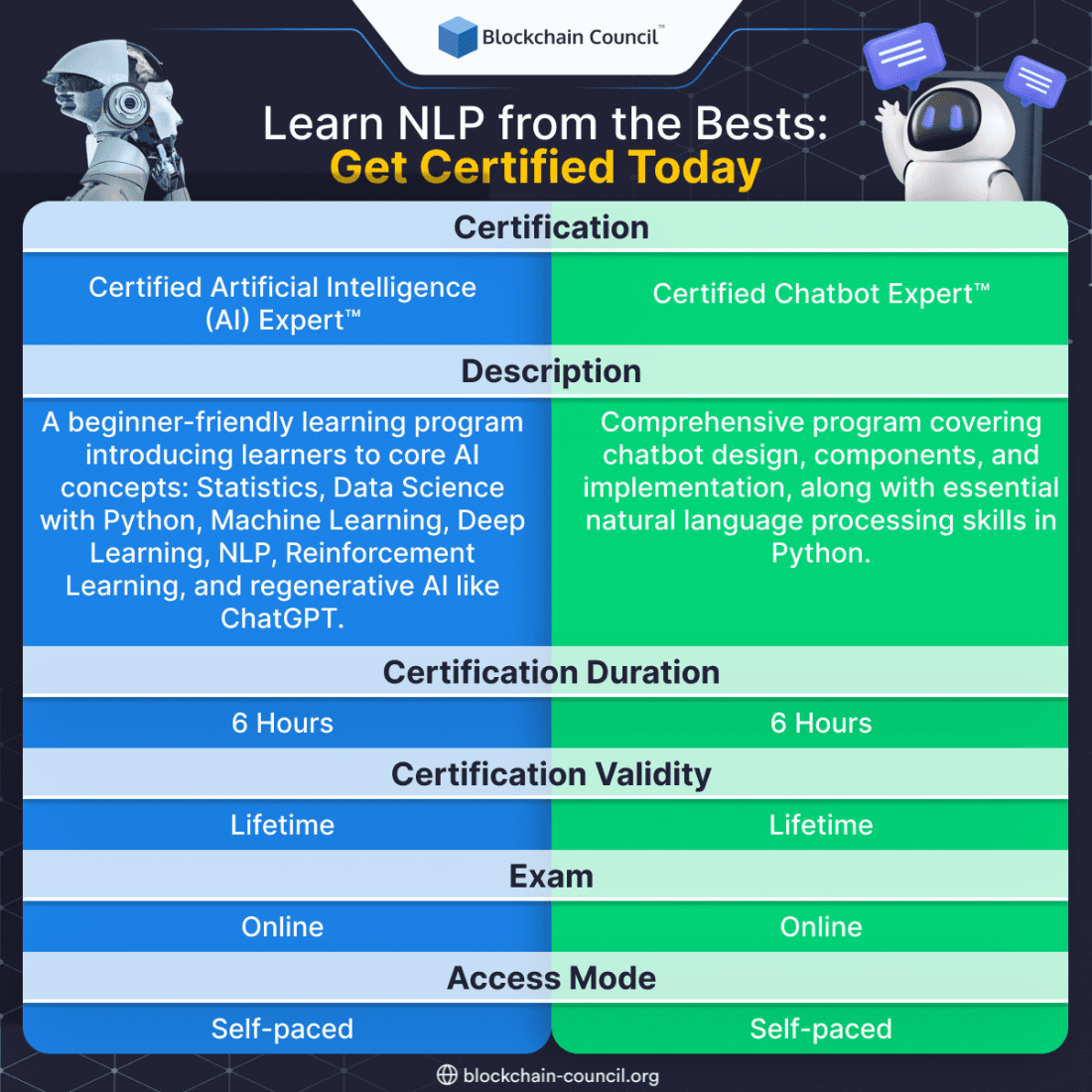

Online Certifications: Platforms like the Blockchain Council offer comprehensive NLP certifications. Look for certifications that cover both theory and hands-on application.

Books: Consider reading books like “Natural Language Processing in Action” by Lane, Howard, and Hapke. Books like this provide in-depth insights into NLP concepts.

YouTube Tutorials: Many experts share NLP tutorials on YouTube, breaking down complex topics into digestible videos.

Online Communities: Join NLP-focused communities on platforms like Reddit and Stack Overflow. Engaging with experts and enthusiasts can be a valuable learning experience.

Coding Practice: Hands-on coding is essential. Use Python libraries like NLTK and spaCy to experiment with NLP tasks.


Remember, mastering NLP is a journey. Start with the basics, practice consistently, and seek help when needed. With dedication and the right resources, you can become proficient in this critical skill for prompt engineering.

Skill 2: Proficiency in Python Programming

In the world of prompt engineering, mastering Python programming is like having a superpower. Python has become the lingua franca for engineers working on prompt generation and natural language processing (NLP). In this section, we’ll delve into the importance of Python for prompt engineers, explore key Python libraries for NLP, and provide coding exercises to help you develop this crucial skill.

Importance of Python for Prompt Engineers

Python’s popularity among prompt engineers can be attributed to its simplicity, versatility, and a vast ecosystem of libraries and frameworks. Here’s why it’s indispensable:

Readability and Ease of Learning: Python’s clean and readable syntax makes it beginner-friendly. Aspiring prompt engineers can quickly grasp the language, making it an ideal choice for those new to programming.

Rich NLP Libraries: Python boasts a treasure trove of NLP libraries such as NLTK, spaCy, and Gensim. These libraries simplify complex NLP tasks like text tokenization, sentiment analysis, and part-of-speech tagging.

Community Support: Python has a massive and active community of developers. You’ll find countless tutorials, forums, and resources to aid your learning journey. Any problem you encounter, someone has likely faced it before and shared a solution.

Cross-Platform Compatibility: Python runs on multiple platforms, ensuring your code works seamlessly on various operating systems.

Integration Capabilities: Python seamlessly integrates with other technologies, allowing prompt engineers to incorporate it into their existing workflows and tools.

Key Python Libraries for NLP

To harness the power of Python in prompt engineering, familiarize yourself with these essential NLP libraries: NLTK, spaCy, Gensim, CoreNLP, Pattern, Polyglot, TextBlob, and AllenNLP.

Coding Exercises for Skill Development

Now, let’s put theory into practice with some coding exercises to enhance your Python proficiency:

Tokenization Practice: Write a Python script to tokenize a given text into words and sentences using NLTK. Experiment with different texts and observe how the tokenizer handles various cases.

Named Entity Recognition (NER): Use spaCy to perform NER on a sample text. Identify and extract entities like names, dates, and locations.

Text Classification: Build a basic text classification model using a machine learning library like scikit-learn. Train it to classify text documents into predefined categories.

Word Embeddings: Explore Gensim to create word embeddings from a large text corpus. Visualize word embeddings using techniques like t-SNE for better understanding.
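As a warm-up for the text classification exercise, the sketch below builds a tiny multinomial Naive Bayes classifier using only the standard library. It is a stand-in for what a library such as scikit-learn would do for you, not a replacement for it; the function names and the four training sentences are made up for illustration.

```python
import math
from collections import Counter, defaultdict

def tokenize(text):
    return text.lower().split()

def train(examples):
    """examples: list of (text, label). Returns the counts the classifier needs."""
    label_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        label_counts[label] += 1
        for word in tokenize(text):
            word_counts[label][word] += 1
            vocab.add(word)
    return label_counts, word_counts, vocab

def predict(model, text):
    label_counts, word_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, doc_count in label_counts.items():
        # Log prior plus log likelihood of each word, with add-one smoothing
        # so that unseen words do not zero out the score.
        score = math.log(doc_count / total_docs)
        total_words = sum(word_counts[label].values())
        for word in tokenize(text):
            count = word_counts[label][word]
            score += math.log((count + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Tiny made-up training set, for illustration only.
examples = [
    ("the match ended with a late goal", "sports"),
    ("the team won the final game", "sports"),
    ("the market closed higher today", "finance"),
    ("investors sold shares as prices fell", "finance"),
]
model = train(examples)
print(predict(model, "the team scored a goal"))
print(predict(model, "shares and prices rose in the market"))
```

Once the idea is clear, repeating the exercise with a real library and a larger dataset is the natural next step.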

By consistently practicing these exercises and exploring the capabilities of Python libraries, you’ll sharpen your skills as a prompt engineer. Remember, mastery comes through hands-on experience and continuous learning.


Skill 3: Data Analysis and Preprocessing

Data analysis and preprocessing are essential skills for any aspiring prompt engineer. In this section, we’ll delve into the intricacies of these skills, from data collection and cleaning to feature engineering and data visualization techniques.

Data Collection and Cleaning

To kickstart your journey as a prompt engineer, you must first master the art of data collection and cleaning. This phase involves gathering relevant data from various sources, whether it’s structured data from databases or unstructured data from web scraping. The key is to ensure data accuracy and completeness.

Data cleaning is the process of identifying and rectifying errors or inconsistencies in your dataset. This step is crucial as it lays the foundation for accurate analysis. Common tasks include handling missing values, removing duplicates, and addressing outliers. Advanced techniques like imputation and anomaly detection can also come into play.
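The three common cleaning tasks can be sketched in a few lines of standard-library Python. The records, the `response_ms` field, and the 1.5 × IQR outlier rule are assumptions chosen for illustration; real projects often use a data-frame library and domain-specific rules instead.

```python
from statistics import median, quantiles

# Made-up records: one exact duplicate, one missing value, one extreme value.
records = [
    {"id": 1, "response_ms": 120},
    {"id": 2, "response_ms": 135},
    {"id": 2, "response_ms": 135},   # duplicate row
    {"id": 3, "response_ms": None},  # missing value
    {"id": 4, "response_ms": 128},
    {"id": 5, "response_ms": 131},
    {"id": 6, "response_ms": 2500},  # likely outlier
]

def drop_duplicates(rows):
    # Keep the first occurrence of each identical row.
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique

def impute_median(rows, field):
    # Replace missing values with the median of the observed values.
    fill = median(r[field] for r in rows if r[field] is not None)
    return [{**r, field: fill if r[field] is None else r[field]} for r in rows]

def flag_outliers(rows, field, k=1.5):
    # Flag values more than k interquartile ranges outside the quartiles.
    values = [r[field] for r in rows]
    q1, _, q3 = quantiles(values, n=4)
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [r for r in rows if not low <= r[field] <= high]

cleaned = impute_median(drop_duplicates(records), "response_ms")
print(cleaned)
print(flag_outliers(cleaned, "response_ms"))
```

Whether a flagged row should be dropped, capped, or kept is a judgment call that depends on what the data represents.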

Feature Engineering

Once your data is pristine, it’s time to move on to feature engineering. Think of features as the building blocks of your machine learning models. Creating meaningful and relevant features can significantly impact the performance of your algorithms.

Feature engineering involves selecting, transforming, and creating new features from your raw data. Techniques like one-hot encoding, scaling, and dimensionality reduction are essential here. It’s all about maximizing the information your data can provide to your models.
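Two of these techniques, one-hot encoding and scaling, are simple enough to write by hand. The sketch below assumes a made-up dataset with a categorical `topics` column and a numeric `lengths` column, and uses min-max scaling as one of several possible scaling choices.

```python
def one_hot(values):
    # One column per distinct category, in sorted order.
    categories = sorted(set(values))
    encoded = [[1 if v == c else 0 for c in categories] for v in values]
    return encoded, categories

def min_max_scale(values):
    # Map values linearly onto the range [0, 1].
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

# Made-up example data.
topics = ["finance", "health", "finance", "sports"]
lengths = [120, 480, 300, 60]

encoded, categories = one_hot(topics)
scaled = min_max_scale(lengths)

# Combine both into one numeric feature row per example.
features = [row + [s] for row, s in zip(encoded, scaled)]
print(categories)
print(features)
```

Each example ends up as a purely numeric row, which is the form most machine learning models expect.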

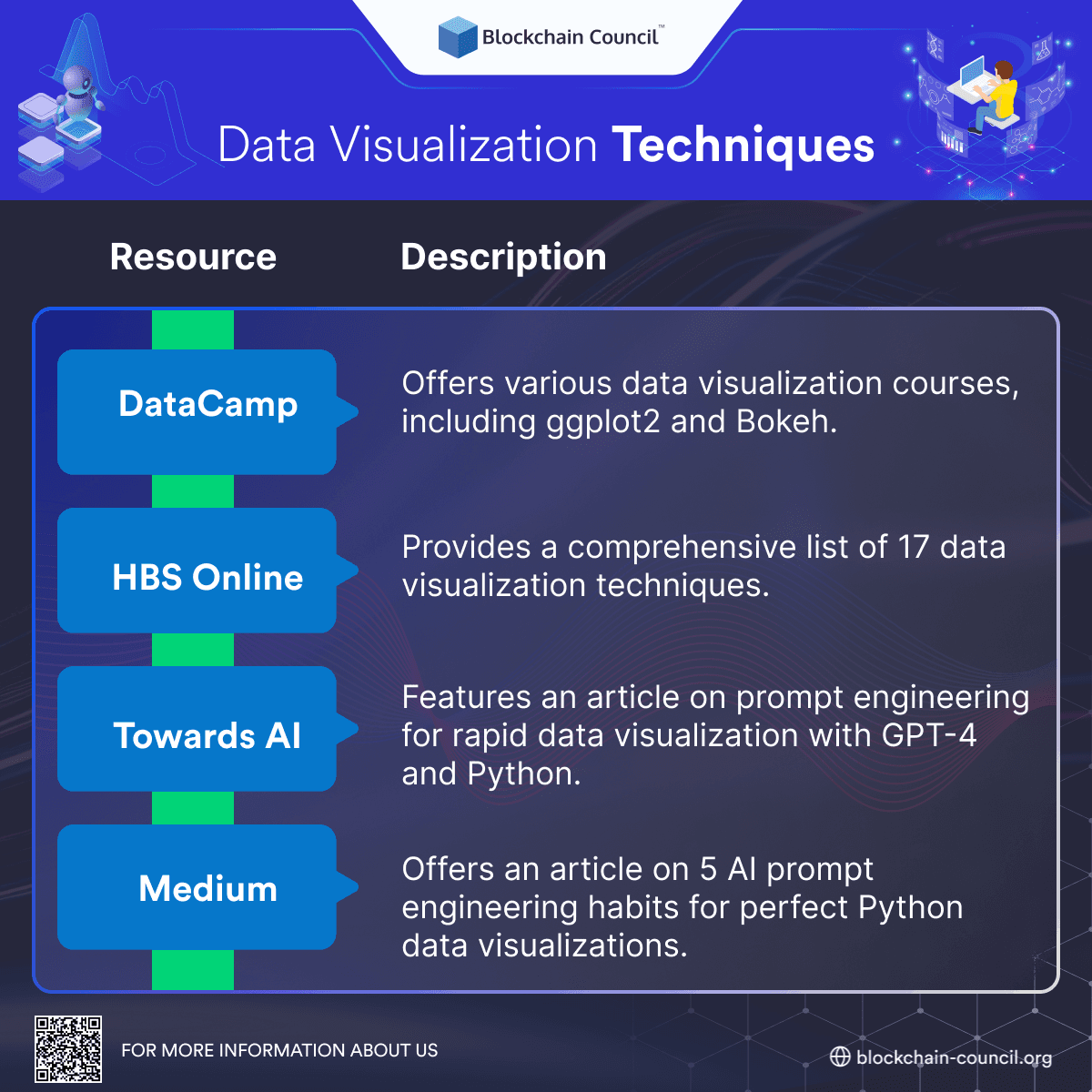

Data Visualization Techniques

Data analysis isn’t just about numbers and algorithms; it’s also about effective communication. Data visualization is the bridge between complex data and human understanding.

Mastering data visualization techniques is crucial for conveying your findings and insights. Tools like Matplotlib, Seaborn, and Tableau can help you create compelling charts and graphs. Remember to choose the right visualization for your data type, whether it’s histograms for distributions or scatter plots for relationships.

Here are some resources that can help you improve your data visualization skills as a prompt engineer:

Skill 4: Prompt Design and Fine-tuning

In the world of AI and language models, mastering the art of prompt design and fine-tuning is like having a secret key to unlock the full potential of these powerful tools. Whether you’re just starting on this journey or a seasoned professional, understanding how to craft effective prompts, fine-tune language models, and apply practical examples is crucial. In this section, we’ll delve into these aspects to help you become a proficient Prompt Engineer.

Creating Effective Prompts

Clarity is King: When crafting prompts, consider them as clear instructions to the AI model. For instance, if you’re using GPT-3 for content generation, a prompt like “Write an article about cars” might yield generic results. Instead, be specific: “Compose a 700-word article on the evolution of electric cars, highlighting Tesla’s innovations.” Clear prompts help the model understand your intent and generate more relevant content.

Context Matters: If you’re engaging in a conversation or a multi-turn interaction with the AI, providing context is crucial. Refer to previous messages or interactions within your prompts. For instance, if you’re having a dialogue about climate change, you could prompt with, “Continuing from our previous discussion about renewable energy, can you explain how solar power contributes to reducing greenhouse gas emissions?”

Experiment and Iterate: The art of prompt design often involves trial and error. Don’t be discouraged if your initial prompts don’t produce the desired results. Experiment with variations, rephrases, or additional context until you achieve the output you seek. Remember, AI models are powerful but can be sensitive to how you frame your requests.
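One way to carry context across turns is to keep the conversation as a list of messages and send the most recent ones along with each new question. The sketch below assumes a `role`/`content` dictionary shape modeled on common chat interfaces; it makes no API call, and `build_messages` and `max_turns` are hypothetical names.

```python
def build_messages(history, new_question, max_turns=3):
    """Return the last `max_turns` exchanges plus the new user question."""
    # Each exchange is assumed to be one user message and one assistant message.
    recent = history[-2 * max_turns:] if max_turns > 0 else []
    return recent + [{"role": "user", "content": new_question}]

history = [
    {"role": "user", "content": "What is renewable energy?"},
    {"role": "assistant", "content": "Energy from sources that replenish naturally, such as sun and wind."},
]

messages = build_messages(
    history,
    "Continuing from our previous discussion about renewable energy, "
    "can you explain how solar power contributes to reducing greenhouse gas emissions?",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Capping the history keeps the prompt short; how many turns to keep is something to experiment with, in the same spirit as the other prompt variations.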

Fine-tuning Language Models

Data Matters: Fine-tuning is essentially teaching the model to specialize in a particular task or domain. To do this effectively, gather a diverse and representative dataset. If you’re fine-tuning for medical text generation, your dataset should include a wide range of medical literature, ensuring the model learns the intricacies of medical terminology and context.

Balancing Act: Fine-tuning requires finding the right balance. Overfitting occurs when the model becomes too specific and struggles with generalization. Underfitting results in a model that doesn’t adapt well to your task. Regularly evaluate your model’s performance on validation data and adjust your fine-tuning strategy accordingly.

Hyperparameter Tuning: Hyperparameters are like the dials and knobs of a machine. Tweaking them can significantly impact your model’s performance. Experiment with learning rates, batch sizes, and the number of training steps to optimize your fine-tuning process. Tools like grid search or Bayesian optimization can assist in finding the best hyperparameters.
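The balancing and tuning steps above can be sketched in plain Python. The first function applies simple early stopping to a list of validation losses; the second runs a grid search over hyperparameter combinations. The loss values and the `validation_score` function are made-up stand-ins: in practice each score would come from actually fine-tuning and evaluating a model.

```python
from itertools import product

def best_checkpoint(val_losses, patience=2):
    """Return (best_epoch, stopped_epoch) using simple early stopping."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            # Validation loss has not improved for `patience` epochs:
            # a common sign that the model is starting to overfit.
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

def validation_score(learning_rate, batch_size, steps):
    # Hypothetical stand-in for "fine-tune, then score on validation data".
    # A made-up function so the example runs instantly.
    return (
        -abs(learning_rate - 3e-5) * 1e4
        - abs(batch_size - 16) / 16
        - abs(steps - 1000) / 1000
    )

def grid_search(grid, score_fn):
    # Try every combination and keep the highest-scoring one.
    names = list(grid)
    best_params, best_score = None, float("-inf")
    for combo in product(*(grid[n] for n in names)):
        params = dict(zip(names, combo))
        score = score_fn(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

# Illustrative numbers only.
val_losses = [2.10, 1.62, 1.35, 1.28, 1.31, 1.40, 1.55]
print(best_checkpoint(val_losses))

grid = {
    "learning_rate": [1e-5, 3e-5, 1e-4],
    "batch_size": [8, 16, 32],
    "steps": [500, 1000, 2000],
}
best_params, best_score = grid_search(grid, validation_score)
print(best_params)
```

Grid search is exhaustive, so its cost grows quickly with the number of values per hyperparameter; that is the main reason to consider alternatives such as Bayesian optimization.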

Hands-on Examples of Prompt Design

Scenario 1: Content Generation

When generating content, tailoring it to a specific audience and style can be highly effective. Here’s a detailed prompt for creating a blog post on the impact of climate change on coastal communities:

Prompt: “Create an engaging 1000-word blog post in a casual, conversational style about the effects of climate change on coastal communities, targeting environmentally conscious readers.”

In this prompt:

“Create an engaging 1000-word blog post” sets the format and length of the content, ensuring it meets the blog post requirements.

“in a casual, conversational style” specifies the tone, indicating that the content should be approachable and friendly.

“about the effects of climate change on coastal communities” defines the topic, ensuring the content remains focused.

“targeting environmentally conscious readers” identifies the intended audience, making it clear that the post should resonate with those concerned about environmental issues.

By providing these specific details, you guide the AI model to generate a blog post that not only covers the topic thoroughly but also appeals to the desired audience with the appropriate style and tone. This level of precision helps you create content that resonates effectively with your readers.
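The same breakdown can be turned into a reusable template, so that each component is set deliberately and can be varied on its own. This is a minimal sketch; the function name and its parameters are hypothetical.

```python
def build_content_prompt(fmt, length_words, tone, topic, audience):
    # Assemble the prompt from the four components discussed above:
    # format and length, tone, topic, and audience.
    return (
        f"Create an engaging {length_words}-word {fmt} in a {tone} style "
        f"about {topic}, targeting {audience}."
    )

prompt = build_content_prompt(
    fmt="blog post",
    length_words=1000,
    tone="casual, conversational",
    topic="the effects of climate change on coastal communities",
    audience="environmentally conscious readers",
)
print(prompt)
```

Changing one argument at a time, for example the tone or the audience, makes it easier to see which component is responsible for a change in the output.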

Scenario 2: Code Generation

In the realm of programming, using AI for code generation can save time and effort. Here’s a more specific prompt for generating Python code:

Prompt: “Write Python code to extract text from a PDF file and save it as a text document.”

In this prompt:

“Write Python code” clearly instructs the AI model to generate Python code.

“extract text from a PDF file” specifies the task, indicating that text extraction from PDFs is required.

“save it as a text document” adds the final step, specifying the desired output format.

By providing these details, you guide the AI model to produce code that precisely accomplishes the intended task, making it a valuable tool for programmers.

Scenario 3: Language Translation

Language translation is another powerful application of AI. Here’s a detailed prompt for translating English text into Spanish:

Prompt: “Translate the following English text into Spanish: ‘The future belongs to those who believe in the beauty of their dreams.’”

In this prompt:

“Translate the following English text into Spanish” sets the clear context, instructing the AI model that translation is the task.

“‘The future belongs to those who believe in the beauty of their dreams.’” is the specific text to be translated.

By framing the prompt in this manner, you guide the AI model to provide an accurate translation of the given English text into Spanish. This level of specificity ensures that the output aligns with your requirements, whether for personal or professional use.


Skill 5: Model Selection and Evaluation

Overview of Language Models

Language models are the backbone of NLP applications. They are, in essence, computer programs that have been trained on massive amounts of text data, enabling them to understand and generate human-like text. These models have become remarkably sophisticated in recent years, thanks to advancements in deep learning.

When we talk about language models, it’s impossible not to mention transformers. These architectural marvels have revolutionized NLP by allowing models like GPT-3 to understand context, generate coherent responses, and even translate languages. Transformers are, without a doubt, the driving force behind prompt engineering.

History of Language Models

1950s: Researchers at IBM and Georgetown University developed a system to automatically translate a collection of phrases from Russian to English.

1960s: The first-ever chatbot, ELIZA, was created by MIT researcher Joseph Weizenbaum.

2000s: Neural networks began to be used for language modeling, which aims at predicting the next word in a text given the previous words.

2003: Bengio et al. proposed the first neural language model, which consisted of a feed-forward neural network with a single hidden layer.

2010s: Recurrent neural network-based models superseded pure statistical models such as word n-gram language models.

2018: Google introduced BERT, a transformer-based model that uses the context of words in a sentence to improve accuracy on language-understanding tasks.

2019: OpenAI introduced GPT-2, a large-scale transformer-based language model that can generate coherent and fluent text.

2020: OpenAI introduced GPT-3, at the time one of the largest and most powerful language models available.

2023: Microsoft launched Bing AI with real-time internet access.

2023: Baidu released its ERNIE Bot chatbot to the public.

2023: OpenAI launched GPT-4.

Choosing the Right Model

Selecting the right language model is akin to choosing the right tool for a job. It’s crucial for prompt engineers to understand the nuances and trade-offs of different models. Here are some key factors to consider when making your choice:

Model Size: Bigger isn’t always better. Smaller models like GPT-2 may be more efficient for certain tasks, while larger ones like GPT-4 are suitable for more complex applications. Consider your project’s computational resources and requirements.

Training Data: The quality and quantity of training data influence a model’s performance. Be sure to choose a model trained on data relevant to your application. For instance, a model trained on legal texts may not perform well in a medical context.

Fine-Tuning: Most prompt engineers don’t start from scratch. They fine-tune pre-trained models on specific tasks or datasets. Understanding the fine-tuning process is essential to adapt models to your needs.

Use Case: Different models excel in different use cases. GPT-4 shines in creative text generation, while BERT is excellent for understanding context in search queries. Evaluate models based on your project’s objectives.

Evaluating Model Performance

Selecting a model is only the beginning; the real test comes when you put it to work. Proper evaluation is critical to ensure your model performs as expected. Evaluation techniques to consider include perplexity, accuracy, human evaluation, domain-specific metrics, A/B testing, and continuous monitoring.
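Two of the simpler automatic metrics, perplexity and accuracy, can be computed in a few lines. In this sketch, `token_probs` is assumed to hold the probability the model assigned to each token that actually occurred; the numbers are made up for illustration.

```python
import math

def perplexity(token_probs):
    """exp of the average negative log-probability assigned to the actual tokens."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

def accuracy(predictions, labels):
    """Fraction of predictions that match the reference labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

# Illustrative values only.
print(perplexity([0.25, 0.25, 0.25, 0.25]))
print(accuracy(["a", "b", "c", "d"], ["a", "b", "x", "d"]))
```

Lower perplexity means the model was less surprised by the text. A model that assigns every token a probability of 0.25 has a perplexity of about 4, as if it were choosing uniformly among four options at each step.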

Remember, prompt engineering is an iterative process. You may need to fine-tune, re-evaluate, and adapt your model based on real-world feedback.

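Research Trends in Prompt Engineering

Current trends in prompt engineering include few-shot learning, domain-specific prompts for industries like healthcare and finance, multilingual and cross-lingual prompts, fine-tuning for specific tasks, and adversarial prompt testing to identify weaknesses in AI systems.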

Successful Prompt Engineering Projects

If you’re looking to dive into the world of prompt engineering and enhance your skills as an engineer, there are several open-source projects available on GitHub that can serve as valuable resources. These projects cover a range of aspects related to prompt engineering, from fine-tuning language models to creating effective prompts for various applications. Below, we’ll introduce you to some noteworthy projects in this field:

1. FinGPT

FinGPT is a data-centric, open-source project that applies large language models to the field of finance. By utilizing advanced natural language processing capabilities, FinGPT has the potential to streamline financial analysis, automate tasks, and provide valuable insights for professionals in the finance industry.

2. Learning-Prompt

If you’re new to prompt engineering and want to kickstart your journey, “Learning-Prompt” offers a free online course. This course covers the fundamentals of prompt engineering, including practical tutorials on using models like ChatGPT and Midjourney. It’s an excellent resource for beginners looking to build a strong foundation in this field.

3. tree-of-thoughts

“tree-of-thoughts” is a Python implementation of the Tree of Thoughts concept. According to the project’s maintainers, this approach improves model reasoning by at least 70%. If you’re interested in pushing the boundaries of prompt engineering and improving model reasoning, this project is worth exploring.

4. Awesome-Prompt-Engineering

For those seeking a curated list of prompt engineering techniques and resources, “Awesome-Prompt-Engineering” is a valuable repository. It’s filled with tips, tricks, and best practices for enhancing your prompt engineering skills, particularly when working with ChatGPT and similar models.

Now that you’re familiar with some of these impressive prompt engineering projects, you can start exploring and contributing to them to expand your skill set. These resources cater to a wide range of expertise levels, from beginners to professionals, making prompt engineering accessible to everyone interested in this fascinating field.


How to Become a Certified Prompt Engineer™?

If you’re aiming to elevate your career in the tech and AI industry, obtaining a Certified Prompt Engineer™ certification can be a game-changer. This program, offered by the Blockchain Council, is your ticket to harnessing the potential of prompt engineering and honing your skills with large language models.

In today’s ever-evolving tech landscape, mastering the art of prompt engineering is a must. This certification program is open to individuals at all levels, whether you’re just starting your journey or have years of experience under your belt. Here, we’ll explore the essential skills you’ll develop as you work towards becoming a Certified Prompt Engineer™.

Crafting Precise Prompts for Powerful Results

At the heart of prompt engineering is the ability to create prompts that yield accurate and meaningful responses from AI models. Throughout the program, we’ll teach you how to craft prompts effectively. This involves framing questions, setting context, and optimizing prompts to get the outcomes you desire.

Learning through Real-World Cases: A Hands-On Approach

Theory is crucial, but practical experience is where true learning happens. Our curriculum includes real-world case studies, providing you with valuable hands-on experience. These real-life scenarios will equip you with the skills needed to tackle various tasks, ranging from data analysis to natural language understanding.

Mastering the OpenAI API: Staying Ahead of the Curve

The OpenAI API serves as the powerhouse behind prompt engineering. Our program immerses you in cutting-edge techniques and advanced concepts related to this API. You’ll gain a deep understanding of how to harness its full potential, ensuring you remain at the forefront of this rapidly evolving field.

Unlocking Career Opportunities with Blockchain Council

By becoming a Certified Prompt Engineer™ through the Blockchain Council, you open the door to a world of career possibilities. As AI continues to reshape industries, professionals with prompt engineering skills are in high demand. This certification not only enhances your knowledge but also positions you as a contributor to the future of AI.

Standing Out and Succeeding in a Competitive Landscape

In today’s competitive tech sector, setting yourself apart is crucial. Our program empowers you to showcase your expertise in the field of AI prompts. Your journey to becoming a Certified Prompt Engineer™ starts right here, and it’s worth noting that the certification program duration is 6 hours, ensuring a swift but comprehensive learning experience.

The certification offered by the Blockchain Council is recognized for its validity throughout your career, and the exam is conveniently available online, allowing you to learn at your own pace.

Conclusion

In conclusion, prompt engineering is a high-reward, high-demand field. Netflix’s generous offer is just the tip of the iceberg, as industries worldwide seek prompt engineers to optimize their operations. To excel in this role, you need the five skills covered in this article: a strong understanding of NLP, proficiency in Python programming, data analysis and preprocessing, prompt design and fine-tuning, and model selection and evaluation. These skills will not only unlock a lucrative career but also ensure you remain at the cutting edge of prompt engineering. So, seize the opportunity, hone your abilities, and become the prompt engineer the world is waiting for.

Frequently Asked Questions

What is the role of a Prompt Engineer in the AI industry?

A Prompt Engineer specializes in crafting input prompts for AI models.

They optimize AI performance by guiding models with clear and context-rich prompts.

They bridge the gap between raw AI capabilities and practical applications.

Their work ensures efficient and precise AI-driven interactions.

They play a crucial role in addressing potential risks associated with AI technologies.

What are the key skills needed to become a successful Prompt Engineer?

Strong understanding of Natural Language Processing (NLP).

Proficiency in Python programming, including knowledge of NLP libraries.

Data analysis and preprocessing skills.

Ability to design effective prompts for AI models.

Expertise in model selection, evaluation, and fine-tuning.

Why is Python programming important for Prompt Engineers?

Python offers readability and ease of learning, making it beginner-friendly.

It has rich NLP libraries like NLTK, spaCy, and Gensim for NLP tasks.

Python has an active community, providing tutorials and resources.

It runs on multiple platforms, ensuring code compatibility.

Python integrates well with other technologies used in prompt engineering.

How do I choose the right language model for prompt engineering?

Consider model size based on project requirements and computational resources.

Ensure the model was trained on relevant data for your application.

Understand the fine-tuning process to adapt models to your needs.

Evaluate models based on their suitability for your project’s objectives.

Choose a model that aligns with your specific use case.

What are the key trends in Prompt Engineering discussed in the article?

Few-shot learning techniques for improved accuracy and efficiency.

Domain-specific prompt engineering for industries like healthcare and finance.

Multilingual and cross-lingual prompts to enhance AI accuracy.

Fine-tuning language models for better results on specific tasks.

Adversarial prompt engineering to identify AI system weaknesses and improve performance.
