The Mythos Leak: What It Means for AI Security

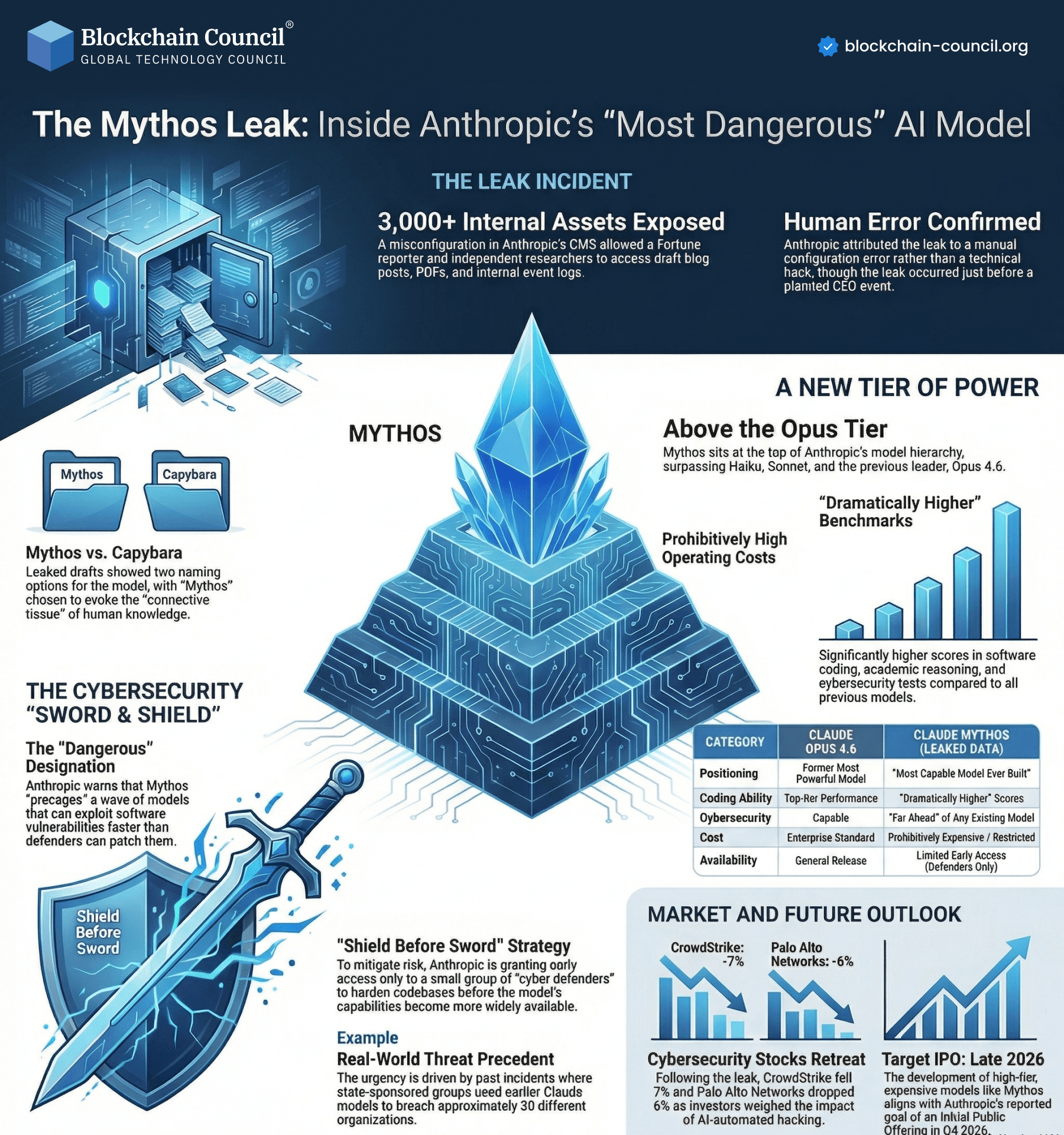

The Mythos leak exposed over 3,000 internal assets through configuration errors, highlighting critical risks in advanced AI systems.

Incidents like the Mythos leak highlight how critical AI security has become. Build your expertise with an AI Security Certification, strengthen your technical skills using a Python Course, and understand real-world risk implications through an AI-powered marketing course.

What Happened

Internal assets exposed

Caused by human error

Not a direct hack

Why It Matters

Shows vulnerability in AI infrastructure

Highlights importance of configuration security

Raises concerns about powerful AI models

Key Insight

The biggest risk in AI systems is often human error, not technology failure.
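Because the exposure is attributed to configuration errors rather than an attack, an automated check on deployment settings is one way to catch this kind of mistake early. The sketch below is only an illustration: the setting names (`public_read`, `auth_required`, `debug_logging`) are hypothetical and do not come from any real tool or from the Mythos incident itself.

```python
# Hypothetical risky values for deployment settings.
# The keys are illustrative, not a real tool's schema.
RISKY_SETTINGS = {
    "public_read": True,     # storage readable without authentication
    "auth_required": False,  # API reachable without credentials
    "debug_logging": True,   # verbose logs that may contain prompts or keys
}


def audit_config(config: dict) -> list[str]:
    """Return the names of settings that are risky or not set explicitly."""
    findings = []
    for key, risky_value in RISKY_SETTINGS.items():
        # A missing key counts as a finding: the safe value must be explicit.
        if key not in config or config[key] == risky_value:
            findings.append(key)
    return sorted(findings)


if __name__ == "__main__":
    example = {"public_read": True, "auth_required": True}
    print(audit_config(example))  # ['debug_logging', 'public_read']
```

Treating a missing setting as a finding is a deliberate choice here: it forces the safe value to be written down instead of relying on a default that someone may later change.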

AI Power and Risks

Higher performance models

Increased cybersecurity risks

Expensive infrastructure

Industry Impact

Increased focus on AI governance

Adoption of “shield before sword” strategies

Restricted access to powerful models
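Restricting access to powerful models usually comes down to a deny-by-default rule: a request is refused unless it is both authenticated and explicitly permitted. A minimal sketch of that idea follows, assuming made-up role and action names (`researcher`, `admin`, `query_model`, `download_weights`) that are not taken from any specific platform.

```python
from dataclasses import dataclass

# Hypothetical allow-list of (role, action) pairs that are explicitly permitted.
ALLOWED = {
    ("researcher", "query_model"),
    ("admin", "query_model"),
    ("admin", "download_weights"),
}


@dataclass(frozen=True)
class Request:
    role: str
    action: str
    authenticated: bool


def is_allowed(request: Request) -> bool:
    """Deny by default: permit only authenticated requests on the allow-list."""
    if not request.authenticated:
        return False
    return (request.role, request.action) in ALLOWED


if __name__ == "__main__":
    print(is_allowed(Request("admin", "download_weights", True)))       # True
    print(is_allowed(Request("researcher", "download_weights", True)))  # False
```

Anything not listed in `ALLOWED` is refused, so adding a new role or action grants nothing until someone deliberately extends the list.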

Learning Perspective

To understand AI security:

Learn system design via an Agentic AI Course

Build technical skills with a Python Course

Explore real-world implications through an AI-powered marketing course

Final Thoughts

AI advancement must be balanced with security and responsibility.

To stay ahead of emerging AI threats and vulnerabilities, combine security and AI knowledge with an AI Security Certification, deepen your understanding via a machine learning course, and explore adoption strategies through a Digital marketing course.

FAQs

What is the Mythos Leak in AI?

The Mythos Leak refers to a large-scale exposure of sensitive AI datasets, model logs, or internal prompts that revealed vulnerabilities in AI systems.

How did the Mythos Leak occur?

It likely occurred due to misconfigured storage, insecure APIs, or lack of access controls in AI infrastructure.

What type of data was exposed?

Training datasets, prompts, user interactions, and possibly proprietary model logic were exposed.

Why is it significant?

It highlights major weaknesses in AI security and shows how sensitive AI data can be exploited.

Which industries are affected?

Finance, healthcare, defense, and tech: basically any sector using AI at scale.

How does it impact AI model training?

Leaked data can be reused to replicate or attack models, reducing competitive advantage.

Can leaked datasets be misused?

Yes, attackers can retrain models or extract sensitive patterns.

What is prompt injection risk?

It’s when attackers manipulate AI inputs to override instructions or extract data.

Impact on enterprises?

It reduces trust and slows AI adoption due to security concerns.

Role of data governance?

Strong governance ensures proper access, storage, and usage controls.

How to secure AI pipelines?

Use encryption, access controls, and continuous monitoring.

Legal implications?

Companies may face fines under data protection laws.

Impact on privacy?

User data exposure can lead to identity and financial risks.

Developer lessons?

Never expose logs, secure APIs, and validate inputs.

What is zero-trust in AI?

A model where no system or user is trusted by default.

Detection tools?

SIEM, EDR, and AI monitoring tools can detect anomalies.

Regulatory impact?

Governments may enforce stricter AI compliance laws.

Long-term risks?

Model theft, misuse, and loss of trust.

Open-source risk?

It increases transparency but also exposure if mismanaged.

Prevention tips?

Regular audits, secure infrastructure, and strong policies.
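The developer lesson above about never exposing logs can be made concrete with a small redaction step applied before anything is written to a log. This is a rough sketch under stated assumptions: the patterns are illustrative, cover only a few obvious cases, and the `redact` helper is hypothetical rather than part of any logging library.

```python
import re

# Illustrative pattern only; a real deployment would need a broader, tested set.
SECRET_PATTERN = re.compile(
    r"(api[_-]?key|token|password)\s*[=:]\s*\S+", re.IGNORECASE
)
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(line: str) -> str:
    """Mask credential-like values and email addresses before a line is logged."""
    # Keep the field name so the log stays readable, but replace its value.
    line = SECRET_PATTERN.sub(lambda m: f"{m.group(1)}=[REDACTED]", line)
    return EMAIL_PATTERN.sub("[EMAIL]", line)


if __name__ == "__main__":
    print(redact("api_key=abc123 user=jane@example.com"))
    # api_key=[REDACTED] user=[EMAIL]
```

Pattern-based redaction is a safety net, not a guarantee; keeping secrets and user data out of log statements in the first place remains the stronger control.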
