Claude Safety & Ethics

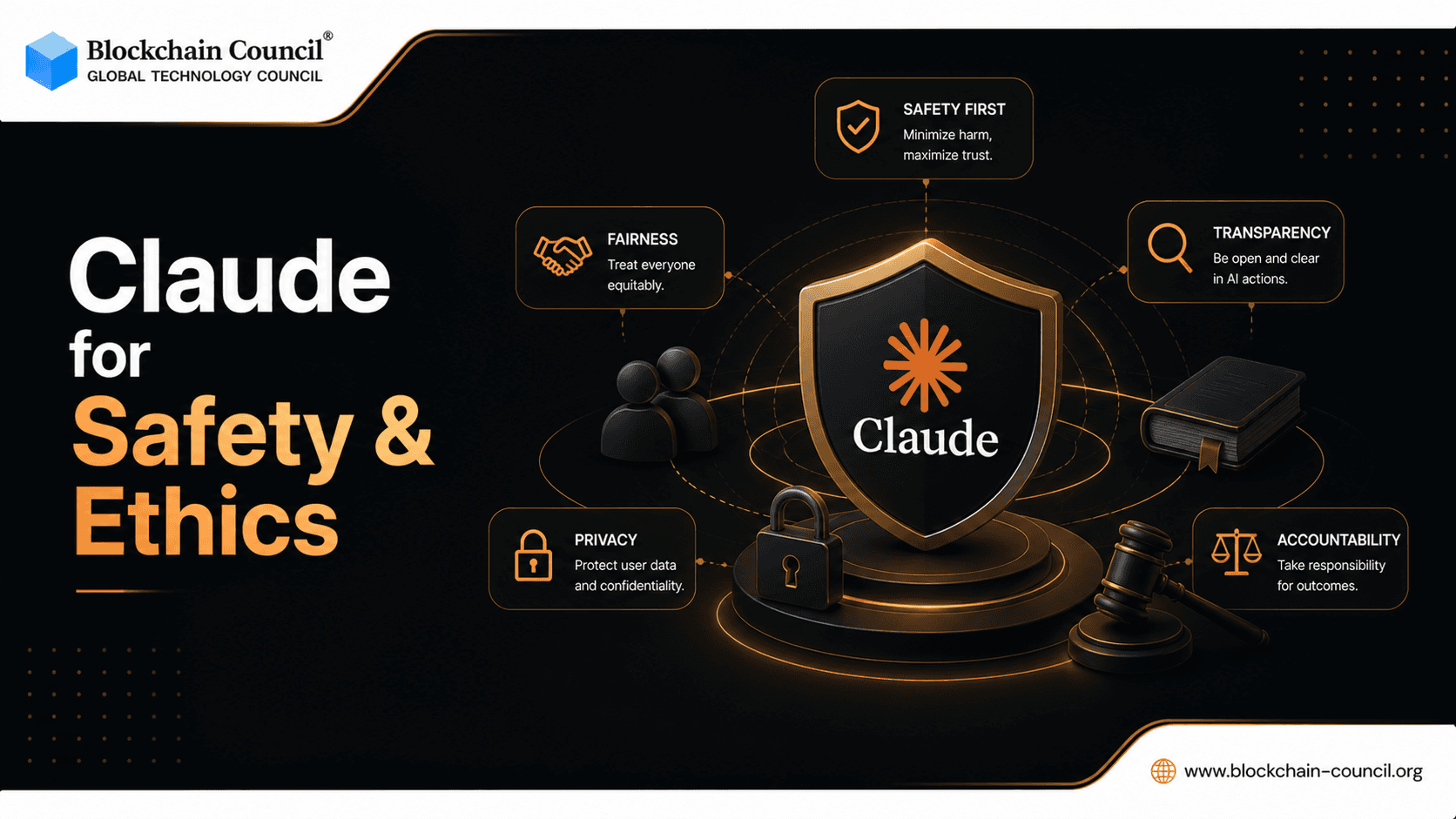

As AI becomes more powerful, one question matters more than ever: can we trust it?

From generating content to making decisions, AI systems influence millions of users daily. This makes safety, ethics, and alignment critical.

This is where Claude AI stands out. Built with a strong focus on responsible AI, Claude is designed to be helpful, honest, and safe.

What is AI Safety & Ethics?

AI safety refers to ensuring that artificial intelligence systems:

Do not cause harm

Provide reliable outputs

Follow ethical guidelines

AI ethics focuses on:

Fairness

Transparency

Accountability

Together, they help make AI trustworthy and aligned with human values.

Constitutional AI Principles

One of the key innovations behind Claude is Constitutional AI, developed by Anthropic.

What is Constitutional AI?

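It is a training approach in which an AI model is guided by a written set of principles (a “constitution”). Instead of relying only on human feedback to judge which outputs are harmful, the model uses these principles to critique and improve its own responses.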
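To make the idea more concrete, here is a minimal sketch of the critique-and-revise loop at the heart of Constitutional AI's supervised phase: a model drafts a response, critiques it against one principle, rewrites it, and repeats for each principle. This is an illustration under stated assumptions, not Anthropic's implementation or API. `constitutional_revision`, `toy_model`, the prompt wording, and the example principles are all hypothetical, and `toy_model` is a placeholder standing in for a real language model.

```python
from typing import Callable, List

# A "model" here is any function that maps prompt text to response text.
# This is a hypothetical stand-in, not a real Claude or Anthropic API.
Model = Callable[[str], str]


def constitutional_revision(model: Model, prompt: str, principles: List[str]) -> str:
    """Draft a response, then critique and revise it once per principle."""
    response = model(prompt)  # initial draft
    for principle in principles:
        # Ask the model to critique its current draft against one principle.
        critique = model(
            f"Critique the response below using this principle: {principle}\n"
            f"Prompt: {prompt}\nResponse: {response}"
        )
        # Ask the model to rewrite the draft so that it addresses the critique.
        response = model(
            f"Rewrite the response to address the critique.\n"
            f"Critique: {critique}\nResponse: {response}"
        )
    return response


def toy_model(text: str) -> str:
    """Deterministic placeholder so the loop can run without a real model."""
    if text.startswith("Critique"):
        return "critique"
    if text.startswith("Rewrite"):
        return "revised draft"
    return "first draft"


if __name__ == "__main__":
    # Illustrative principles, paraphrased for this example only.
    principles = [
        "Choose the response that is least likely to cause harm.",
        "Choose the response that is most honest.",
    ]
    print(constitutional_revision(toy_model, "Explain how vaccines work.", principles))
```

Running the script prints `revised draft`. In the published method, revised responses like these become fine-tuning data, and a later phase uses AI-generated preference judgments in place of human harmlessness labels; this sketch leaves both of those steps out.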

Key Benefits:

More consistent behavior

Reduced harmful outputs

Better alignment with ethical standards

Why It Matters:

It helps Claude:

Apply its principles consistently, without needing a human label for every case

Make safer decisions

Stay reliable at scale

Bias Handling in Claude

Bias in AI is a major concern.

What is AI Bias?

Bias occurs when AI produces:

Unfair results

Skewed perspectives

Discriminatory outputs

How Claude Handles Bias:

Balanced training data

Continuous evaluation

Ethical safeguards

Goal:

To provide fair and neutral responses across different contexts.

Safe Outputs: Preventing Harmful Content

Claude is designed to prioritize safety in every response.

Safety Mechanisms:

Content filtering

Harm prevention systems

Context-aware moderation

What It Avoids:

Harmful instructions

Unsafe content

Misleading information

Result:

More reliable and responsible AI interactions.

AI Alignment: Matching Human Values

AI alignment is the work of making AI systems behave in line with human intentions and values.

What Alignment Means:

Acting in users’ best interest

Avoiding harmful actions

Providing truthful outputs

Claude’s Approach:

Constitutional principles

Safety training

Continuous improvement

Limitations & Restrictions

No AI system is perfect, and Claude is no exception.

Key Limitations:

May lack real-time data

Can make mistakes

Needs human verification for important outputs

Restrictions:

Avoids unsafe or unethical tasks

Limits harmful outputs

Follows strict safety guidelines

Why This is Important:

Being clear about limitations and enforcing restrictions supports:

User safety

Ethical usage

Responsible AI deployment

Why Claude is a Leader in Safe AI

Compared with many other AI developers, Anthropic focuses heavily on:

Safety-first design

Ethical frameworks

Advanced alignment

Continuous improvements

This makes Claude one of the most trustworthy AI systems available today.

AI Learning Path: Understanding Ethical AI

To build a solid understanding of AI safety, work through three stages:

Step 1: Basics

AI fundamentals

Ethics principles

Step 2: Intermediate

Bias understanding

Safety mechanisms

Step 3: Advanced

AI alignment

Responsible AI development

A structured approach like the Certified Claude AI Expert Program helps you:

Understand AI ethics deeply

Apply safe AI practices

Build responsible systems

Career Opportunities

AI Ethics Specialist

Responsible AI Consultant

Policy Advisor

AI Researcher

Conclusion

As AI continues to evolve, safety and ethics will define its future.

Claude sets a strong example by combining:

Advanced technology

Ethical principles

Responsible design

The goal is not just powerful AI, but AI we can trust.

FAQs

1. What is Claude AI safety?

It refers to measures ensuring safe and ethical AI outputs.

2. What is Constitutional AI?

A framework where AI follows predefined ethical principles.

3. Who developed Claude AI?

It was developed by Anthropic.

4. How does Claude handle bias?

Through balanced training and continuous evaluation.

5. Is Claude safe to use?

It is designed with strong safety measures, but like any AI system it can make mistakes, so verify important outputs.

6. What is AI alignment?

Ensuring AI behaves according to human values.

7. Can AI be biased?

Yes, but systems like Claude work to reduce it.

8. What are safe outputs?

Responses that avoid harm and misinformation.

9. Does Claude have limitations?

Yes, like all AI systems.

10. Can Claude make mistakes?

Yes, human verification is important.

11. What is AI ethics?

Principles guiding responsible AI use.

12. Why is AI safety important?

To prevent harm and ensure trust.

13. Can Claude generate harmful content?

It is designed to avoid such outputs.

14. What industries use ethical AI?

Tech, healthcare, finance, and more.

15. What is bias in AI?

Unfair or skewed outputs.

16. How can I learn AI ethics?

Through courses and certifications.

17. Is AI regulated?

Increasingly, yes. Rules differ by country and are still evolving; the EU AI Act is one example.

18. What is responsible AI?

AI designed with safety and ethics in mind.

19. Can AI replace human judgment?

No, it should support decision-making.

20. What is the future of AI safety?

Stronger regulations and better alignment.
