The Hidden Environmental Costs of AI

Artificial intelligence feels invisible. You type a question, get an answer, and never think about what happens behind the screen. But every interaction with AI comes at a real environmental cost. AI needs massive amounts of electricity, water, and hardware to function. These demands are growing quickly, and they carry hidden consequences for the planet. This article explains those hidden costs in plain language, so you can see how AI shapes not only technology but also energy systems, climate goals, and communities worldwide. If you are exploring this field for your career, an AI certification can also help you understand how sustainability and advanced technology come together.

Why Understanding AI’s Environmental Impact Matters

AI systems are not just code. They live inside large warehouses packed with servers, called data centers. These buildings consume electricity to keep machines running, water to keep them cool, and new hardware every few years. Each step in this process, from building chips to disposing of old machines, leaves a mark on the environment.

For most people, the real costs are invisible. When you send a prompt to a chatbot, you don’t see the energy used to generate your answer. But at scale, across billions of queries each day, the impact is massive. This is why researchers, governments, and even technology companies themselves are starting to measure the hidden environmental costs of AI.

How Much Energy Does AI Really Use

The first and most important cost is electricity. Data centers are power-hungry, and AI makes them even hungrier. In 2022, global data centers consumed about 240 to 340 terawatt hours of electricity. That accounted for about one to one and a half percent of all electricity demand worldwide. To put it in perspective, that is roughly equal to the total electricity consumption of entire countries such as Australia.

In the United States, the picture is even clearer. In 2023, American data centers consumed 176 terawatt hours of electricity. That number represented about 4.4 percent of the entire country’s electricity use. And these figures are rising fast.
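As a quick sanity check on those two figures, the short sketch below backs out the total U.S. electricity use they imply. The function name `implied_total_twh` is made up for illustration; only the 176 terawatt hours and 4.4 percent values come from the text.

```python
def implied_total_twh(part_twh, share_percent):
    """Back out a total from one part and that part's percentage share."""
    return part_twh / (share_percent / 100)

us_data_center_twh = 176   # from the text, 2023
us_share_percent = 4.4     # from the text, 2023

total = implied_total_twh(us_data_center_twh, us_share_percent)
print(f"Implied total U.S. electricity use: about {total:,.0f} TWh")
```

The result, roughly 4,000 terawatt hours, is simply what the two quoted numbers imply together. It is not an independently sourced figure.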

According to the International Energy Agency, demand from data centers is expected to more than double by 2030, reaching about 945 terawatt hours. That growth is driven heavily by AI workloads, which include both training large models and running them millions of times a day.

Training a single large AI model can take weeks of nonstop computation on thousands of servers. This is one reason why companies like Google, OpenAI, and Microsoft invest so heavily in powerful GPUs and custom hardware. Once trained, those models must also be used by millions of people every day. That daily use, called inference, adds up to enormous amounts of energy use on its own.

The end result is simple: AI requires more electricity than many people realize. The question is not just whether the electricity exists, but whether it comes from clean or dirty sources.

The Link Between AI and Carbon Emissions

Electricity demand is closely tied to emissions. If the electricity comes from fossil fuels, every query you run through AI indirectly creates greenhouse gases.

A recent U.S. study showed that data centers contributed more than 105 million tonnes of carbon dioxide equivalent in 2023. That was about 2.18 percent of the country’s total emissions. What is more troubling is that the carbon intensity of these data centers is higher than the national average. This means each unit of energy they use tends to result in more emissions compared to other industries.

Big tech companies often speak about improving efficiency. While efficiency is improving, total emissions are still rising because demand is growing even faster. Between 2020 and 2023, indirect emissions from major tech firms rose by 150 percent. These emissions include electricity for data centers, supply chain logistics, and cooling.

Another way to see the scale is to compare AI training to national emissions. Researchers have estimated that training a single advanced AI model can release as much carbon dioxide as the annual emissions of a small country. This makes AI not just a technical breakthrough but also a climate challenge.

Why the Growth of AI Raises Alarms

Energy and emissions are not the only costs, but they set the stage for why people are worried. If AI workloads continue to expand at the current pace, their demand for electricity will rival that of entire developed nations by the end of the decade. Unless renewable energy sources replace fossil fuels at the same speed, the rise of AI could push climate targets out of reach.

The International Energy Agency warns that AI could more than double electricity demand from data centers by 2030. High-adoption scenarios, where AI spreads into nearly every part of society, show electricity demand increasing by 20 to 25 times compared to traditional computing.

These projections highlight a basic truth: AI is not just about digital progress. It is about energy, resources, and emissions in the physical world. That is why understanding the hidden environmental costs of AI is essential for anyone interested in technology, policy, or sustainability.

Institutional Drivers of Sustainable AI

Green infrastructure investment

Leading firms are committing capital to renewable-powered data centers. By aligning AI growth with clean energy, they reduce carbon footprints and ensure scalability without environmental backlash.

Energy-efficient model design

Research is shifting towards smaller, specialized, and more efficient models. Techniques like knowledge distillation, pruning, and quantization are cutting computational costs while preserving performance.
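To make one of these techniques a little more concrete, here is a minimal Python sketch of a simple variant of quantization: symmetric 8-bit quantization of a handful of weights. It is an illustration only, not how any particular framework implements it. The function names and sample weights are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp defensively in case rounding lands just outside the int8 range.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.5, -1.0, 0.25, 0.0]          # hypothetical model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

largest_error = max(abs(w - r) for w, r in zip(weights, restored))
print("quantized:", q)
print("largest rounding error:", largest_error)
```

Each weight is stored as a small integer plus one shared scale, so an 8-bit value takes a quarter of the space of a 32-bit float. The trade-off is a rounding error of at most half a scale step per weight.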

Hardware innovation

Chipmakers are racing to deliver energy-optimized processors tailored for AI workloads. Advances in neuromorphic computing and photonic chips could drastically lower energy consumption per training cycle.

Carbon-aware scheduling

AI systems are increasingly trained and deployed during periods of low-carbon energy availability. This smart scheduling aligns demand with renewable supply, improving both economics and sustainability.
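The idea can be sketched in a few lines of Python. Assuming an hourly forecast of grid carbon intensity is available (the numbers below are invented for illustration), a scheduler could pick the start hour whose window has the lowest total intensity. The function name `best_start_hour` is hypothetical, and real schedulers weigh many other constraints such as deadlines and hardware availability.

```python
def best_start_hour(forecast, job_hours):
    """Return the start index of the contiguous window with the lowest total intensity."""
    if job_hours <= 0 or job_hours > len(forecast):
        raise ValueError("job_hours must be between 1 and len(forecast)")
    window = sum(forecast[:job_hours])
    best_total, best_start = window, 0
    # Slide the window one hour at a time, updating the running total.
    for start in range(1, len(forecast) - job_hours + 1):
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_total:
            best_total, best_start = window, start
    return best_start

# Hypothetical forecast in grams of CO2-equivalent per kilowatt hour, one value per hour.
forecast = [450, 430, 400, 320, 210, 180, 190, 260, 380, 440]
start = best_start_hour(forecast, job_hours=3)
print(f"Start the 3-hour job at hour {start}")
```

With this made-up forecast, the cleanest three-hour window begins at hour 4, when the values dip to 210, 180, and 190.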

Circular economy for hardware

Institutions are exploring refurbishing, recycling, and second-life strategies for GPUs and servers. This reduces e-waste while extending the value chain of AI infrastructure.

Transparent reporting

A growing push for standardized metrics, such as carbon per parameter trained, enables investors and regulators to evaluate environmental impacts. Transparency helps institutions prioritize sustainable innovation.

Policy and regulatory clarity

Governments are setting clearer guidelines for sustainable digital infrastructure. Carbon-pricing schemes and green credits are incentivizing organizations to adopt eco-friendly AI strategies.

Cross-sector collaborations

Tech companies, academia, and energy providers are forming alliances to accelerate sustainable AI. Joint initiatives are pooling resources and knowledge to scale climate-friendly solutions.

Institutional sentiment

Surveys show that a majority of enterprises consider sustainability a key factor in their AI adoption roadmaps. This sentiment is reshaping procurement, R&D, and long-term investment priorities.

Global sustainability frameworks

International bodies are aligning on shared standards for sustainable AI deployment. Harmonized frameworks ensure that companies can scale AI responsibly across borders with consistent practices.

How Careers and Knowledge Fit In

While the environmental story may sound alarming, it also creates opportunities for professionals. New careers are emerging around making AI more efficient and sustainable. Engineers, policymakers, and data specialists are needed to measure, manage, and reduce the footprint of AI systems.

For those interested in growing in this field, education plays a key role. Structured learning such as AI certs gives you the foundation to understand how to apply AI responsibly. For people focused on broader systems, a Data Science Certification is a strong way to build skills in managing and analyzing complex datasets that include environmental metrics. Those in business can explore a Marketing and Business Certification to apply AI in ways that also meet sustainability goals. For those curious about digital infrastructure, blockchain technology courses also highlight the shared challenges of energy demand and scalability across advanced technologies.

This link between knowledge and action matters. As AI continues to grow, the professionals who understand its environmental impact will be the ones shaping a more sustainable future.

Water Use and Cooling Challenges

Electricity is only one part of the equation. The other big hidden cost of AI is water. Data centers produce a lot of heat because servers run constantly at high capacity. To prevent damage and keep performance stable, they must stay cool. Many facilities rely on water-based cooling systems, which pull in millions of gallons each day.

Analysts predict that by 2028, AI-related data centers could consume more than 1,068 billion liters of water annually. That would be an eleven-fold increase compared to today. To put that in simple terms, it is the same as filling hundreds of thousands of Olympic-sized swimming pools every year.
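The swimming pool comparison can be checked with a few lines of arithmetic. The sketch below assumes an Olympic pool holds 2.5 million liters (50 m by 25 m at a depth of 2 m), which is an assumption added here and not a figure from the analysts. The projection and the eleven-fold factor come from the text.

```python
LITERS_PER_OLYMPIC_POOL = 2_500_000        # assumption: 50 m x 25 m x 2 m
projected_liters_2028 = 1_068_000_000_000  # 1,068 billion liters, from the text
growth_factor = 11                         # "eleven-fold", from the text

pools_per_year = projected_liters_2028 / LITERS_PER_OLYMPIC_POOL
implied_baseline_liters = projected_liters_2028 / growth_factor

print(f"Olympic pools per year: {pools_per_year:,.0f}")
print(f"Implied current use: about {implied_baseline_liters / 1e9:,.0f} billion liters")
```

Under that pool-size assumption the projection equals about 427,200 pools a year, and the eleven-fold claim implies a current level of roughly 97 billion liters.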

The impact of this water use is not evenly distributed. Some data centers are built in regions that already face water stress. Farmers in these areas often compete with industrial facilities for the same supply. In places like Arizona, residents have already raised concerns that water-hungry technology firms are draining local resources.

Even at the level of individual queries, water usage adds up. Google’s recent data shows that one Gemini text prompt requires about 0.26 milliliters of water for cooling. That may sound tiny, but when billions of prompts are processed daily, the total becomes enormous.
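To see how the per-prompt figure scales, the following sketch multiplies it by a few daily prompt volumes. Only the 0.26 milliliter value comes from the text. The prompt counts and the function name `daily_water_liters` are hypothetical and chosen purely for illustration.

```python
ML_PER_PROMPT = 0.26  # milliliters per text prompt, from the text

def daily_water_liters(prompts_per_day, ml_per_prompt=ML_PER_PROMPT):
    """Convert a daily prompt count into liters of cooling water."""
    return prompts_per_day * ml_per_prompt / 1000  # 1,000 mL per liter

# Hypothetical volumes; the article does not give an exact daily prompt count.
for prompts in (1_000_000, 100_000_000, 1_000_000_000):
    liters = daily_water_liters(prompts)
    print(f"{prompts:>13,} prompts/day -> about {liters:,.0f} liters/day")
```

At a hypothetical one billion prompts a day, this works out to roughly 260,000 liters daily, or about 95 million liters a year, for cooling alone under the stated per-prompt figure.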

Communities are beginning to notice. Local governments are questioning permits for new data centers, and activists are calling for companies to disclose their true water footprints. Transparency remains limited, but the trend is clear: AI is pushing water demand higher and faster than many expected.

The Growing Problem of Electronic Waste

AI is built on powerful chips such as GPUs and TPUs. These devices are essential for training and running large models. But they have a limited lifespan. As demand for faster, more efficient hardware grows, older chips and servers are replaced at a rapid pace.

This cycle produces a significant amount of electronic waste. Estimates suggest that AI could add between 1.2 and 5 million metric tons of e-waste globally by 2030. In some scenarios, that would make up nearly 12 percent of global e-waste.

Electronic waste is not just about volume. Many components contain toxic materials like lead and mercury. If not handled properly, they can leach into soil and water, causing long-term pollution. While recycling programs exist, they are often uneven across regions and do not fully recover valuable materials.

The hidden cost of this waste is also economic. Building new hardware requires mining rare earth metals and manufacturing in energy-intensive plants. Each replacement cycle restarts the chain of extraction, transport, and disposal. This means that e-waste is both a local pollution problem and a global resource challenge.

Uneven Social and Local Impacts

Environmental costs are not shared equally. Data centers often bring their heaviest impacts to communities that already face challenges. Poorer areas are more likely to host facilities that rely on fossil fuel power plants. This increases air pollution and raises local health risks.

Water use creates another layer of inequity. A facility in a water-scarce region may divert resources away from agriculture or local residents. The benefits of AI are global, but the environmental burden is often concentrated on a few communities.

Researchers describe this as an uneven distribution of costs and benefits. Wealthy users in major cities enjoy powerful AI tools with little awareness of their impact. Meanwhile, smaller communities near data centers may face rising water bills, limited supply, and environmental stress.

This imbalance raises questions about fairness. Should companies compensate communities more directly? Should governments regulate where facilities are built? These debates are only beginning, but they will grow louder as AI expands.

Training vs Inference: Where the Energy Goes

People often ask whether training or inference consumes more energy. The answer is complex. Training large models is extremely energy intensive. It can take weeks of nonstop computation on thousands of GPUs. During its training phase, a single model may consume as much energy as thousands of households.

But inference, or daily use, should not be overlooked. Once the model is deployed, it is accessed millions or even billions of times. Each prompt requires less energy than training, but the sheer scale of requests makes the total impact huge.

For example, training a state-of-the-art model might emit the same carbon as a small country in one year. But if millions of people use that model every day for several years, the inference costs can exceed the training costs over time.

This means both sides matter. Efficiency improvements in training are helpful, but without changes to inference infrastructure, the footprint will continue to grow. It also explains why companies are investing in hardware that can process requests faster and with lower energy use.
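The crossover between training and inference can be estimated with simple division. In the sketch below, all three inputs are hypothetical placeholders, not measured values, and the function name `breakeven_days` is invented for this example.

```python
def breakeven_days(training_mwh, wh_per_query, queries_per_day):
    """Days of serving after which cumulative inference energy equals training energy."""
    inference_mwh_per_day = wh_per_query * queries_per_day / 1_000_000  # Wh -> MWh
    return training_mwh / inference_mwh_per_day

# Hypothetical inputs: 1,000 MWh to train, 0.3 Wh per query, 10 million queries a day.
days = breakeven_days(training_mwh=1_000, wh_per_query=0.3, queries_per_day=10_000_000)
print(f"Inference overtakes training after about {days:.0f} days")
```

With these made-up inputs, inference passes the training total in under a year. Changing any one input moves the crossover proportionally, which is why per-query efficiency matters so much at scale.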

Why These Issues Are Hard to See

One reason these costs remain hidden is that companies do not always release detailed data. Reporting standards for AI’s environmental footprint are weak. Some companies share high-level numbers on electricity or water use, but they rarely break down the share specific to AI.

Without transparency, it is hard for researchers, regulators, and the public to understand the true impact. This lack of visibility allows costs to remain externalized, falling on local communities and ecosystems rather than on companies or users.

The Organization for Economic Co-operation and Development has pointed out this gap in policy. Until clear reporting rules are adopted, the hidden costs of AI will remain undercounted.

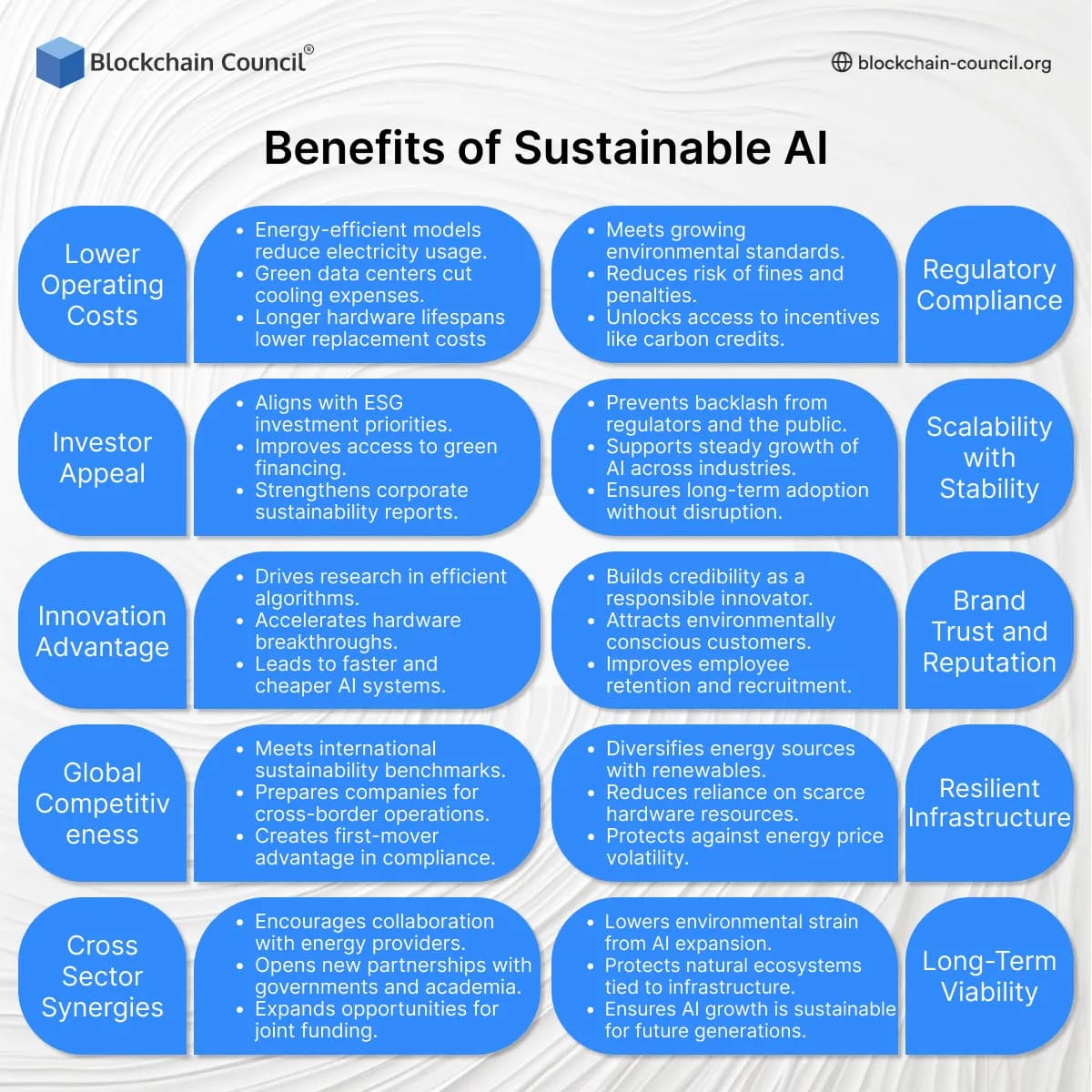

Benefits of Sustainable AI

Lower Operating Costs

- Energy-efficient models reduce electricity usage.

- Green data centers cut cooling expenses.

- Longer hardware lifespans lower replacement costs.

Regulatory Compliance

- Meets growing environmental standards.

- Reduces risk of fines and penalties.

- Unlocks access to incentives like carbon credits.

Investor Appeal

- Aligns with ESG investment priorities.

- Improves access to green financing.

- Strengthens corporate sustainability reports.

Scalability with Stability

- Prevents backlash from regulators and the public.

- Supports steady growth of AI across industries.

- Ensures long-term adoption without disruption.

Innovation Advantage

- Drives research in efficient algorithms.

- Accelerates hardware breakthroughs.

- Leads to faster and cheaper AI systems.

Brand Trust and Reputation

- Builds credibility as a responsible innovator.

- Attracts environmentally conscious customers.

- Improves employee retention and recruitment.

Global Competitiveness

- Meets international sustainability benchmarks.

- Prepares companies for cross-border operations.

- Creates first-mover advantage in compliance.

Resilient Infrastructure

- Diversifies energy sources with renewables.

- Reduces reliance on scarce hardware resources.

- Protects against energy price volatility.

Cross-Sector Synergies

- Encourages collaboration with energy providers.

- Opens new partnerships with governments and academia.

- Expands opportunities for joint funding.

Long-Term Viability

- Lowers environmental strain from AI expansion.

- Protects natural ecosystems tied to infrastructure.

- Ensures AI growth is sustainable for future generations.

Hardware Manufacturing and Resource Extraction

AI would not exist without specialized hardware. Graphics processing units (GPUs), tensor processing units (TPUs), and other high-performance chips are the engines that train and run large models. But these chips do not come out of thin air. They are manufactured through an intensive process that begins with mining rare earth elements and other metals.

Mining is destructive. It strips landscapes, contaminates soil, and pollutes rivers. Many mines are located in regions with weak environmental protections. Communities near these operations often deal with poor air quality, toxic waste, and unsafe labor conditions. This means that before a chip even enters a data center, it has already left an environmental footprint.

The next step is fabrication. Semiconductor plants are some of the most resource-hungry industrial facilities in the world. They use enormous amounts of water for cooling and cleaning. They also consume vast amounts of electricity. Once built, the chips must be packaged, transported, and installed into servers. Each step creates emissions and resource use that are rarely counted in official AI impact reports.

Disposal and End-of-Life Issues

No piece of hardware lasts forever. Servers wear out. Cooling systems break down. Chips become outdated as new, faster versions are released. This cycle produces a steady stream of waste.

Disposal is tricky because electronic components contain toxic materials like mercury and lead. If these parts are not recycled properly, they can leak into soil and water, creating long-term pollution. Many countries ship old electronics to regions with weak recycling standards, where they are broken down in unsafe conditions. Workers in these areas may be exposed to hazardous chemicals without protection.

Even recycling itself has costs. Recovering metals from old electronics requires energy, water, and chemicals. While recycling is better than dumping, it is not a perfect solution. The reality is that every replacement cycle for AI hardware restarts a chain of extraction, manufacturing, and disposal.

Future Growth and Rising Demand

The demand for AI is not slowing down. Reports from the International Energy Agency show that by 2030, electricity demand from data centers could more than double, reaching nearly 945 terawatt hours per year. That is almost the same as the entire electricity consumption of Japan today.

In high adoption scenarios, AI could increase electricity use by 20 to 25 times compared to traditional computing. This is not a distant risk. AI is moving into healthcare, finance, education, entertainment, and more. Each new application adds more daily queries, more servers, and more demand for power and cooling.

Water demand is also expected to grow sharply. By 2028, AI data centers may consume more than 1,068 billion liters of water annually. That is an 11-fold increase from today’s levels. Communities already struggling with drought could face serious shortages if more facilities are built nearby.

The risk is simple. If clean energy and sustainable practices do not expand as quickly as AI adoption, the environmental impact could derail global climate targets.

Lack of Transparency and Accountability

One reason the full costs remain hidden is the lack of standard reporting. Companies often release broad sustainability reports, but they rarely separate AI-specific numbers. For example, they may disclose total electricity use without stating how much is tied to training and inference.

This lack of clarity makes it difficult to compare companies or hold them accountable. Regulators are beginning to call for stricter transparency standards, but progress is slow. Without public data, it is hard for researchers to estimate the real global impact.

Transparency is not just a policy issue. It also affects consumers and businesses. If people do not know the environmental cost of their tools, they cannot make informed choices. A culture of openness is needed so that AI progress does not come at the expense of hidden environmental damage.

What Can Be Done to Reduce the Costs

AI’s environmental impact is not fixed. There are real steps that can help reduce the costs while still allowing innovation.

- Shift to renewable energy: Data centers powered by wind, solar, or hydro have a much lower carbon footprint. Companies can sign long-term contracts for clean power.

- Improve efficiency: Hardware can be designed to use less energy per task. Software can also be optimized to reduce waste.

- Smarter cooling systems: Advanced cooling methods, such as liquid cooling or locating facilities in cooler climates, can reduce water and energy demand.

- Longer hardware lifespans: Extending the life of chips and servers reduces the volume of e-waste. Better recycling systems can recover more valuable metals.

- Policy and regulation: Governments can enforce transparency standards, limit water use in stressed regions, and push companies toward cleaner energy.

These solutions will not remove all costs, but they can make AI growth more balanced with environmental needs.

Building Knowledge for a Sustainable Future

For professionals, this is also a time of opportunity. Companies are looking for people who can design, manage, and regulate AI in ways that are efficient and sustainable. Education is one way to prepare for this future.

Structured learning helps professionals understand the shared challenges of scaling digital infrastructure. For example, AI certs are becoming more common, giving people the chance to work with advanced systems while understanding their costs.

These certifications do more than teach technical skills. They also build awareness of how technology choices affect the environment and society. The professionals trained today will be the ones who shape a more sustainable AI ecosystem tomorrow.

Conclusion

AI has the power to transform industries, solve problems, and improve lives. But behind every chatbot response and generated image is a hidden environmental cost. Data centers consume vast amounts of electricity. Training and inference release millions of tons of carbon emissions. Cooling systems require billions of liters of water. Hardware manufacturing and disposal create pollution and waste.

These costs are not spread evenly. Communities near data centers often face the heaviest burdens, from water shortages to air pollution. And without clear reporting, the true scale of the problem remains hidden from most users.

Yet there is room for action. Companies can invest in clean energy and smarter systems. Governments can set transparency standards. Professionals can learn the skills to design and manage AI responsibly. For individuals, awareness is the first step. Every choice we make about how to build and use AI helps shape its environmental footprint.

The future of AI will not be judged only by how powerful it becomes, but also by how responsibly it grows. By facing its hidden environmental costs today, we can build a future where AI is not only smart but also sustainable.
