AI and Mental Health

Artificial intelligence is rapidly changing the way mental health care is delivered, accessed, and understood. From chatbots that provide emotional support to algorithms that help detect early signs of depression, AI is stepping into a space long underserved by traditional systems. The key question many ask is simple: can AI really help improve mental health while ensuring safety, privacy, and trust? This article explores the promise, risks, and ethical concerns of AI in mental health, looking at how it can expand access while highlighting why careful oversight is essential. For professionals who want to lead responsibly in this field, an AI certification is a strong step toward understanding both the technology and its social impact.

Why Mental Health Needs AI

Mental health care faces a global crisis. The World Health Organization reports that nearly one billion people worldwide live with some form of mental disorder, yet fewer than half receive treatment. In low- and middle-income countries, the treatment gap is even larger due to shortages of trained professionals.

AI offers a potential bridge. It can scale support by providing round-the-clock chatbots, digital therapy tools, and early screening systems. These are not replacements for human clinicians but can act as first responders—identifying issues earlier, reducing wait times, and providing relief when human resources are limited.

The biggest appeal of AI in mental health is accessibility. With internet access, individuals in remote or underserved areas can access digital tools instantly. This could change how societies approach prevention, diagnosis, and treatment.

Benefits of AI in Mental Health

Early Detection and Diagnosis

- Analyzes speech, text, and behavioral patterns for early warning signs.

- Helps detect conditions like depression, anxiety, and PTSD.

- Provides scalable screening tools for underserved populations.

Personalized Treatment Plans

- Recommends tailored therapy approaches based on patient data.

- Tracks individual progress to adjust treatment in real time.

- Supports precision medicine by combining genetics, lifestyle, and history.

Virtual Therapy and Chatbots

- Offers 24/7 support through AI-driven mental health assistants.

- Reduces stigma by enabling private, accessible conversations.

- Extends access in regions with therapist shortages.

Monitoring and Crisis Prevention

- Continuously monitors patient behavior and mood via wearables or apps.

- Flags potential crises, such as suicidal ideation, for rapid intervention.

- Provides alerts to caregivers and clinicians when urgent care is needed.

Clinician Support

- Reduces administrative burden by automating note-taking and documentation.

- Offers data-driven insights to support clinical decisions.

- Enhances efficiency, allowing clinicians to focus more on patient care.

Accessibility and Affordability

- Expands access in low-resource areas with limited mental health professionals.

- Reduces therapy costs by scaling digital interventions.

- Bridges gaps for people unable to attend in-person sessions.

Research and Insights

- Identifies population-level trends in mental health conditions.

- Analyzes treatment effectiveness across diverse groups.

- Supports innovation in therapy and intervention design.

Ethical and Privacy Considerations

- Sensitive patient data must be protected with secure systems.

- Algorithmic bias that could misdiagnose or exclude patients must be avoided.

- AI tools should supplement, not replace, human therapists.

AI Chatbots and Digital Companions

One of the most visible applications of AI in mental health is chatbots. These tools simulate conversations and provide emotional support, stress management tips, or guided self-help exercises.

For mild to moderate issues, studies show that chatbots can reduce symptoms of anxiety and depression when users engage consistently. They are not designed to treat severe conditions but offer a bridge between self-care and professional help.

Popular apps like Woebot, Wysa, and Replika use conversational AI to help people track their moods, reframe negative thoughts, or simply feel less isolated. While these tools are not substitutes for therapy, they can serve as “digital first aid,” offering users a place to talk when human therapists are unavailable.

Yet risks exist. Users may form emotional dependencies on chatbots, blurring the line between companionship and therapy. Long-term reliance without human guidance could worsen feelings of isolation rather than improve them. This is why experts stress that AI chatbots should supplement, not replace, professional care.

Personalized Monitoring and Wearables

Beyond chatbots, AI is increasingly being integrated into wearable devices and health apps. Smartwatches and fitness trackers now collect data such as heart rate, sleep cycles, and activity levels. AI analyzes this data to detect patterns that may signal mental health issues.

For example, irregular sleep or sudden drops in activity can be early indicators of depression. AI-driven platforms can flag these changes and alert users or healthcare providers. This type of continuous monitoring provides insights that traditional check-ins might miss.
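To make the idea concrete, here is a minimal sketch of how a platform might compare recent activity against a person's own baseline. The function name, the 14-day baseline, the 3-day window, and the z-score threshold are all illustrative assumptions, not values from any real product or clinical guideline.

```python
from statistics import mean, stdev


def flag_activity_drop(daily_steps, baseline_days=14, recent_days=3, z_threshold=-2.0):
    """Return True when recent activity falls far below the user's own baseline.

    daily_steps: list of daily step counts, oldest first (hypothetical input).
    All thresholds are illustrative assumptions, not clinically validated.
    """
    if len(daily_steps) < baseline_days + recent_days:
        return False  # not enough history to judge

    # Baseline window ends where the recent window begins.
    baseline = daily_steps[-(baseline_days + recent_days):-recent_days]
    recent = daily_steps[-recent_days:]

    spread = stdev(baseline)
    if spread == 0:
        return False  # a perfectly flat baseline gives no usable scale

    # How many baseline standard deviations the recent mean sits from the baseline mean.
    z = (mean(recent) - mean(baseline)) / spread
    return z <= z_threshold


history = [8000, 8200, 7900, 8100] * 4 + [3000, 2800, 3100]
print(flag_activity_drop(history))
```

A flag like this says only that behavior has changed. It cannot say why, so in practice it would prompt a check-in rather than a conclusion.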

AI also enables personalized care. By analyzing patterns unique to each user, AI can suggest tailored interventions—like mindfulness reminders for stress or activity nudges for low mood. Such personalization helps make mental health care more proactive.

Still, concerns remain about privacy. Sensitive health data must be protected with strong safeguards. Without robust regulation, the risk of misuse or breaches could undermine public trust.

Early Detection and Screening

Another promising use of AI is early screening. Algorithms can analyze speech, text, or social media behavior to detect markers of depression, anxiety, or suicidal thoughts. For example, subtle changes in word choice or sentence structure can signal shifts in mental state.

Healthcare providers are beginning to explore AI-driven assessments that can help flag at-risk individuals earlier. This can be life-saving, particularly for suicide prevention, where timely intervention is critical.

However, false positives and false negatives remain a risk. An AI system that inaccurately flags someone as suicidal could cause unnecessary distress. Conversely, failing to detect a genuine case could have tragic consequences. Human oversight remains essential in interpreting these signals.
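A short calculation shows why false positives matter so much when the condition being screened for is rare. The sketch below computes the share of flagged people who are truly at risk (the positive predictive value) from a tool's sensitivity, its specificity, and the prevalence of the condition. The numbers in the example are hypothetical and chosen only for illustration.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Share of positive flags that are true positives.

    sensitivity: fraction of truly at-risk people the tool flags
    specificity: fraction of not-at-risk people the tool correctly clears
    prevalence:  fraction of the screened population that is truly at risk
    """
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    total_flags = true_positives + false_positives
    if total_flags == 0:
        return 0.0  # nobody is flagged at all
    return true_positives / total_flags


# Hypothetical tool: 90% sensitivity, 90% specificity, 2% prevalence.
ppv = positive_predictive_value(0.90, 0.90, 0.02)
print(f"{ppv:.2f}")  # roughly 0.16
```

Under these assumed numbers, only about one flag in six points to someone truly at risk, even though the tool is "90% accurate" in both directions. This is one reason a clinician needs to review each flag before anyone acts on it.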

Effectiveness: Where AI Works Best

Research suggests AI is most effective for mild to moderate conditions where support, monitoring, and self-care play large roles. For example, cognitive behavioral therapy (CBT) principles can be adapted into chatbot scripts that guide users through exercises.

But for severe psychiatric conditions such as schizophrenia, bipolar disorder, or high-risk suicidality, AI cannot replace the nuanced judgment of trained clinicians. In these cases, AI may still support treatment by providing data insights or assisting professionals with workload, but the human element remains irreplaceable.

This distinction is vital. AI is a tool, not a cure. It works best when used as part of a blended care model where humans provide depth, empathy, and accountability.

The Ethical and Safety Concerns

As AI tools become more common in mental health care, ethical concerns are front and center.

- Accuracy: Inaccurate suggestions or diagnoses could cause harm.

- Privacy: Sensitive mental health data must be handled with the highest safeguards.

- Dependency: Over-reliance on chatbots may displace genuine human support.

- Equity: Access to AI tools is uneven, raising the risk of widening disparities.

Emotional AI adds another layer of risk. These systems attempt to detect emotions in real time, but misreading emotions could lead to inappropriate or even harmful responses. Cultural differences in emotional expression complicate matters further.

Regulation is beginning to emerge. For instance, in the United States, the FDA is weighing rules for AI-driven mental health devices, particularly concerning diagnosis and therapeutic claims. Internationally, frameworks stress transparency, human oversight, and fairness.

Why Oversight Is Essential

More than most areas of healthcare, mental health care depends on trust, vulnerability, and subjective experience. If AI is not transparent or safe, it risks damaging trust not just in technology but in mental health care as a whole.

Oversight ensures that AI tools meet ethical and clinical standards. This includes requiring human-in-the-loop systems for high-risk applications, rigorous testing for accuracy, and clear communication with users about what AI can and cannot do.
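As a rough illustration of what "human-in-the-loop" can mean in software, the sketch below routes each automated assessment to a queue based on a risk score. The `Assessment` type, the queue names, and the thresholds are hypothetical, and a real system would set them with clinical input. The design choice worth noting is that anything uncertain or malformed goes to a person rather than being handled automatically.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    user_id: str
    risk_score: float  # hypothetical model output, expected in [0, 1]


def route(assessment, review_threshold=0.3, urgent_threshold=0.7):
    """Decide who handles an assessment; thresholds are illustrative assumptions."""
    score = assessment.risk_score

    # Fail safe: an out-of-range or NaN score is never handled automatically.
    if not 0.0 <= score <= 1.0:
        return "clinician_review"

    if score >= urgent_threshold:
        return "urgent_clinician_review"
    if score >= review_threshold:
        return "clinician_review"

    # Low-risk cases stay with self-help tools, which should still state their limits.
    return "self_help_with_disclosure"


print(route(Assessment(user_id="demo", risk_score=0.82)))
```

In this sketch the software never makes the final call on a high-risk case. It only decides how quickly a human sees it.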

The principle is simple: AI can support, but it cannot replace human responsibility.

Real-World Case Studies of AI in Mental Health

Chatbots in Practice

Chatbots like Woebot, Wysa, and Replika are among the most widely recognized AI tools for mental health. Woebot, created by clinical researchers, uses principles from cognitive behavioral therapy (CBT) to help users reframe negative thoughts. It checks in daily, asks reflective questions, and offers practical strategies.

Wysa has been adopted in workplaces and universities to provide scalable mental health support. Users can engage with its chatbot for stress management, anxiety, and depression. When necessary, Wysa connects users with human therapists.

Replika, on the other hand, focuses on companionship. Users chat with an AI “friend” that remembers their conversations and adapts to their personality. While some find this comforting, others raise concerns about emotional dependency, especially for vulnerable individuals.

These examples highlight both the promise and the risks. Chatbots can extend access, but they must be carefully managed to prevent overreliance or harm.

Screening and Prediction Tools

AI is also being tested in hospitals and clinics for early detection of mental health conditions. Algorithms analyze patients’ speech, facial expressions, or social media activity to identify markers of depression or anxiety.

For instance, researchers have used AI models to study subtle pauses, tone changes, and word patterns in speech that correlate with mental health conditions. Such screening could one day allow doctors to detect problems earlier than traditional methods.

On social media, AI tools scan for suicidal language and send alerts to platforms or crisis lines. Facebook has experimented with such tools, though critics worry about privacy, consent, and false alarms. The potential is real, but so are the risks.

Wearables and Continuous Monitoring

Wearables are increasingly part of AI mental health strategies. Devices like Fitbit or Apple Watch track physical indicators such as heart rate variability and sleep quality. AI algorithms interpret these signals to suggest when stress is high or mood may be declining.

Some startups go further, developing specialized mental health wearables. These collect data such as skin conductance or breathing patterns, offering real-time feedback to users. The idea is to help people recognize their emotional states earlier and manage them proactively.

While promising, continuous monitoring also raises questions: who owns the data, how is it stored, and can it be misused by insurers, employers, or third parties? These concerns underscore the need for robust safeguards.

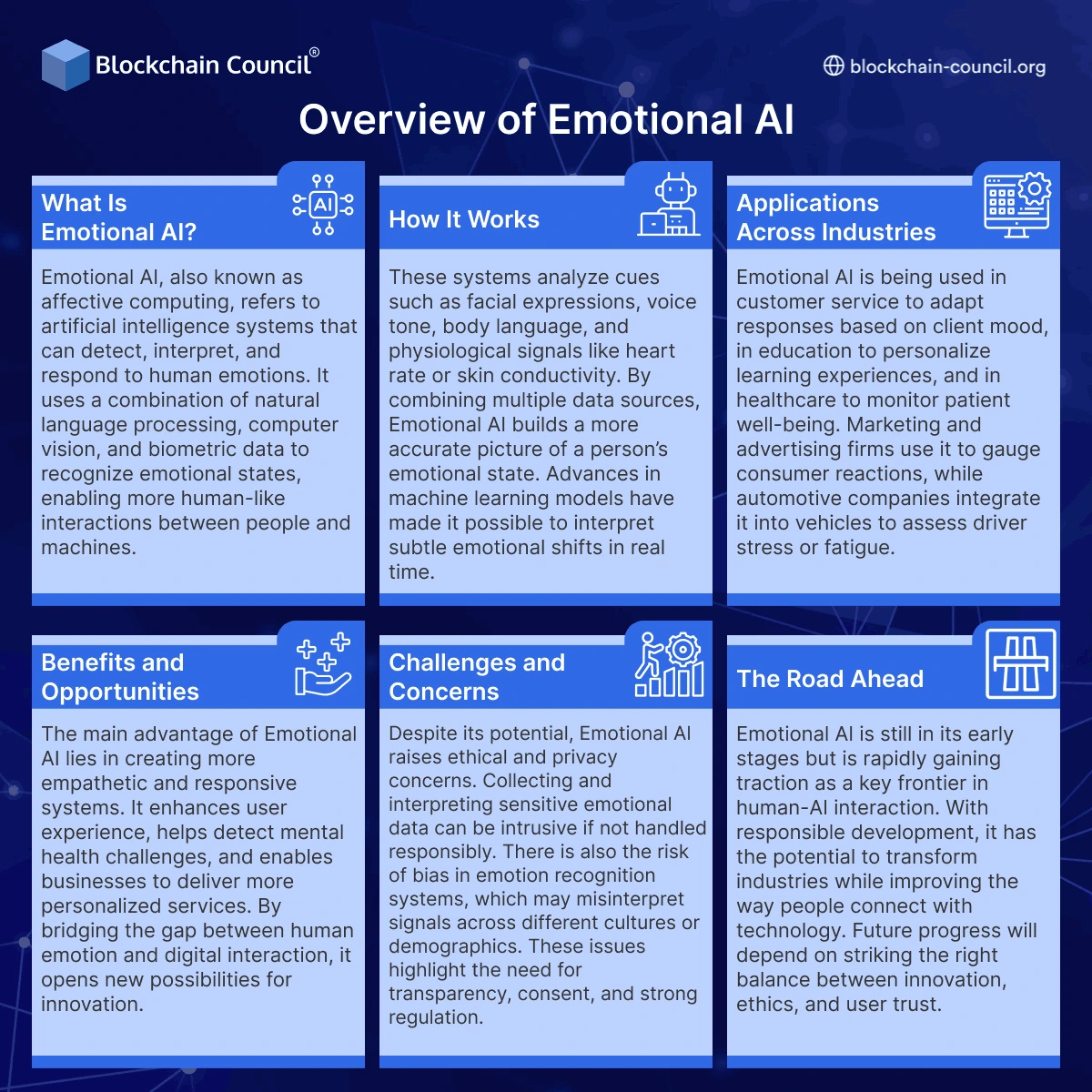

Overview of Emotional AI

What Is Emotional AI?

Emotional AI, also known as affective computing, refers to artificial intelligence systems that can detect, interpret, and respond to human emotions. It uses a combination of natural language processing, computer vision, and biometric data to recognize emotional states, enabling more human-like interactions between people and machines.

How It Works

These systems analyze cues such as facial expressions, voice tone, body language, and physiological signals like heart rate or skin conductivity. By combining multiple data sources, Emotional AI builds a more accurate picture of a person’s emotional state. Advances in machine learning models have made it possible to interpret subtle emotional shifts in real time.

Applications Across Industries

Emotional AI is being used in customer service to adapt responses based on client mood, in education to personalize learning experiences, and in healthcare to monitor patient well-being. Marketing and advertising firms use it to gauge consumer reactions, while automotive companies integrate it into vehicles to assess driver stress or fatigue.

Benefits and Opportunities

The main advantage of Emotional AI lies in creating more empathetic and responsive systems. It enhances user experience, helps detect mental health challenges, and enables businesses to deliver more personalized services. By bridging the gap between human emotion and digital interaction, it opens new possibilities for innovation.

Challenges and Concerns

Despite its potential, Emotional AI raises ethical and privacy concerns. Collecting and interpreting sensitive emotional data can be intrusive if not handled responsibly. There is also the risk of bias in emotion recognition systems, which may misinterpret signals across different cultures or demographics. These issues highlight the need for transparency, consent, and strong regulation.

The Road Ahead

Emotional AI is still in its early stages but is rapidly gaining traction as a key frontier in human-AI interaction. With responsible development, it has the potential to transform industries while improving the way people connect with technology. Future progress will depend on striking the right balance between innovation, ethics, and user trust.

In mental health, emotional AI could improve engagement. Imagine a chatbot that not only responds to what you type but also detects frustration or sadness in your tone and adjusts accordingly. This creates a more human-like interaction and may help users feel understood.

But the risks are significant. Emotional cues vary across cultures, and misinterpretations could lead to inappropriate responses. For example, an AI misreading sarcasm as distress could cause unnecessary alarm. Conversely, failing to detect genuine distress could delay critical help.

Another risk is manipulation. Emotional AI could be misused to keep users engaged with apps for profit, rather than supporting their health. The line between empathy and exploitation must be carefully managed.

Privacy and Consent

Mental health data is among the most sensitive information a person can share. When AI tools collect, store, or analyze this data, privacy becomes a central concern.

Users must know exactly what data is being collected, how it is stored, and who can access it. Unfortunately, many mental health apps have been criticized for vague privacy policies and poor protections.

Informed consent is another challenge. People using chatbots or wearables may not fully understand how their data will be used. Transparency is critical to ensure trust. Without it, users may avoid AI tools altogether, undermining their potential.

Internationally, frameworks like the EU’s General Data Protection Regulation (GDPR) set strict rules for data handling. In the United States, regulations are patchier, and mental health apps often fall outside traditional healthcare laws like HIPAA. Stronger, targeted protections are needed as AI use grows.

Regulation and Oversight

Regulation of AI in mental health is emerging but uneven. In the United States, the Food and Drug Administration (FDA) has started reviewing AI-driven mental health devices. In September 2025, an FDA panel weighed safety concerns around AI diagnostic tools, particularly their risk of giving misleading results.

Professional organizations are also issuing guidance. The American Psychological Association stresses that AI tools must complement, not replace, human therapists. Clinicians remain responsible for ensuring the safety and accuracy of interventions.

Globally, ethical frameworks emphasize similar principles: transparency, accountability, fairness, and human oversight. UNESCO, the World Economic Forum, and various research groups all warn against overreliance on AI without clear safeguards.

Still, enforcement is inconsistent. Some tools on the market operate with little regulation, leaving users vulnerable. Stronger global coordination will be essential to align innovation with protection.

How AI Can Complement Therapists

Despite the risks, AI can be an ally to mental health professionals rather than a competitor.

- Administrative Support: AI can help therapists by automating scheduling, note-taking, and progress tracking. This frees clinicians to focus more on patient care.

- Data Insights: AI tools analyzing wearable or behavioral data can give therapists richer information about their clients’ daily lives, improving treatment plans.

- Scalable Support: For mild cases, AI chatbots can provide ongoing support between sessions. This keeps patients engaged and reduces dropouts.

- Crisis Detection: AI monitoring systems can flag early warning signs, allowing therapists to intervene sooner.

This blended model combines the efficiency of AI with the empathy and expertise of humans. The key is clear boundaries: AI supports, but humans lead.

The Challenge of Trust

Trust is fragile in mental health care. A single negative experience with an AI tool—such as an inaccurate response or a privacy breach—can cause lasting damage to user confidence. For vulnerable populations, trust is even harder to rebuild.

This is why transparency, oversight, and ethical design are non-negotiable. Users must feel confident that AI tools are safe, respectful, and trustworthy. Only then can they serve as meaningful complements to traditional care.

Expanding Access Globally

AI holds particular promise for underserved communities worldwide. In low-income countries with limited access to psychiatrists or psychologists, chatbots and apps could provide vital support. Even basic screening tools could help identify those in need earlier.

In refugee camps or conflict zones, AI-based mental health apps can provide immediate support where professionals are scarce. However, cultural sensitivity is essential. AI systems must be trained on diverse data to reflect different languages, values, and emotional norms.

Without localization, AI risks misrepresenting or alienating the very populations it is meant to serve. Collaboration with local communities is key to designing tools that are both effective and respectful.

The Long-Term Future of AI in Mental Health

AI is still in its early stages in mental health, but the trajectory is clear. In the coming years, AI systems will likely become more integrated into everyday care. Instead of separate apps or chatbots, mental health support may be built directly into devices and digital services people already use.

For example, smartphones could come preloaded with AI companions that monitor mood and provide early interventions. Wearables could continuously analyze biometric data, offering real-time stress management tips. Even workplace platforms might include AI wellness assistants to help employees balance workloads and mental well-being.

The potential impact is enormous. With broader adoption, AI could normalize proactive mental health care. Instead of waiting until symptoms become severe, people could receive continuous support. Early interventions could reduce the burden on overworked clinicians and lower the global treatment gap.

At the same time, the risks will grow. More integration means more data collection, more chances of misuse, and more pressure to rely on technology. The challenge will be ensuring these systems evolve with strong ethical frameworks in place.

Risks of Overreliance and Emotional Dependency

As AI becomes more accessible, one of the biggest risks is overreliance. People may begin to substitute AI companionship for human connection. While talking to a chatbot can feel comforting, it does not replace genuine social interaction.

Research is already showing that some users develop emotional attachments to chatbots like Replika. They may treat the AI as a friend or even a partner, creating dependencies that complicate real-world relationships. Vulnerable populations, especially teenagers and the elderly, may be particularly at risk.

Another risk is what researchers call “feedback loops.” If an AI reinforces negative beliefs—intentionally or unintentionally—it could worsen mental health rather than improve it. For example, if a chatbot fails to challenge harmful self-talk, it might deepen depressive thoughts.

The long-term danger is not just individual harm but societal change. If large populations begin turning to AI instead of human therapists, the role of empathy, accountability, and shared experience in mental health care could erode.

Professional Skills in the Age of AI

For AI to be used responsibly, professionals in mental health and related fields need new skills. Clinicians must understand how AI systems work, what data they use, and how to interpret their outputs. They must also know when AI is appropriate and when it is not.

AI certifications are becoming an important way for professionals to build these skills. They cover both technical foundations and ethical considerations, preparing learners to use AI safely in high-stakes contexts like healthcare.

For those working with data-heavy mental health tools, a Data Science Certification can be invaluable. It teaches professionals how to handle sensitive datasets, apply machine learning responsibly, and evaluate the accuracy of models. These skills are critical for designing AI systems that are not only effective but also fair and transparent.

Leaders in hospitals, clinics, and mental health organizations may benefit from a Marketing and Business Certification. This type of training helps them integrate AI into service delivery responsibly, balancing innovation with ethics. It also equips them to communicate clearly with patients, staff, and regulators about what AI can and cannot do.

In parallel, blockchain technology courses are increasingly relevant. Blockchain offers tools for verifying data integrity and securing patient records. In mental health, where confidentiality is paramount, blockchain can add an extra layer of trust and accountability.

Together, these certifications provide a roadmap for professionals who want to lead in an era where AI and mental health are increasingly intertwined.

Ethical Guardrails for the Future

To build a future where AI supports mental health without causing harm, clear ethical guardrails are needed. These include:

- Transparency: Users must understand what AI tools can do, how they work, and what their limitations are.

- Human Oversight: High-risk applications, such as suicide prevention or diagnosis, must always involve human professionals.

- Privacy: Mental health data must be protected at the highest level, with strict consent and data handling policies.

- Fairness: AI tools must be designed with diverse populations in mind to avoid bias and cultural misrepresentation.

- Accountability: Developers, providers, and organizations must be held accountable for the outcomes of AI-driven tools.

These principles are already echoed in frameworks from organizations like UNESCO, the American Psychological Association, and the World Economic Forum. The next step is enforcing them consistently across markets and applications.

The Balance Between AI and Human Care

At its best, AI does not replace therapists—it empowers them. It handles repetitive tasks, analyzes complex data, and provides scalable support, allowing human clinicians to focus on what they do best: listening, empathizing, and guiding.

This balance is crucial. Mental health is not just about fixing symptoms. It is about relationships, trust, and shared humanity. AI can analyze words, but it cannot feel empathy. It can predict outcomes, but it cannot share lived experience.

A healthy future for AI in mental health means recognizing these limits. Humans must remain at the center of care, with AI serving as a supportive partner.

The Role of Public Trust

Public trust will determine whether AI in mental health succeeds or fails. If people believe AI tools are unsafe, biased, or invasive, they will avoid them. If they see them as transparent, supportive, and respectful, adoption will grow.

Trust depends on how developers, regulators, and professionals act today. Building trustworthy systems means prioritizing safety over speed, fairness over profit, and accountability over convenience. Only then can AI serve as a reliable ally in mental health care.

Conclusion: A Cautious Path Forward

AI has enormous potential to expand access to mental health care, reduce barriers, and provide timely support. It can help detect early signs of distress, personalize treatment, and support clinicians with better data. For underserved populations, it may offer a lifeline where none exists.

But AI also brings risks—overreliance, privacy violations, emotional dependency, and cultural bias. These risks cannot be ignored. Without safeguards, they could harm the very people AI is meant to help.

The path forward is cautious optimism. AI can be part of the mental health system of the future, but only if guided by strong ethics, thoughtful regulation, and skilled professionals. The goal should not be replacing human care but strengthening it—making therapy more accessible, data more actionable, and support more continuous.

Mental health is one of the most human areas of healthcare. AI has a role to play, but the essence of care will always depend on people: therapists, caregivers, communities, and families. Technology can amplify support, but empathy, trust, and connection remain uniquely human.
