Advanced Prompt Engineering Techniques

Advanced prompt engineering helps you get more out of large language models (LLMs) such as GPT-4. Precise, structured prompts guide these models toward more relevant, accurate, and contextually appropriate responses. This matters most for complex tasks such as writing, coding, or problem-solving, where the model needs to deliver nuanced and detailed results.

For anyone looking to enhance their skills in AI, the AI Certification offers comprehensive training on the principles and practices of AI prompt engineering.

Chain-of-Thought (CoT) Prompting

Chain-of-Thought (CoT) prompting is a technique where you guide the model through a series of reasoning steps before arriving at a final answer. This is particularly useful for tasks that require logical deductions, complex problem-solving, or deep analysis.

For example, when asking the model to solve a math problem, instead of simply asking for the answer, you would prompt it to explain its reasoning step by step. This helps the model break down complex problems and often leads to more accurate, coherent responses.

CoT prompting is effective for:

- Complex decision-making tasks

- Problem-solving in areas like mathematics or logic

- Generating step-by-step explanations for technical subjects

By encouraging structured reasoning, CoT prompts help models provide more thorough and accurate outputs.
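As a rough illustration of the idea above, the Python sketch below wraps a question in step-by-step instructions and pulls the final answer out of a reply. The function names and the `Answer:` marker are assumptions made for this example, not part of any library, and the model call itself is left out.

```python
from typing import Optional


def build_cot_prompt(question: str) -> str:
    """Wrap a question in instructions that ask for reasoning before the answer."""
    return (
        "Solve the problem below. Think through it step by step, "
        "numbering each step, then give the result on a final line "
        "that starts with 'Answer:'.\n\n"
        f"Problem: {question}"
    )


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' marker, or None if it is missing."""
    for line in reversed(response.splitlines()):
        if line.strip().lower().startswith("answer:"):
            return line.split(":", 1)[1].strip()
    return None


prompt = build_cot_prompt(
    "A train travels 60 km in 1.5 hours. What is its average speed?"
)
print(prompt)

# A hand-written reply standing in for real model output.
sample_reply = "1. Speed = distance / time.\n2. 60 / 1.5 = 40.\nAnswer: 40 km/h"
print(extract_final_answer(sample_reply))
```

Asking for a fixed marker on the last line is one possible design choice: it keeps the reasoning visible while making the final answer easy to parse.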

Few-Shot Prompting

Few-Shot prompting involves providing the model with a few examples of the desired output within the prompt. This helps the model understand the context and format required, making it easier to generate responses that align with your expectations.

For example, if you want the model to generate product descriptions, you can include one or two sample descriptions to guide the model’s output. This encourages the model to mirror the style, tone, and structure that you need.

Few-Shot prompting is beneficial for:

- Tasks that require consistency across responses

- Generating content in a specific format or style

- Adapting the model to a new task with only a few examples in the prompt, without retraining it

This technique helps guide the model to produce more relevant and tailored responses based on the given examples.
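The product-description case above could be assembled along these lines. This is a minimal sketch: `build_few_shot_prompt`, the `Product:`/`Description:` labels, and the sample products are all invented for illustration.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], product: str) -> str:
    """Place worked examples before the new item so the model can copy the format."""
    parts = ["Write a product description in the same style as the examples.", ""]
    for name, description in examples:
        parts.append(f"Product: {name}")
        parts.append(f"Description: {description}")
        parts.append("")
    # The final entry is left open for the model to complete.
    parts.append(f"Product: {product}")
    parts.append("Description:")
    return "\n".join(parts)


# Hypothetical examples; real ones would come from your own catalog.
EXAMPLES = [
    ("Trail Mug", "A dented-proof steel mug that keeps coffee hot on cold mornings."),
    ("Pocket Lamp", "A palm-sized light that clips on and lasts all weekend."),
]

few_shot_prompt = build_few_shot_prompt(EXAMPLES, "Canvas Daypack")
print(few_shot_prompt)
```

Ending the prompt on an open `Description:` line nudges the model to continue the established pattern rather than comment on it.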

Self-Consistency

Self-Consistency is an advanced technique that involves sampling several responses to the same prompt, usually each with its own reasoning path, and selecting the final answer that appears most often. It is most useful for tasks that have a single correct answer that can be reached in more than one way, such as arithmetic or logic questions.

For example, if you ask the model a multi-step word problem five times and four of the responses arrive at the same result, choosing that majority answer is usually more reliable than trusting any single response.

Self-Consistency works well for:

- Questions with one checkable answer that can be reached through different reasoning paths

- Tasks requiring reliable and accurate answers

- Reducing variability and errors in responses

This technique tends to make results more dependable, especially on complex multi-step problems, at the cost of extra model calls.
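The voting step described above can be sketched in a few lines of Python. Here `sample` is a hypothetical callable standing in for one model call that returns a final answer, and the stub sampler simply replays canned answers so the example runs without any API.

```python
import itertools
from collections import Counter
from typing import Callable, List, Tuple


def self_consistent_answer(
    sample: Callable[[str], str], prompt: str, n: int = 5
) -> Tuple[str, float]:
    """Sample n answers and return the most common one with its vote share."""
    answers = [sample(prompt).strip() for _ in range(n)]
    answer, votes = Counter(answers).most_common(1)[0]
    return answer, votes / n


def make_stub_sampler(canned: List[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for a model call: replays canned final answers."""
    replies = itertools.cycle(canned)
    return lambda prompt: next(replies)


sampler = make_stub_sampler(["40", "40", "45", "40", "38"])
answer, agreement = self_consistent_answer(sampler, "What is 60 / 1.5?", n=5)
print(answer, agreement)
```

In practice the sampled responses would need to differ from one another (for example through a non-zero sampling temperature), and the final answers would need normalizing before they are counted.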

Tree-of-Thought (ToT)

Tree-of-Thought (ToT) extends the Chain-of-Thought approach by evaluating multiple reasoning paths at once. Instead of following a single line of reasoning, the model explores different possible solutions or outcomes before arriving at a final decision.

For instance, in a complex scenario, you can ask the model to consider various solutions, compare their pros and cons, and then select the most appropriate course of action. This approach makes it more likely that the model arrives at a well-rounded conclusion, because weak options are compared and discarded rather than followed by default.

ToT is effective for:

- Evaluating multiple solutions or outcomes to a problem

- Handling complex decision-making tasks

- Ensuring the model considers various perspectives before reaching a conclusion

By exploring different reasoning paths, ToT allows the model to provide a more balanced and thoughtful answer.
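One common way to organize this kind of exploration is a small beam search over partial solutions. The sketch below is an assumption-laden toy, not a reference implementation: `propose` and `score` would normally be model calls that suggest and rate next thoughts, but here they are plain functions on a made-up arithmetic puzzle (reach 10 from 1 using "+3" and "*2").

```python
from typing import Callable, List, Tuple

State = Tuple[str, ...]  # a partial solution: the sequence of steps taken so far


def tree_of_thought_search(
    root: State,
    propose: Callable[[State], List[State]],
    score: Callable[[State], float],
    beam_width: int = 2,
    depth: int = 3,
) -> State:
    """Expand several branches per level and keep only the best-scoring ones."""
    frontier = [root]
    for _ in range(depth):
        candidates = [child for state in frontier for child in propose(state)]
        if not candidates:
            break
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=score)


# Toy stand-ins for the model: every name below is hypothetical.
OPS = {"+3": lambda x: x + 3, "*2": lambda x: x * 2}
START, TARGET = 1, 10


def evaluate(state: State) -> int:
    """Apply the steps in order, starting from START."""
    value = START
    for op in state:
        value = OPS[op](value)
    return value


def propose(state: State) -> List[State]:
    """Branch by appending each available step."""
    return [state + (op,) for op in OPS]


def score(state: State) -> float:
    """Higher is better: negative distance from the target."""
    return -abs(TARGET - evaluate(state))


best = tree_of_thought_search((), propose, score)
print(best, evaluate(best))
```

In this toy, the branch that looks best after two steps (reaching 8) cannot hit the target on the third step, while the runner-up (reaching 7) can, which is why keeping more than one branch alive matters.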

Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) combines the power of information retrieval with generative AI. Instead of relying solely on the model’s internal knowledge, RAG allows the model to retrieve relevant information from external sources, such as databases, documents, or online resources, to inform its output.

For example, if you ask the model a question that requires up-to-date knowledge, RAG enables it to pull in relevant information from trusted sources and generate a response based on that data. This technique is well suited to knowledge-intensive tasks, and it helps keep the model’s responses informed and accurate as long as the retrieved sources are themselves reliable.

RAG is useful for:

- Knowledge-based tasks that require up-to-date or specialized information

- Providing responses that are grounded in, and traceable to, source documents

- Working around gaps and cutoff dates in the model’s training data

By integrating external knowledge with generative responses, RAG helps models provide more contextually grounded and accurate answers.
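The retrieve-then-generate flow can be sketched as follows. This is a deliberately simplified illustration: the word-overlap ranking stands in for a real search index or embedding store, and the documents, question, and function names are all hypothetical.

```python
import re
from typing import List


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Rank documents by how many words they share with the query; keep the top k."""
    query_words = tokenize(query)
    ranked = sorted(
        documents, key=lambda doc: len(query_words & tokenize(doc)), reverse=True
    )
    return ranked[:k]


def build_rag_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Put the retrieved passages in front of the question as numbered sources."""
    sources = retrieve(query, documents, k)
    context = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(sources, start=1))
    return (
        "Answer the question using only the sources below. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base for the example.
DOCUMENTS = [
    "The return window for online orders is 30 days from delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards cannot be exchanged for cash.",
]
QUESTION = "How many days is the return window?"

prompt = build_rag_prompt(QUESTION, DOCUMENTS, k=2)
print(prompt)
```

Numbering the sources is one way to let the model cite which passage supports its answer; the resulting prompt would then be sent to the model in place of the bare question.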

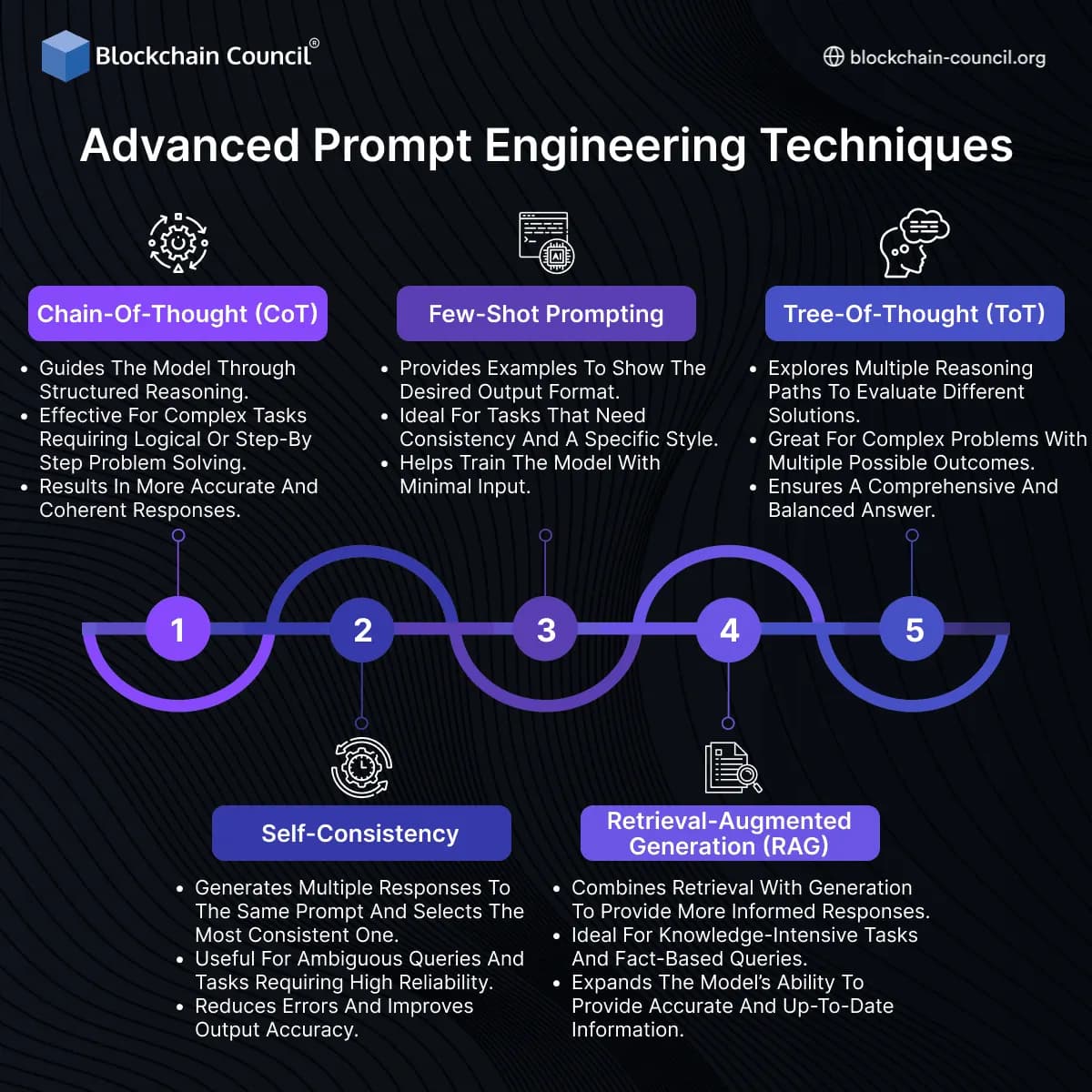

Summary of Advanced Prompt Engineering Techniques

Chain-of-Thought (CoT)

- Guides the model through structured reasoning.

- Effective for complex tasks requiring logical or step-by-step problem solving.

- Results in more accurate and coherent responses.

Few-Shot Prompting

- Provides examples to show the desired output format.

- Ideal for tasks that need consistency and a specific style.

- Helps the model adapt to a task from a few in-prompt examples, without retraining.

Self-Consistency

- Generates multiple responses to the same prompt and selects the most consistent one.

- Useful for questions with a single checkable answer and tasks requiring high reliability.

- Reduces errors and improves output accuracy.

Tree-of-Thought (ToT)

- Explores multiple reasoning paths to evaluate different solutions.

- Great for complex problems with multiple possible outcomes.

- Encourages a more comprehensive and balanced answer.

Retrieval-Augmented Generation (RAG)

- Combines retrieval with generation to provide more informed responses.

- Ideal for knowledge-intensive tasks and fact-based queries.

- Expands the model’s ability to provide accurate and up-to-date information.

Conclusion

Advanced prompt engineering techniques help users get more out of language models like GPT-4. Strategies such as Chain-of-Thought prompting, Few-Shot prompting, Self-Consistency, Tree-of-Thought, and Retrieval-Augmented Generation can improve the precision, relevance, and reliability of AI responses, which in turn supports better decision-making, problem-solving, and content generation. Additionally, professionals interested in data management can explore the Data Science Certification, which provides key insights into data preparation for AI. For those aiming to incorporate AI into business strategies, the Marketing and Business Certification helps you understand how to align AI with organizational goals.
