Shadow AI

What is Shadow AI?

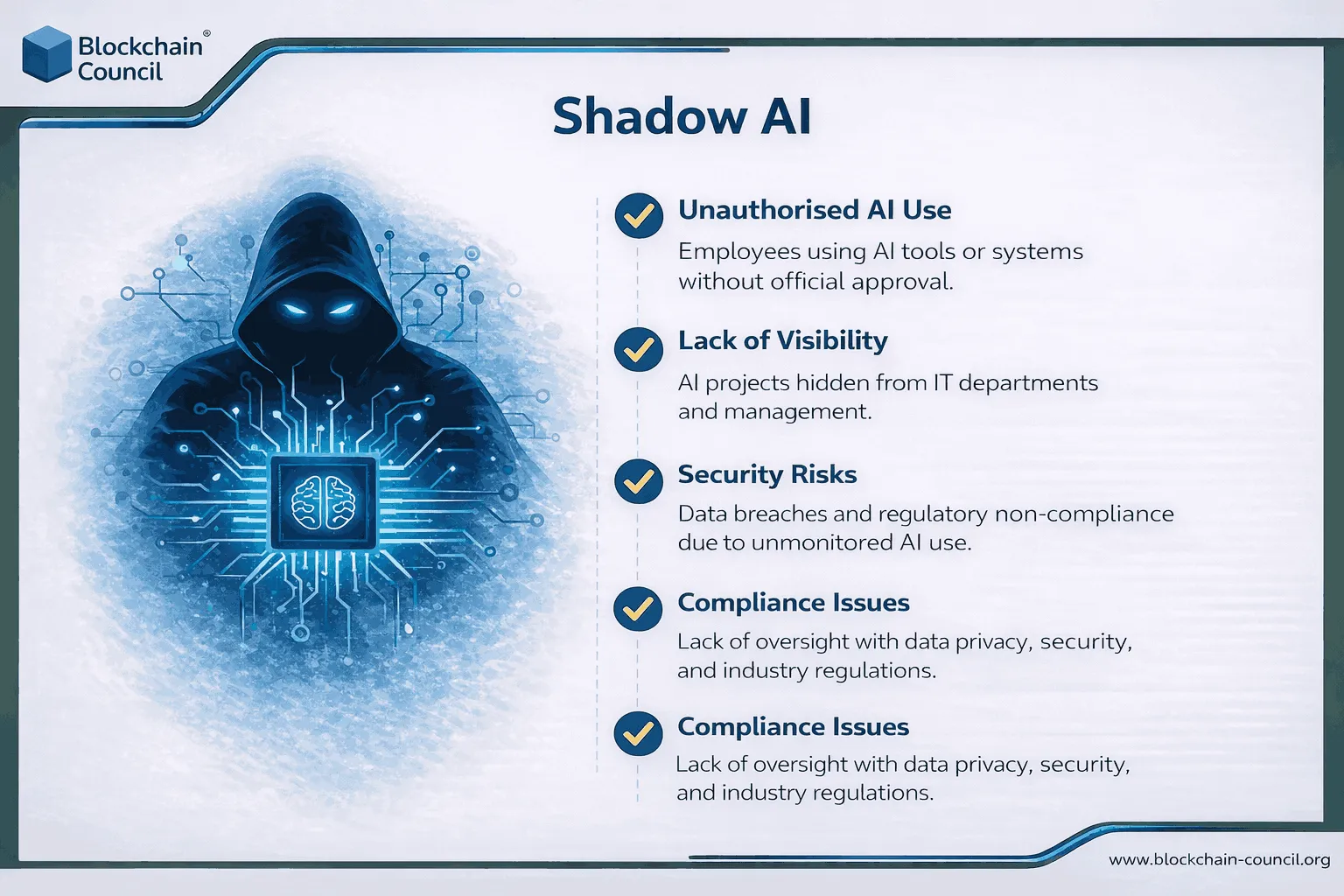

Shadow AI is when employees use AI tools for work without the company approving them or being able to see what’s happening.

- No IT review.

- No security checks.

- No compliance sign-off.

- No monitoring.

If that sounds familiar, it’s because it’s the same idea as shadow IT—people using unapproved apps to get work done—but AI makes it easier and riskier. A single browser tab can take in sensitive text, summarize internal files, write code from private repos, or connect to email and cloud drives through plugins and agents.

Most people using shadow AI aren’t trying to break rules. They’re trying to move faster. The problem is the company often has no idea:

- Which tools are being used

- What data is being pasted or uploaded

- Whether the tool keeps or trains on that data

- What permissions were granted through connectors

- What logs exist if something goes wrong

That’s why shadow AI isn’t “an AI trend.” It’s a visibility and control problem.

Risk Of Shadow AI In 2026

AI use at work is already widespread, and a lot of it is happening outside official tools.

Microsoft and LinkedIn’s 2024 Work Trend Index reported:

- 75% of global knowledge workers use AI

- 78% of AI users bring their own tools to work (BYOAI)

When people bring their own tools, companies lose the normal safeguards that come with approved software: vendor review, access controls, audit logs, and data rules.

Security teams are also treating shadow AI as a likely breach path. A Gartner prediction widely reported in late 2025 says 40% of enterprises could face security or compliance breaches tied to shadow AI by 2030. That prediction matters because it reflects what security leaders are seeing now: unsanctioned tools are already inside many organizations.

In India, the urgency can be even higher. Microsoft’s reporting in 2024 showed very high AI use among Indian knowledge workers, and 2025 reporting points to leaders planning rapid adoption of AI agents in the next 12–18 months. High usage plus fast agent rollout usually creates tool sprawl unless governance and approved options keep pace.

Low-Risk Vs High-Risk Usage

Many leaders still think shadow AI means someone asking a chatbot to rewrite an email. That’s the lowest-risk version.

The higher-risk version is when people use AI with real company inputs and real permissions:

- Uploading contracts, customer lists, or financial files to “summarize”

- Pasting code, logs, or incident details into a consumer tool

- Installing browser extensions that can read web pages, email, or CRM screens

- Connecting plugins to Google Drive, Microsoft 365, Slack, Jira, Salesforce

- Giving an agent permission to send emails, update tickets, or move files

At that point, AI becomes a data pipe. And because it’s “just a tool,” many employees don’t treat it with the same caution as sending data to a third party.

Main Risk Areas

Data Leakage And IP Loss

One prompt can leak information you can’t easily retrieve:

- Source code and internal libraries

- Customer data and PII

- Contracts and pricing details

- Credentials, keys, and secrets

- Strategy docs, roadmaps, and plans

If the tool isn’t approved, you may have no audit trail and no way to confirm what happened to that data.

Compliance And Regulatory Exposure

If regulated data ends up in an unapproved system, you can lose:

- Required audit trails

- Data residency guarantees

- Retention and deletion controls

- Vendor commitments you rely on in contracts

Even if the employee only wanted a summary, the system still processed the data.

Legal And Confidentiality Issues

In legal or sensitive business contexts, using public tools for document work can create confidentiality problems. It can also complicate how protected information is handled, especially if there are no controls or logging.

Security Blind Spots

Traditional security tools often don’t capture:

- What users paste into a browser-based AI tool

- What a plugin reads or writes

- What an agent does after it’s granted access

That’s why shadow AI is increasingly framed as an audit issue: you need evidence, controls, and repeatable monitoring.

Quality And Accountability Problems

Shadow AI can also create business risk even with no data leak:

- People act on outputs without checking

- There’s no clear source for the answer

- Results can’t be reproduced later

- Nobody can explain what tool was used, or why

That’s how bad decisions get made quietly—and then become expensive later.

How Shadow AI Works

Here are patterns that show up across companies:

- Personal AI accounts used for work prompts

- Browser extensions that can read pages, email, or CRM screens

- Teams uploading customer lists or contracts for summaries

- Developers using unapproved coding assistants on private repos

- Departments buying niche AI SaaS on a card, skipping vendor review

- Early agent experiments given wide access to email, files, and ticketing systems

The common thread: people choose the fastest tool that works.

How To Detect Shadow AI?

You don’t have to guess. Companies use a few practical signals:

- CASB/SSE logs for traffic to popular AI domains and unknown AI SaaS

- DLP alerts tied to uploads and prompt-like copy/paste flows

- SSO discovery for unsanctioned apps, OAuth grants, and excessive scopes

- Endpoint and browser telemetry for extensions and data uploads

- Expense and procurement scans for AI tools paid outside standard channels

If the company can’t see AI usage, it can’t manage the risk. Detection is step one.
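As a rough illustration of the first signal, the sketch below counts requests to AI domains per user from proxy-style log records. The watchlist, the `SANCTIONED` set, and the record fields (`user`, `host`) are assumptions made for the example, not a real CASB/SSE schema.

```python
from collections import Counter
from typing import Iterable

# Hypothetical watchlist; a real one would come from a CASB/SSE category feed.
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}
# Hypothetical: the AI domains this company has approved.
SANCTIONED = {"gemini.google.com"}


def is_watched(host: str, watchlist: set[str]) -> bool:
    """True if host equals a watchlist domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in watchlist)


def unsanctioned_ai_usage(records: Iterable[dict]) -> Counter:
    """Count requests per (user, host) to AI domains that are not sanctioned."""
    hits: Counter = Counter()
    for rec in records:
        host = rec.get("host", "")
        if is_watched(host, AI_DOMAINS) and not is_watched(host, SANCTIONED):
            hits[(rec.get("user", "unknown"), host.lower())] += 1
    return hits


# Made-up log records for illustration.
sample_log = [
    {"user": "asha", "host": "chatgpt.com"},
    {"user": "asha", "host": "chatgpt.com"},
    {"user": "ben", "host": "gemini.google.com"},
    {"user": "ben", "host": "api.claude.ai"},
    {"user": "cara", "host": "example.com"},
]

for (user, host), count in unsanctioned_ai_usage(sample_log).most_common():
    print(f"{user} -> {host}: {count} request(s)")
```

A report like this only shows that traffic exists. It says nothing about what was pasted or uploaded, which is why it is usually paired with the DLP and OAuth signals listed above.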

Tips To Control Shadow AI In 2026

The goal isn’t to ban AI. The goal is to make safe usage easy and unsafe usage hard.

Use Approved Tools

Blanket bans push people to work around you. A better approach is to offer tools that meet real needs:

- Approved chat assistant and coding assistant

- SSO and simple onboarding

- Clear rules for what’s allowed

- A short “safe prompts” guide by role

Guardrails On Sensitive Data

Policies only work when backed by controls:

- Data classification connected to DLP

- Blocking or warnings for secrets, keys, payment card (PCI) data, certain PII, and regulated IDs

- Restricting uploads of crown-jewel docs and source code to external tools
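To make the blocking-or-warning idea concrete, here is a minimal sketch of a pre-send prompt check. The patterns, the `BLOCK` set, and the block/warn split are illustrative assumptions. Production DLP rules are far broader and tuned against false positives.

```python
import re

# Illustrative patterns only, not a complete DLP rule set.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}
# 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
# Assumption: these finding types block the prompt; anything else only warns.
BLOCK = {"aws_access_key_id", "private_key_block", "payment_card_number"}


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to cut false positives on card-like numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def scan_prompt(text: str) -> list[str]:
    """Return the names of sensitive-data patterns found in the text."""
    findings = [name for name, rx in PATTERNS.items() if rx.search(text)]
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("payment_card_number")
            break
    return findings


def decide(text: str) -> tuple[str, list[str]]:
    """Map findings to an action: block, warn, or allow."""
    findings = scan_prompt(text)
    if BLOCK & set(findings):
        return "block", findings
    if findings:
        return "warn", findings
    return "allow", findings


# Uses the AWS documentation example key and a standard test card number.
print(decide("Debug this: key AKIAIOSFODNN7EXAMPLE, card 4111 1111 1111 1111"))
print(decide("Reply to priya@example.com about the launch"))
print(decide("Rewrite this paragraph to be shorter"))
```

Warning rather than blocking on lower-risk findings keeps the approved tool usable, which matters if the goal is to keep people off unapproved ones.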

Control Plugins, Connectors, And Agents

Agents increase risk because they can act:

- Approval for OAuth grants and high-risk scopes

- Least-privilege templates for common agent use cases

- Logging for prompts, tool calls, actions, and data touched

- Periodic access reviews and scope cleanup
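One way to picture least-privilege templates and scope cleanup is a check that compares each OAuth grant against the template for its use case. In this sketch the use-case names, the templates, and the choice of which scopes count as high-risk are assumptions. The scope strings follow Microsoft Graph permission naming.

```python
from dataclasses import dataclass

# Hypothetical least-privilege templates, keyed by agent use case.
TEMPLATES = {
    "meeting-summarizer": {"User.Read", "Calendars.Read"},
    "ticket-triage": {"User.Read"},
}
# Assumption: scopes this organization treats as high-risk (can send or modify).
HIGH_RISK = {"Mail.Send", "Mail.ReadWrite", "Files.ReadWrite.All"}


@dataclass(frozen=True)
class Grant:
    app: str
    use_case: str
    scopes: frozenset[str]


def review(grant: Grant) -> dict:
    """Compare a grant with its template and flag excess or high-risk scopes."""
    allowed = TEMPLATES.get(grant.use_case, set())
    excess = grant.scopes - allowed
    high = excess & HIGH_RISK
    if high:
        status = "needs_approval"
    elif excess:
        status = "trim_scopes"
    else:
        status = "ok"
    return {
        "app": grant.app,
        "status": status,
        "excess": sorted(excess),
        "high_risk": sorted(high),
    }


# Made-up grants for illustration.
grants = [
    Grant("notes-bot", "meeting-summarizer",
          frozenset({"User.Read", "Calendars.Read", "Mail.Send"})),
    Grant("triage-bot", "ticket-triage",
          frozenset({"User.Read", "Calendars.Read"})),
    Grant("summary-bot", "meeting-summarizer",
          frozenset({"User.Read"})),
]

for g in grants:
    print(review(g))
```

A grant for an unknown use case has no template, so every scope on it counts as excess. That default pushes new agent experiments through review instead of letting them pass silently.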

Do Vendor Review With AI-Specific Questions

Basic vendor review often misses the key points. Ask:

- Where is data stored and for how long?

- Is training on your data off by default and committed in writing?

- Which subprocessors touch data?

- What’s the incident response path and timeline?

- Do you get audit rights and notice of major changes?

Use A Recognized Risk Structure

Many teams use the NIST AI Risk Management Framework to structure governance and controls. The value is clarity: who owns the risk, how it’s measured, and what the response plan is.


Good AI Policy

A good policy is short, clear, and enforceable. Many companies include:

- Approved tools list + a fast request path for new tools

- Prohibited data list (PII, credentials, unreleased financials, source code, legal matters)

- Rules for external sharing, retention, and citing AI output

- Human review required for customer-facing, legal, financial, and security decisions

- Mandatory training with refresh cycles

- Enforcement language: monitoring notice, escalation, exceptions

If requesting approval takes weeks, shadow usage will keep growing.
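A policy this short can also be expressed as data and checked automatically, for example in an internal request form or gateway. The sketch below assumes hypothetical tool names, data classes, and use-case labels. It illustrates the idea and is not a reference implementation.

```python
from dataclasses import dataclass


@dataclass
class AIPolicy:
    approved_tools: set[str]
    prohibited_data: set[str]
    human_review_uses: set[str]

    def evaluate(self, tool: str, data_classes: set[str], use_case: str) -> str:
        """Return a decision string for one proposed use of an AI tool."""
        if tool not in self.approved_tools:
            return "deny: tool not approved, use the request path"
        blocked = self.prohibited_data & data_classes
        if blocked:
            return "deny: prohibited data (" + ", ".join(sorted(blocked)) + ")"
        if use_case in self.human_review_uses:
            return "allow with human review"
        return "allow"


# All names below are hypothetical placeholders.
policy = AIPolicy(
    approved_tools={"corp-chat", "corp-code-assist"},
    prohibited_data={"pii", "credentials", "unreleased_financials",
                     "source_code", "legal_matters"},
    human_review_uses={"customer_facing", "legal", "financial", "security"},
)

print(policy.evaluate("free-web-chatbot", set(), "internal_draft"))
print(policy.evaluate("corp-chat", {"pii", "credentials"}, "internal_draft"))
print(policy.evaluate("corp-chat", {"public_docs"}, "customer_facing"))
print(policy.evaluate("corp-chat", {"public_docs"}, "internal_draft"))
```

Keeping the rules in one small structure makes exceptions visible: changing the policy means changing the data, and that change can be reviewed like any other.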

Future

In the next 6–18 months, expect three shifts:

- More shadow usage around agents because they automate real workflows

- More leadership attention because failures can become reportable incidents

- More “approved marketplaces” where companies offer curated tools plus monitoring

Can We Ban Shadow AI?

Shadow AI isn’t going away. People will use AI because it saves time. The question is whether your organization can see it, control it, and guide it.

The simplest way to reduce shadow AI is practical, not dramatic:

- Make the approved option easy and good enough

- Put strong controls around sensitive data

- Treat connectors and agents like high-risk access, not “just apps”

And because internal adoption is also a communication problem, not only a security problem, rollout plans and clear messaging matter.