Inside the Workflow of a Modern Tool-Using AI Agent

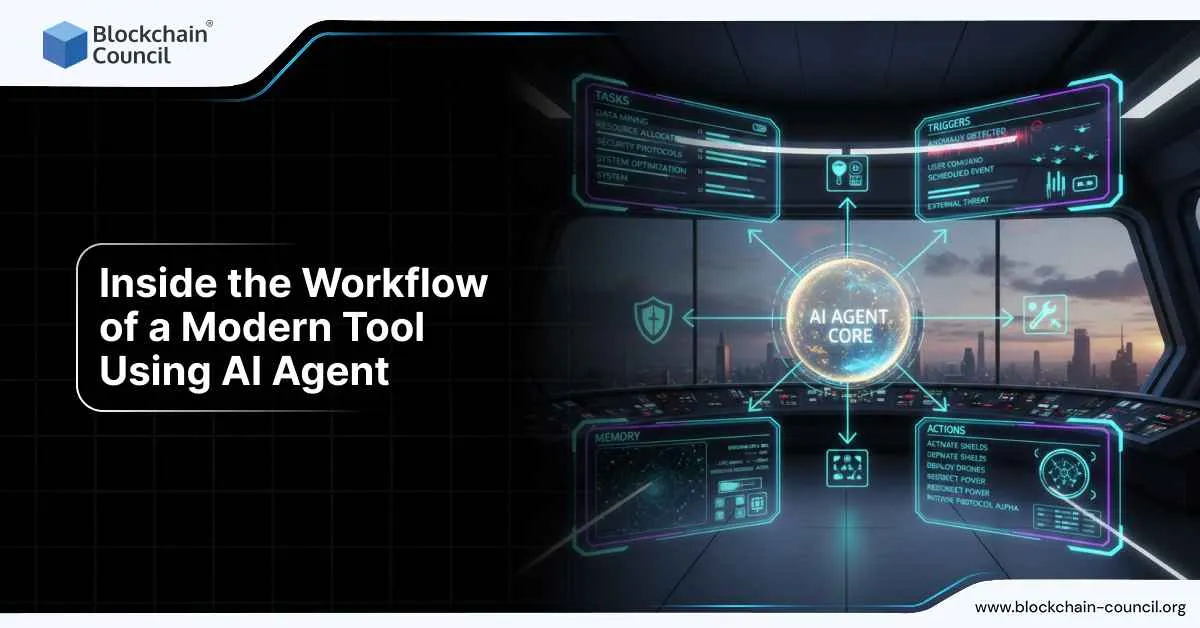

The workflow inside a modern tool-using AI agent is where the real shift in software design becomes visible. These tools are no longer built around single prompts or one-off automation. They operate as coordinated systems that plan, decide, act, recover from errors, and improve over time. From late 2024 through 2025, this architecture moved from research papers into production tools used in customer support, research, finance operations, developer tooling, and marketing platforms.

What makes these tools “modern” is not just the presence of an AI model, but the way the agent is embedded into a repeatable, observable, and governed workflow.

At the center of this evolution is applied artificial intelligence that can reason over goals, choose actions, and adapt based on outcomes. Professionals trying to understand or build these systems often start by grounding themselves in how modern AI systems actually work end to end, which is why structured learning such as an AI certification has become increasingly relevant for teams moving from experimentation to deployment.

Step One: Input and Goal Framing

Every modern AI agent workflow begins with structured intake. This step became formalized around 2024, when teams realized that vague user input leads to unstable agent behavior.

Instead of passing raw text directly to a model, modern tools convert input into:

- A clearly defined goal

- Constraints such as time, scope, or data access

- Success criteria that the agent can check against

For example, a research agent does not receive “analyze this market.” It receives a goal such as “produce a 1,000-word market brief that uses only verified data points updated after 30 December 2025 and makes no speculative claims.”

This framing step reduces hallucinations and wasted compute, because the agent has explicit criteria to check its output against instead of guessing what the user meant.
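As a minimal sketch of structured intake, the goal, constraints, and success criteria can be held in one object that the agent checks its output against. The `TaskSpec` class, its field names, and the two example criteria are illustrative assumptions, not part of any specific framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class TaskSpec:
    """Structured intake: a goal, its constraints, and checkable success criteria."""
    goal: str
    constraints: dict[str, object] = field(default_factory=dict)
    success_criteria: dict[str, Callable[[str], bool]] = field(default_factory=dict)

    def check(self, output: str) -> dict[str, bool]:
        """Run every success criterion against a candidate output."""
        return {name: test(output) for name, test in self.success_criteria.items()}


# Hypothetical spec for the market-brief example; the criteria are deliberately simple.
brief_spec = TaskSpec(
    goal="Produce a market brief with verified data points",
    constraints={"max_words": 1000, "allow_speculation": False},
    success_criteria={
        "within_word_limit": lambda text: len(text.split()) <= 1000,
        "cites_a_source": lambda text: "[source:" in text,
    },
)

draft = "Segment revenue grew 4% year over year [source: annual report]."
print(brief_spec.check(draft))
```

Keeping the criteria as plain callables means the same spec can be reused later to evaluate the final output of a run.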

Step Two: Planning and Task Decomposition

Once the goal is defined, the agent creates a plan. By 2025, most production systems had moved away from simple linear chains and adopted graph-based planning, in which tasks are nodes and dependencies are the edges between them.

In this stage, the agent:

- Breaks the goal into discrete tasks

- Orders tasks based on dependencies

- Identifies which steps require tools, retrieval, or human review

For example, a compliance agent may plan to retrieve documents first, analyze regulatory text second, and only then generate a summary. If any step fails, the plan allows the agent to retry or reroute instead of collapsing.

This planning layer is what separates agents from basic automation.
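The compliance example can be sketched as a small dependency graph that is executed in dependency order, with a retry when a step fails. This is only an illustration under stated assumptions: the task names, the `run_plan` helper, and the retry limit are hypothetical, and the ordering relies on Python's standard-library `graphlib.TopologicalSorter`.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on.
plan = {
    "retrieve_documents": set(),
    "analyze_regulatory_text": {"retrieve_documents"},
    "generate_summary": {"analyze_regulatory_text"},
}


def run_plan(plan, handlers, max_attempts=2):
    """Run tasks in dependency order, retrying a failed task before giving up."""
    completed = []
    for task in TopologicalSorter(plan).static_order():
        for attempt in range(1, max_attempts + 1):
            try:
                handlers[task]()
                completed.append(task)
                break
            except RuntimeError:
                if attempt == max_attempts:
                    raise  # out of retries: surface the failure to the caller
    return completed


# Hypothetical handlers; the first retrieval attempt fails to exercise the retry path.
attempts = {"retrieve_documents": 0}


def flaky_retrieve():
    attempts["retrieve_documents"] += 1
    if attempts["retrieve_documents"] == 1:
        raise RuntimeError("document store timed out")


handlers = {
    "retrieve_documents": flaky_retrieve,
    "analyze_regulatory_text": lambda: None,
    "generate_summary": lambda: None,
}

order = run_plan(plan, handlers)
print(order)
```

A real planner would also support rerouting to an alternative task, which this sketch leaves out to stay short.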

Step Three: Tool Selection and Execution

After planning, the agent selects tools. These tools are not generic plugins. They are tightly defined functions with schemas, permissions, and failure handling.

Common tools include:

- Internal databases and document stores

- Web search or licensed data feeds

- CRM, ticketing, or finance systems

- Code execution or testing environments

Modern tools enforce strict rules here. If a tool fails or returns incomplete data, the agent logs the failure and either retries or adjusts the plan. This behavior became standard after repeated production incidents in 2023 and 2024, where silent tool failures caused incorrect outputs.
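One way to picture a tightly defined tool is a wrapper that checks permissions and required arguments, logs every failure, and treats an incomplete result as a failure rather than passing it on silently. The `Tool` dataclass, `call_tool`, and the ticket lookup below are assumptions made for illustration; a real system would likely use a fuller schema validator.

```python
import logging
from dataclasses import dataclass
from typing import Any, Callable

logger = logging.getLogger("agent.tools")


class ToolError(Exception):
    """Raised when a tool cannot produce a complete result."""


@dataclass
class Tool:
    name: str
    required_args: set[str]      # input schema: argument names that must be supplied
    required_fields: set[str]    # output schema: keys the result must contain
    permission: str              # permission the caller must hold
    fn: Callable[..., dict[str, Any]]


def call_tool(tool, args, granted, max_attempts=2):
    """Call a tool with permission, input, and output checks plus logged retries."""
    if tool.permission not in granted:
        raise PermissionError(f"{tool.name} requires permission {tool.permission!r}")
    missing_args = tool.required_args - set(args)
    if missing_args:
        raise ToolError(f"{tool.name} is missing arguments: {sorted(missing_args)}")

    for attempt in range(1, max_attempts + 1):
        try:
            result = tool.fn(**args)
        except Exception as exc:
            logger.warning("%s raised on attempt %d: %s", tool.name, attempt, exc)
            continue
        missing_fields = tool.required_fields - set(result)
        if not missing_fields:
            return result
        logger.warning("%s returned incomplete data on attempt %d, missing %s",
                       tool.name, attempt, sorted(missing_fields))
    raise ToolError(f"{tool.name} failed after {max_attempts} attempts")


# Hypothetical tool whose first response is incomplete.
calls = {"count": 0}


def lookup_ticket(ticket_id):
    calls["count"] += 1
    if calls["count"] == 1:
        return {"ticket_id": ticket_id}  # "status" is missing
    return {"ticket_id": ticket_id, "status": "open"}


ticket_tool = Tool("lookup_ticket", {"ticket_id"}, {"ticket_id", "status"},
                   "tickets:read", lookup_ticket)
ticket = call_tool(ticket_tool, {"ticket_id": "T-1"}, granted={"tickets:read"})
print(ticket)
```

When retries are exhausted the wrapper raises instead of returning partial data, so the planner can adjust the plan explicitly.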

Building reliable tool layers requires strong systems thinking, API discipline, and observability. That foundation is often formalized through programs like a Tech Certification, especially for teams designing agent-driven platforms rather than one-off bots.

Step Four: Memory and Context Management

Memory is one of the most misunderstood parts of agent workflows. Modern tools distinguish clearly between:

- Short-term working memory for the current task

- Long-term memory for preferences, history, or recurring projects

By 2025, most production systems had stopped allowing agents to write freely to long-term memory. Instead, memory updates require explicit triggers or human approval. This change followed incidents where agents accumulated outdated or incorrect assumptions over time.
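A rough sketch of this split keeps working memory and long-term memory in separate stores and routes every long-term write through a pending queue that must be approved. The `AgentMemory` class and its method names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    working: dict[str, str] = field(default_factory=dict)    # cleared after each task
    long_term: dict[str, str] = field(default_factory=dict)  # persists across tasks
    pending: dict[str, str] = field(default_factory=dict)    # proposed, not yet approved

    def note(self, key: str, value: str) -> None:
        """Write to short-term working memory; no approval needed."""
        self.working[key] = value

    def propose(self, key: str, value: str) -> None:
        """Queue a long-term update instead of writing it directly."""
        self.pending[key] = value

    def approve(self, key: str) -> None:
        """Called by an explicit trigger or a human reviewer."""
        self.long_term[key] = self.pending.pop(key)

    def reject(self, key: str) -> None:
        self.pending.pop(key, None)

    def end_task(self) -> None:
        self.working.clear()


memory = AgentMemory()
memory.note("current_ticket", "T-1")
memory.propose("preferred_language", "German")
memory.propose("customer_tier", "enterprise")
memory.approve("preferred_language")   # reviewer accepts this update
memory.reject("customer_tier")         # reviewer rejects this one
memory.end_task()
print(memory.long_term)
```

Because the agent can only call `propose`, an incorrect assumption stays in the pending queue until someone accepts or discards it.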

Retrieval systems also became more disciplined. Agents are expected to fetch evidence first, then reason, then act. This order matters, especially in regulated or factual domains.
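The fetch, reason, act order can be made explicit in code so that no answer is produced without evidence behind it. In this sketch the reasoning step is a placeholder string rather than a model call, and the corpus, `keyword_retrieve`, and `answer_with_evidence` are invented for illustration.

```python
from typing import Callable


def answer_with_evidence(question: str, retrieve: Callable[[str], list[str]]) -> dict:
    # 1. Fetch evidence before any reasoning happens.
    evidence = retrieve(question)
    if not evidence:
        # Without evidence the agent escalates instead of answering.
        return {"action": "escalate", "answer": None, "evidence": []}
    # 2. Reason over the evidence (stand-in for a model call).
    answer = f"Based on {len(evidence)} source(s): {evidence[0]}"
    # 3. Act, returning the evidence alongside the answer for later review.
    return {"action": "respond", "answer": answer, "evidence": evidence}


# Hypothetical in-memory corpus used only for this illustration.
corpus = {"retention": ["Records must be kept for five years (policy doc 12)."]}


def keyword_retrieve(question: str) -> list[str]:
    return [passage
            for key, passages in corpus.items() if key in question.lower()
            for passage in passages]


grounded = answer_with_evidence("What is the retention period?", keyword_retrieve)
ungrounded = answer_with_evidence("What is the refund policy?", keyword_retrieve)
print(grounded["action"], ungrounded["action"])
```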

Step Five: Human-in-the-Loop Controls

A defining feature of the workflow inside a modern tool-using AI agent is the presence of interruption points. These are deliberate pauses where the agent must stop and request approval before proceeding.

Typical approval points include:

- Sending external communications

- Modifying customer records

- Executing financial actions

- Publishing content

This pattern emerged strongly in 2024, when enterprises realized that full autonomy without oversight created unacceptable risk. Modern agent tools are designed to pause, persist state, and resume cleanly once approval is given.
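Pause, persist, and resume can be sketched with a run state that is serialized when an action needs approval and reloaded once a decision arrives. The action names, the `RunState` fields, and the dictionary standing in for durable storage are all assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical action names mirroring the approval points listed above.
REQUIRES_APPROVAL = {"send_email", "modify_customer_record",
                     "execute_payment", "publish_content"}


@dataclass
class RunState:
    run_id: str
    pending_action: str
    payload: dict
    status: str = "running"


def step(state: RunState, store: dict) -> str:
    """Execute the pending action, or pause and persist if it needs approval."""
    if state.pending_action in REQUIRES_APPROVAL and state.status != "approved":
        state.status = "awaiting_approval"
        store[state.run_id] = json.dumps(asdict(state))  # persist before stopping
        return state.status
    state.status = "done"  # a real agent would perform the action here
    return state.status


def resume(run_id: str, store: dict, approved: bool) -> RunState:
    """Reload the persisted state and continue only if approval was granted."""
    state = RunState(**json.loads(store.pop(run_id)))
    if not approved:
        state.status = "rejected"
        return state
    state.status = "approved"
    step(state, store)
    return state


store = {}  # stand-in for a database or queue
run = RunState("run-42", "send_email", {"to": "customer@example.com"})
paused_status = step(run, store)
resumed = resume("run-42", store, approved=True)
print(paused_status, resumed.status)
```

Serializing the state to JSON is what lets the approval arrive minutes or days later, possibly in a different process.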

Step Six: Observability and Evaluation

No modern AI agent workflow runs blind. Tracing, logging, and evaluation are built in by default.

Each run records:

- The plan the agent created

- Tools it called and their outputs

- Decisions it made and why

- Final outcomes compared to success criteria

This data feeds continuous evaluation. Teams regularly replay agent runs, test new model versions, and measure failure rates. By late 2025, this practice had become standard across serious AI deployments.
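A trace record covering the four items above, plus a failure-rate calculation over a batch of runs, might look like the following. The `RunTrace` and `ToolCall` structures and the sample runs are hypothetical and stand in for whatever tracing backend a team actually uses.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    tool: str
    output: str
    ok: bool


@dataclass
class RunTrace:
    run_id: str
    plan: list[str]
    tool_calls: list[ToolCall] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)             # what was decided and why
    criteria_results: dict[str, bool] = field(default_factory=dict)

    @property
    def succeeded(self) -> bool:
        """A run succeeds only if it was evaluated and every criterion passed."""
        return bool(self.criteria_results) and all(self.criteria_results.values())


def failure_rate(traces: list[RunTrace]) -> float:
    if not traces:
        return 0.0
    return sum(not trace.succeeded for trace in traces) / len(traces)


# Two invented runs: one meets its criteria, one does not.
traces = [
    RunTrace(
        run_id="r1",
        plan=["retrieve", "summarize"],
        tool_calls=[ToolCall("search", "3 documents", ok=True)],
        decisions=["used internal store because the query named a customer"],
        criteria_results={"within_word_limit": True, "cites_a_source": True},
    ),
    RunTrace(
        run_id="r2",
        plan=["retrieve", "summarize"],
        tool_calls=[ToolCall("search", "", ok=False)],
        decisions=["retried search once, then summarized without sources"],
        criteria_results={"within_word_limit": True, "cites_a_source": False},
    ),
]

print(failure_rate(traces))
```

Storing the plan and decisions with each run is what makes replaying it against a new model version possible.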

How This Workflow Shows Up in Real Products

Customer support agents use this workflow to triage tickets, retrieve account history, draft responses, and escalate edge cases. Research tools use it to gather sources, verify facts, and generate reports. Marketing platforms use it to plan campaigns, generate variants, test performance, and refine messaging based on results.

In every case, the agent is not the product. The workflow is.

Why This Matters for Business Strategy

Understanding the workflow inside a modern tool-using AI agent is no longer just a technical concern. It shapes cost, risk, speed, and competitive advantage.

Organizations that treat agents as controlled systems outperform those that treat them as chatbots. Aligning agent capabilities with business outcomes requires clear ownership, governance, and measurement. This alignment is often guided by frameworks similar to those taught in a Marketing and Business Certification, where technology decisions are evaluated in terms of customer impact and long-term value.

Conclusion

The biggest change is not smarter models. It is better workflows.

Modern AI agent tools succeed because they plan before acting, retrieve before reasoning, pause before risk, and log everything. Inside the workflow of a modern tool-using AI agent, intelligence is no longer accidental. It is designed, constrained, and improved over time.