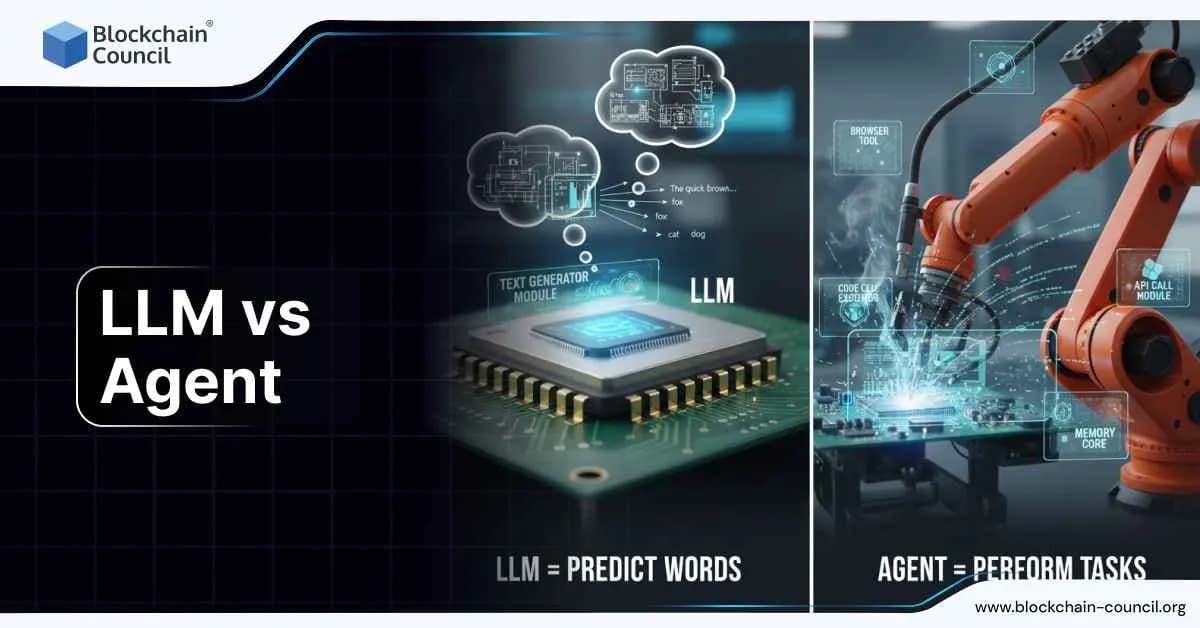

LLM vs Agent

AI systems are changing rapidly, and one of the most important shifts is the move from simple Large Language Models (LLMs) to full agents that can plan, decide, act and work toward goals. Understanding this shift is now essential for professionals building automation, product teams evaluating AI capabilities and organisations preparing for AI-powered workflows. Many people strengthen their foundation through programs like the AI Certification, because recognizing the difference between an LLM and an agent shapes how companies structure their next-generation tools.

What an LLM Actually Does

An LLM is a large neural network trained on massive text corpora to predict the next token. It learns patterns in language and can generate coherent text, reason through problems, classify information, summarize content and assist with coding. At its core an LLM is a predictive engine: it accepts text as input and produces text as output. On its own it does not act in the outside world, hold long-term memory or pursue goals independently.

Recent models offer larger context windows, multimodal inputs and better reasoning quality, but their fundamental nature remains the same. On their own they do not execute tools, call APIs, manage workflows or make autonomous decisions. A model can only emit output, including a request to use a tool, and another system must wrap it in additional logic to carry that request out.

This is why industry leaders describe LLMs as the core intelligence component in AI systems but not full actors.
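The point that an LLM only maps text to text, and that any action comes from the wrapping logic, can be illustrated with a minimal sketch. Everything here is hypothetical: `fake_llm` is a stub standing in for a real model call, `get_weather` is an invented tool, and the `CALL get_weather:` reply format is an assumption made for this example rather than any vendor's protocol.

```python
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: text in, text out, no side effects."""
    if "weather" in prompt.lower():
        # The model can only *ask* for a tool by emitting text.
        return "CALL get_weather: Paris"
    return "Paris is the capital of France."


def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would query an external service."""
    return f"Sunny in {city}"


def wrapped_call(llm: Callable[[str], str], prompt: str) -> str:
    """The wrapper, not the model, decides whether an action is executed."""
    reply = llm(prompt)
    prefix = "CALL get_weather:"
    if reply.startswith(prefix):
        city = reply[len(prefix):].strip()
        return get_weather(city)
    return reply


print(fake_llm("What is the weather?"))                # raw model text
print(wrapped_call(fake_llm, "What is the weather?"))  # wrapper ran the tool
```

Called directly, the stub model only returns a string describing the tool it wants. The tool runs only when the surrounding code parses that string and chooses to act on it.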

What an AI Agent Is

An agent is a complete system built around an LLM. It includes planning, memory, tool use and decision making. Unlike an LLM, an agent interacts with the environment, runs tasks over multiple steps and adjusts actions based on outcomes. Major companies define agents as software entities that use AI to pursue goals with some level of autonomy. Agents can write and run code, retrieve data, call APIs, search the web, update documents, analyze logs and operate inside business workflows.

Several sources describe agents as having four core elements:

- Goal or task: Agents receive instructions at a higher level than simple prompts, for example "automate monthly reporting", "clean thousands of rows of data" or "process customer cases end to end".

- Planning: The agent breaks the task into steps, evaluates what information is needed, chooses tools and adjusts the plan as new results come in.

- Tool use: Agents call APIs, operate software systems, run scripts, browse sources or interact with databases.

- Memory and state: Agents store intermediate results, past actions and session information. This allows them to reason across long workflows.

This combination is what shifts AI from a passive text generator to an active worker.
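The four elements above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not a production design: the `Agent` class, its scripted `plan` method and the `fetch` and `summarize` tools are all hypothetical, and a real agent would ask an LLM to choose the next step instead of following a fixed script.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    goal: str                                   # goal or task
    tools: dict[str, Callable[[str], str]]      # tool use
    memory: list[tuple[str, str]] = field(default_factory=list)  # (action, result)

    def plan(self) -> Optional[tuple[str, str]]:
        """Hypothetical planner: picks the next step from the goal and memory.
        A real agent would prompt an LLM here; this stub follows a fixed script."""
        done = {action for action, _ in self.memory}
        if "fetch" not in done:
            return ("fetch", self.goal)
        if "summarize" not in done:
            return ("summarize", self.memory[-1][1])
        return None  # nothing left to do

    def run(self, max_steps: int = 5) -> str:
        for _ in range(max_steps):
            step = self.plan()
            if step is None:
                break
            action, arg = step
            result = self.tools[action](arg)       # act on the environment
            self.memory.append((action, result))   # store intermediate state
        return self.memory[-1][1] if self.memory else ""


tools = {
    "fetch": lambda topic: f"raw data about {topic}",
    "summarize": lambda text: f"summary of: {text}",
}
agent = Agent(goal="monthly sales", tools=tools)
final = agent.run()
print(final)
```

The loop re-plans after every step using what is stored in `memory`, which is the part a single LLM call does not provide.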

Key Differences Between LLMs and Agents

Autonomy

LLMs respond only when asked.

Agents continue working until the task is complete.

Environment interaction

LLMs cannot change files, run code or access databases on their own.

Agents act through tool calls and external interfaces.

Memory

LLMs forget everything once the context window resets.

Agents maintain memory across steps or even across sessions.

Control flow

LLMs only generate output.

Agents decide which actions to take next and whether to revise earlier steps.

Reliability

LLMs can hallucinate or drift without grounding.

Agents can incorporate verification loops, citations, fact-checking or multi-step reasoning structures.
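A verification loop of the kind mentioned under reliability might look like the following sketch. The function name `generate_with_verification` and both stubs are hypothetical: `flaky_generate` imitates a model that gets the answer wrong on its first attempt, and `check_sum` stands in for whatever grounding check a real system would use.

```python
from typing import Callable


def generate_with_verification(
    generate: Callable[[str, int], str],
    verify: Callable[[str], bool],
    prompt: str,
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Retry generation until the verifier accepts, or give up after max_attempts."""
    for attempt in range(1, max_attempts + 1):
        answer = generate(prompt, attempt)
        if verify(answer):
            return answer, attempt
    raise ValueError(f"no verified answer after {max_attempts} attempts")


def flaky_generate(prompt: str, attempt: int) -> str:
    """Hypothetical model stub: wrong on the first attempt, right afterwards."""
    return "4" if attempt >= 2 else "5"


def check_sum(answer: str) -> bool:
    """Hypothetical verifier: checks the answer against an independent computation."""
    return answer.strip() == str(2 + 2)


answer, attempts = generate_with_verification(flaky_generate, check_sum, "What is 2 + 2?")
print(answer, attempts)
```

The verifier is deliberately independent of the generator. A check that simply asks the same model whether it was right offers much weaker grounding.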

The Industry Shift Toward Agentic Systems

Between late 2024 and early 2025, major AI labs announced new agent-focused platforms. OpenAI, Anthropic, GitHub, Google and others have built frameworks that allow developers to define tools, workflows and multi-step plans around models. The rise of agent conventions like AGENTS.md and of tool-calling protocols has encouraged companies to treat AI not as an isolated chat interface but as an autonomous digital teammate.

This shift is driven by two trends:

- Businesses want automation, not just answers.

Companies do not want models that only reply to questions. They want models that submit forms, update CRMs, resolve tickets, analyze data, run checks and close tasks.

- Models now support longer contexts and richer reasoning.

Larger context windows allow agents to keep more information in view at once. Better reasoning improves planning accuracy.

These improvements change the economics of software automation.

Why This Matters for Technical Teams

Technical teams adopting agentic systems need a deeper understanding of architecture, data flow and system-level design. Many engineers and developers expand their expertise through programs like the Tech Certification, since successful agent systems require:

- high-quality tool definitions

- careful memory management

- safe execution environments

- structured workflows

- protective rules to avoid unintended actions

Teams also need to evaluate failure modes, such as incorrect planning loops, tool misuse or infinite task cycling. These concerns largely do not arise in simple LLM chat deployments, where the model never executes anything.
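The protective rules and failure modes above can be made concrete with a small guard around tool execution. This is a sketch under assumptions: `run_guarded`, `GuardrailError` and the limits chosen are hypothetical, and the planned steps are passed in as plain `(tool, argument)` pairs, whereas a real agent would produce them one at a time.

```python
from typing import Callable, Iterable


class GuardrailError(RuntimeError):
    """Raised when a planned step violates a protective rule."""


def run_guarded(
    steps: Iterable[tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 10,
    max_repeats: int = 2,
) -> list[str]:
    seen: dict[tuple[str, str], int] = {}
    results: list[str] = []
    for count, (tool, arg) in enumerate(steps, start=1):
        if count > max_steps:
            # Guards against infinite task cycling.
            raise GuardrailError("step budget exceeded")
        if tool not in tools:
            # Only explicitly registered tools may run (tool misuse).
            raise GuardrailError(f"tool not allowed: {tool}")
        seen[(tool, arg)] = seen.get((tool, arg), 0) + 1
        if seen[(tool, arg)] > max_repeats:
            # The same call over and over suggests a stuck planning loop.
            raise GuardrailError(f"repeated call detected: {tool}({arg!r})")
        results.append(tools[tool](arg))
    return results


allowed_tools = {"lookup": lambda query: query.upper()}
print(run_guarded([("lookup", "a"), ("lookup", "b")], allowed_tools))
```

Each check fails loudly instead of silently skipping the step, so a misbehaving plan stops before it can cause further side effects.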

When LLMs Are Enough

Not every task needs an agent. LLM-only systems remain ideal for:

- writing assistance

- summarization

- translation

- tutoring

- document rewriting

- brainstorming

- code explanation

- structured Q&A

- single-step reasoning tasks

If the task needs no actions and no multi-step planning, an LLM is the simpler and cheaper solution.

When Agents Become Necessary

Agents outperform LLMs when tasks include:

- multi-step workflows

- tool calls

- dynamic decision making

- data retrieval from external sources

- long-horizon planning

- repetitive or large-volume tasks

- interaction with files, systems or APIs

Examples include finance report generation, research automation, CRM updates, helpdesk resolution, compliance checks and operational analytics.
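The choice between the two can be reduced to a rough rule of thumb, sketched below. The `TaskProfile` fields and the `choose_system` function are hypothetical names introduced for illustration, and the rule simply restates the criteria from the two lists above. It is not a complete decision procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    """Hypothetical description of a task; the fields mirror the criteria above."""
    needs_tools: bool = False     # files, systems or API calls
    multi_step: bool = False      # workflow or long-horizon planning
    external_data: bool = False   # retrieval from outside sources


def choose_system(task: TaskProfile) -> str:
    """Return 'agent' if the task needs actions or multi-step planning, else 'llm'."""
    if task.needs_tools or task.multi_step or task.external_data:
        return "agent"
    return "llm"


print(choose_system(TaskProfile()))                                  # e.g. summarization
print(choose_system(TaskProfile(needs_tools=True, multi_step=True)))  # e.g. CRM updates
```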

LLM vs Agent

| Capability | LLM | Agent |
| --- | --- | --- |
| Autonomy | Responds to prompt only | Works toward a goal with multi-step action |
| Environment access | None, text only | APIs, files, databases, software systems |
| Memory | Limited to context window | Long-lived task or session memory |
| Planning | Single shot | Multi-step planning and adjustment |
| Use cases | Writing, Q&A, summaries | Automation, workflows, tool execution |

What Businesses Should Prepare For

The movement from LLMs to agents is not a minor change. It reshapes how work is done inside companies. Departments such as operations, finance, HR, customer support and marketing will adopt agent-based automation for multi-step tasks that once required human supervision. This transition requires process redesign, workforce training and new governance structures.

Leaders preparing for these changes often explore strategic frameworks through programs like the Marketing and Business Certification, because adopting agents affects customer experience, productivity and cross-department coordination.

Conclusion

LLMs are powerful reasoning and language engines, but they do not act on their own. Agents extend these models with planning, memory and tool use, turning them into autonomous systems capable of completing tasks end to end. The future of AI in business will depend on how quickly organisations adopt agentic architectures and redesign workflows around them. Understanding the difference between LLMs and agents is the first step toward building practical and scalable AI-powered operations.
