Key Principles and Frameworks in AI Ethics

Introduction

AI is advancing quickly, but without ethics it can create more harm than good. That is why principles and frameworks in AI ethics are essential. They help organizations design, deploy, and govern AI in ways that protect people and build trust. In this guide, we will explore the most important principles and frameworks shaping AI ethics today. If you are planning a career in this space, building your foundation with an AI Certification is a smart first step. It shows that you understand both the technology and the responsibilities that come with it.

Why Ethical AI Requires Shared Principles

Ethics provides the guardrails that allow AI to be innovative and still safe. Shared principles mean that governments, companies, and researchers are working from the same rulebook. Without them, AI risks becoming untrustworthy, biased, or unsafe.

By agreeing on core principles, organizations create alignment across sectors and borders. This makes it easier to regulate, easier to adopt, and easier to trust.

Universal Ethics Pillars Across Frameworks

Most major frameworks highlight the same set of values. These universal pillars provide the foundation for responsible AI.

- Fairness: AI should not discriminate. Decisions must be free of bias and equitable across groups.

- Transparency: People must understand how AI systems make decisions. Black-box systems erode trust.

- Accountability: Someone must be responsible for AI outcomes. Organizations cannot blame “the algorithm.”

- Privacy: Personal data must be protected through strong security and careful data practices.

- Security: Systems must be resilient against attacks and misuse.

- Human Rights: AI must respect human dignity, freedoms, and values at every stage.

These values are echoed in frameworks from governments, companies, and international organizations.

Leading Frameworks Shaping AI Ethics

OECD Principles

The OECD AI Principles, adopted in 2019, were the first intergovernmental standard on AI. They call for innovative and trustworthy AI that respects human rights and democratic values.

IEEE Human-Centric Ethics

The IEEE framework, set out in its Ethically Aligned Design guidance, emphasizes designing AI with human well-being in mind. Its principles include human rights, accountability, transparency, and awareness of misuse.

Department of Defense Principles

In 2020, the U.S. Department of Defense adopted five principles for military use of AI: responsible, equitable, traceable, reliable, and governable. These show how ethics can be tailored to sector-specific needs.

Together, these frameworks set clear standards that other industries and countries often adopt.

Technical and Design-Centric Approaches

Ethics does not stop at principles. It also requires practical methods built into design and engineering.

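- Trustworthy AI: This approach stresses explainability, robustness, fairness, and privacy. Techniques like differential privacy, which adds calibrated noise to published results, and federated learning, which trains models without centralizing raw data, reduce how much personal data a system exposes.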

- Value Sensitive Design: VSD is a method where engineers embed human values directly into the design process. This ensures ethics are considered from the start, not as an afterthought.

- IEEE 7000 Standards: These provide structured methods for addressing ethics in system design, allowing organizations to follow clear steps for ethical engineering.

These methods make AI ethics more than words on paper. They turn principles into action.
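As one illustration of turning a principle into code, the sketch below applies differential privacy to a simple counting query using the Laplace mechanism. It is a minimal sketch, not a production implementation: the function names `laplace_noise` and `private_count`, the sample `ages` data, and the choice of `epsilon=0.5` are all assumptions made for this example. Smaller `epsilon` values add more noise and give stronger privacy.

```python
import random


def laplace_noise(scale, rng):
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential draws, each with
    mean `scale`, follows a Laplace distribution centered at zero.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Return a noisy count of the records matching `predicate`.

    Hypothetical helper for illustration. A counting query has
    sensitivity 1 (adding or removing one person changes the count
    by at most 1), so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Illustrative data only: how many people are 40 or older?
ages = [23, 35, 41, 29, 52, 67, 38, 44]
noisy = private_count(ages, lambda age: age >= 40, epsilon=0.5)
print(f"Noisy count: {noisy:.2f} (true count is 4)")
```

Each run prints a different value near the true count. In a real system the privacy budget would also need to be tracked across repeated queries, which this sketch leaves out.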

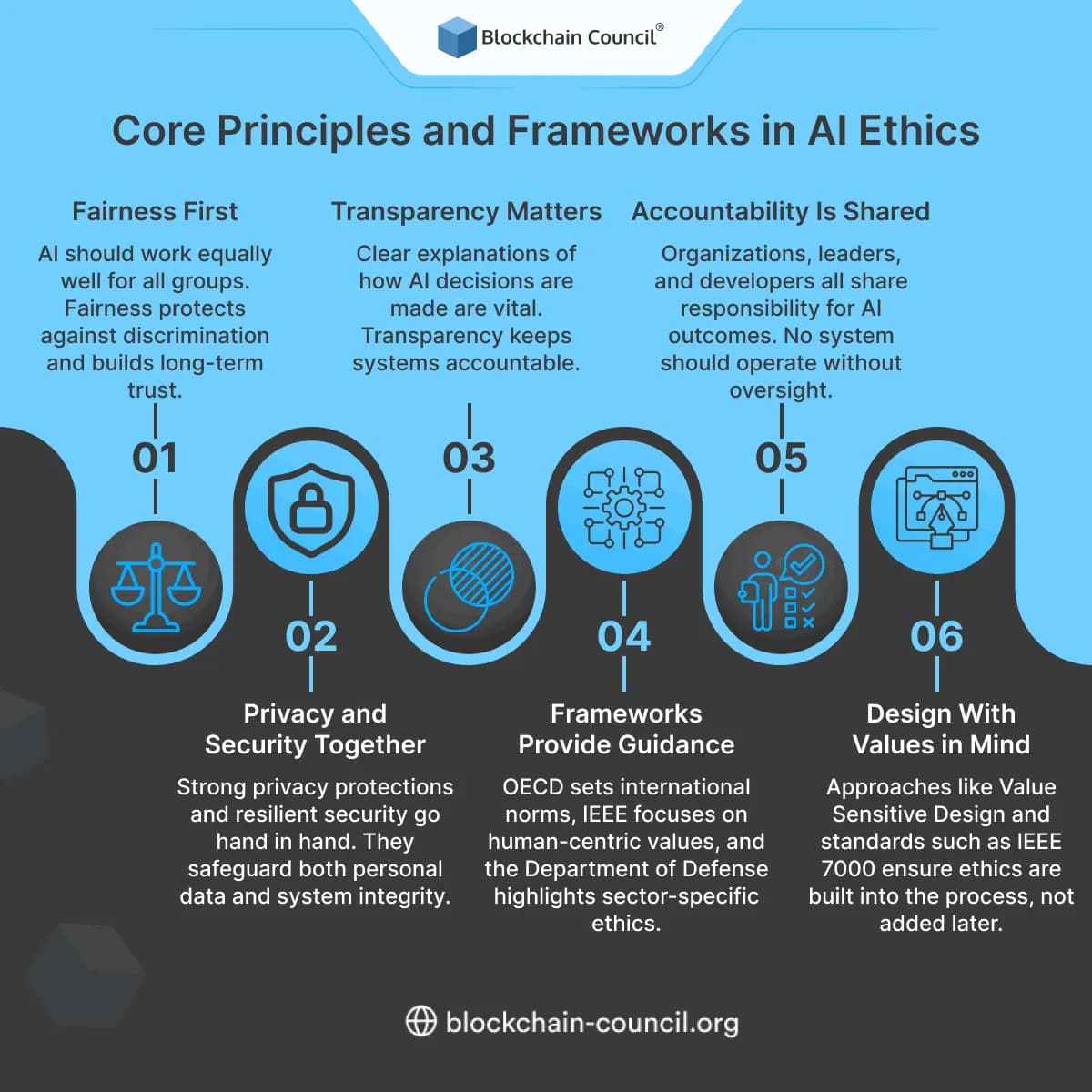

Core Principles and Frameworks in AI Ethics

Fairness First

AI should work equally well for all groups. Fairness protects against discrimination and builds long-term trust.
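One common way to make "equally well for all groups" measurable is to compare how often each group receives a positive decision, a check often called demographic parity. The sketch below is a simplified illustration under stated assumptions: the function names, the group labels `"a"` and `"b"`, and the sample predictions are invented for this example, and demographic parity is only one of several fairness definitions.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in zip(groups, predictions):
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(groups, predictions):
    """Return the largest difference in selection rate between groups.

    A gap of 0 means every group is selected at the same rate.
    """
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


# Illustrative data only: 1 = approved, 0 = declined.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

print(selection_rates(groups, predictions))  # {'a': 0.75, 'b': 0.25}
print(demographic_parity_gap(groups, predictions))  # 0.5
```

A large gap does not prove discrimination on its own, but it is a signal that the system deserves a closer review.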

Transparency Matters

Clear explanations of how AI decisions are made are vital. Transparency keeps systems accountable.

Accountability Is Shared

Organizations, leaders, and developers all share responsibility for AI outcomes. No system should operate without oversight.

Privacy and Security Together

Strong privacy protections and resilient security go hand in hand. They safeguard both personal data and system integrity.

Frameworks Provide Guidance

The OECD sets international norms, IEEE focuses on human-centric values, and the Department of Defense shows how ethics can be adapted to a specific sector.

Design With Values in Mind

Approaches like Value Sensitive Design and standards such as IEEE 7000 ensure ethics are built into the process, not added later.

This summary captures the most important and practical lessons from AI ethics today.

Real-World Applications and Governance

AI ethics is no longer a theoretical exercise. It is part of global governance. In March 2024, the United Nations General Assembly adopted its first resolution on AI, calling for safe, secure, and trustworthy systems that respect human rights. This signaled broad international agreement on the need for transparency and fairness.

Companies are also creating ethics councils and embedding ethics officers into leadership. Training is becoming common for developers and managers to understand their ethical responsibilities.

Bringing Ethics into Practice

For organizations, the path forward is clear. Start by adopting a governance council that oversees AI projects. Use frameworks like OECD and IEEE as reference points. Apply technical safeguards like differential privacy. Most importantly, test and review AI systems regularly to ensure ethical standards hold throughout the lifecycle.

Professionals can also take steps to strengthen their own skills. Data specialists can expand their expertise through the Data Science Certification. Business leaders can prepare for broader strategy and governance roles with the Marketing and Business Certification. These certifications help ensure that both technical and strategic leaders are ready to manage AI responsibly.

Conclusion

AI ethics is no longer optional. It is the foundation for building trust, ensuring compliance, and unlocking long-term benefits from AI systems. By following shared principles and adopting proven frameworks, organizations can design AI that respects human rights, protects privacy, and delivers fair outcomes.

For professionals, this is also an opportunity. Building skills in AI ethics not only makes you more valuable but also positions you as a leader in one of the most important areas of technology today.
