How to Build an AI Model Map

An AI model map is a clear, living record of every AI model your organization uses. It answers four questions fast: what AI do we have, where is it used, who owns it, and how risky is it? If you are dealing with multiple tools, vendors, or teams, building an AI model map is no longer optional.

If you are learning governance and validation basics through an AI Certification, this is one of the first practical systems you are expected to understand and implement.

What Is an AI Model Map?

In real practitioner language, an AI model map is a single view of all AI models in use, including:

- What the model does

- Where it runs

- What data it touches

- Who owns it

- What decisions it influences

- How risky it is

- How it is evaluated and monitored

You will also hear the same idea called a model inventory, model registry, or AI system of record. Different names, same goal.

Why Teams Build an AI Model Map

Most teams do not start with mapping for fun. They do it because something breaks.

Common triggers include:

- Leadership asking “how many AI models do we actually run?”

- A security or compliance review

- Unexpected costs from hidden AI usage

- Incidents where no one knows who owns a model

- Regulatory pressure around documentation and risk

From a Tech Certification perspective, this is basic operational hygiene, not advanced theory.

What Counts as a “Model” in the Map?

This is the first decision teams must make.

Most practitioners include:

- Traditional ML models like scoring or prediction systems

- LLMs and embedding models, including vendor APIs

- Agent workflows that combine models, tools, and memory

- Decision systems that act on model outputs

A key insight from governance discussions is simple: if a system influences decisions, it belongs in the map, even if it is not a custom-trained model.

Step 1: Discover Models Before You Document Them

You cannot map what you cannot see.

Teams usually discover models by checking:

- Cloud ML platforms and endpoints

- Kubernetes and VM deployments

- API gateways and secrets managers for model keys

- Code repositories for model calls

- Procurement and SaaS usage logs

- Network and browser activity for shadow AI

Shadow AI is the biggest blind spot. People often use tools long before governance catches up.
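One of the discovery checks above, scanning code repositories for model calls, can be sketched in a few lines of Python. This is a minimal illustration, not a complete scanner: the pattern names and regular expressions are assumptions chosen for the example, and a real scan would need patterns for whatever SDKs and key names your own stack uses.

```python
import re
from pathlib import Path

# Hypothetical patterns that hint a file calls a model; extend for your stack.
MODEL_CALL_PATTERNS = {
    "openai_sdk": re.compile(r"^\s*(import|from)\s+openai\b", re.MULTILINE),
    "anthropic_sdk": re.compile(r"^\s*(import|from)\s+anthropic\b", re.MULTILINE),
    "sklearn": re.compile(r"^\s*(import|from)\s+sklearn\b", re.MULTILINE),
    "api_key_env": re.compile(r"\b[A-Z_]*(OPENAI|ANTHROPIC)_API_KEY\b"),
}


def scan_text(text: str) -> list[str]:
    """Return the sorted names of all patterns that match the source text."""
    return sorted(
        name for name, pattern in MODEL_CALL_PATTERNS.items() if pattern.search(text)
    )


def scan_repo(root: str, suffixes: tuple[str, ...] = (".py",)) -> dict[str, list[str]]:
    """Walk a directory tree and report files that contain likely model calls."""
    findings: dict[str, list[str]] = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            hits = scan_text(path.read_text(errors="ignore"))
            if hits:
                findings[str(path)] = hits
    return findings
```

Calling `scan_repo(".")` from a repository root returns a mapping of file paths to matched pattern names, which gives you a candidate list to confirm with the owning team before anything goes into the map.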

Step 2: Classify Models So the Map Is Usable

Without structure, the map becomes unreadable.

Most teams classify each model by:

- Model type such as ML, LLM, embedding, agent

- Source such as in-house, vendor API, open model

- Usage such as internal or customer-facing

- Risk tier such as low, medium, high

This mirrors how regulators and internal risk teams think.
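The four classification dimensions above can be made machine-checkable with a small set of enumerations. The sketch below is one possible encoding, not a standard: the class names, the lowercase labels, and the `parse_classification` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class ModelType(Enum):
    ML = "ml"
    LLM = "llm"
    EMBEDDING = "embedding"
    AGENT = "agent"


class Source(Enum):
    IN_HOUSE = "in-house"
    VENDOR_API = "vendor api"
    OPEN_MODEL = "open model"


class Usage(Enum):
    INTERNAL = "internal"
    CUSTOMER_FACING = "customer-facing"


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class Classification:
    model_type: ModelType
    source: Source
    usage: Usage
    risk_tier: RiskTier


def parse_classification(row: dict[str, str]) -> Classification:
    """Convert free-text labels into enums; unknown labels raise ValueError."""
    def clean(key: str) -> str:
        return row[key].strip().lower()

    return Classification(
        model_type=ModelType(clean("model_type")),
        source=Source(clean("source")),
        usage=Usage(clean("usage")),
        risk_tier=RiskTier(clean("risk_tier")),
    )
```

Rejecting unknown labels at entry time is a deliberate choice here: a map with "High", "high risk", and "critical" in the same column is hard to filter or report on.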

Step 3: Create a Standard Model Record

Every model gets a basic record. This is the core of the map.

A practical minimum includes:

Identity

- Model name and ID

- Version

- Owner and business sponsor

Purpose

- Use case

- Who uses it

- What decisions it affects

Data

- Input sources

- Data sensitivity

- Retention rules

Deployment

- Where it runs

- Endpoints and regions

- Dependencies like tools or vector databases

Quality and Monitoring

- Evaluation metrics

- Monitoring in place

- Last review date

This is where theory turns into something auditable.
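As a rough sketch, the minimum record above maps naturally onto a Python dataclass. The field names are assumptions chosen to mirror the five groups in the list, and the `missing_fields` helper is a hypothetical convenience for spotting incomplete records.

```python
from dataclasses import dataclass, field, fields
from datetime import date
from typing import Optional


@dataclass
class ModelRecord:
    # Identity
    model_id: str
    name: str
    version: str
    owner: str
    business_sponsor: str = ""
    # Purpose
    use_case: str = ""
    users: str = ""
    decisions_affected: str = ""
    # Data
    input_sources: list[str] = field(default_factory=list)
    data_sensitivity: str = ""
    retention_rules: str = ""
    # Deployment
    runs_on: str = ""
    endpoints: list[str] = field(default_factory=list)
    regions: list[str] = field(default_factory=list)
    dependencies: list[str] = field(default_factory=list)
    # Quality and monitoring
    evaluation_metrics: dict[str, float] = field(default_factory=dict)
    monitoring: str = ""
    last_review: Optional[date] = None

    def missing_fields(self) -> list[str]:
        """List fields that are still empty, in declaration order."""
        empty_values = ("", None, [], {})
        return [f.name for f in fields(self) if getattr(self, f.name) in empty_values]
```

A record created with only the identity fields, for example `ModelRecord(model_id="m-001", name="churn-scorer", version="1.2.0", owner="data-team")`, reports every other field as missing, which gives reviewers a concrete to-do list per model.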

Step 4: Add Model Cards for Important Systems

For models that matter, teams write model cards.

A model card is the readable explanation of:

- What the model is for

- What it should not be used for

- Known limitations

- Evaluation results

- Safety considerations

This makes the map usable by non-engineers, auditors, and leadership.
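A model card can be generated from structured fields so that it stays consistent with the record. The sketch below renders the five parts listed above as plain text; the `ModelCard` class, its field names, and the output layout are illustrative assumptions rather than an established model card format.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    model_name: str
    intended_use: str
    out_of_scope_uses: list[str] = field(default_factory=list)
    limitations: list[str] = field(default_factory=list)
    evaluation_results: dict[str, str] = field(default_factory=dict)
    safety_considerations: list[str] = field(default_factory=list)


def _bullets(items: list[str]) -> list[str]:
    """Format items as bullets, flagging empty sections explicitly."""
    return [f"- {item}" for item in items] or ["- (not yet documented)"]


def render_card(card: ModelCard) -> str:
    """Render the card as plain text with one titled section per topic."""
    results = [f"{metric}: {value}" for metric, value in card.evaluation_results.items()]
    sections = [
        ("What the model is for", [card.intended_use]),
        ("What it should not be used for", card.out_of_scope_uses),
        ("Known limitations", card.limitations),
        ("Evaluation results", results),
        ("Safety considerations", card.safety_considerations),
    ]
    lines = [f"Model card: {card.model_name}"]
    for title, items in sections:
        lines.append("")
        lines.append(title)
        lines.extend(_bullets(items))
    return "\n".join(lines)
```

Empty sections are printed as "(not yet documented)" rather than omitted, so a reader can tell the difference between "no known limitations" and "nobody has written them down".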

Step 5: Choose Where the Model Map Lives

Teams usually mature through three stages.

Stage 1: Spreadsheet or Wiki

Fast to start. Hard to maintain. Breaks quickly.

Stage 2: Model Registry

Tools like MLflow, Vertex AI, or Azure ML track model versions and associated metadata, such as ownership, in one place.

Stage 3: Governance Platform plus Registry

Used by enterprises that need approvals, audits, and reporting.

The right choice depends on scale, not ambition.

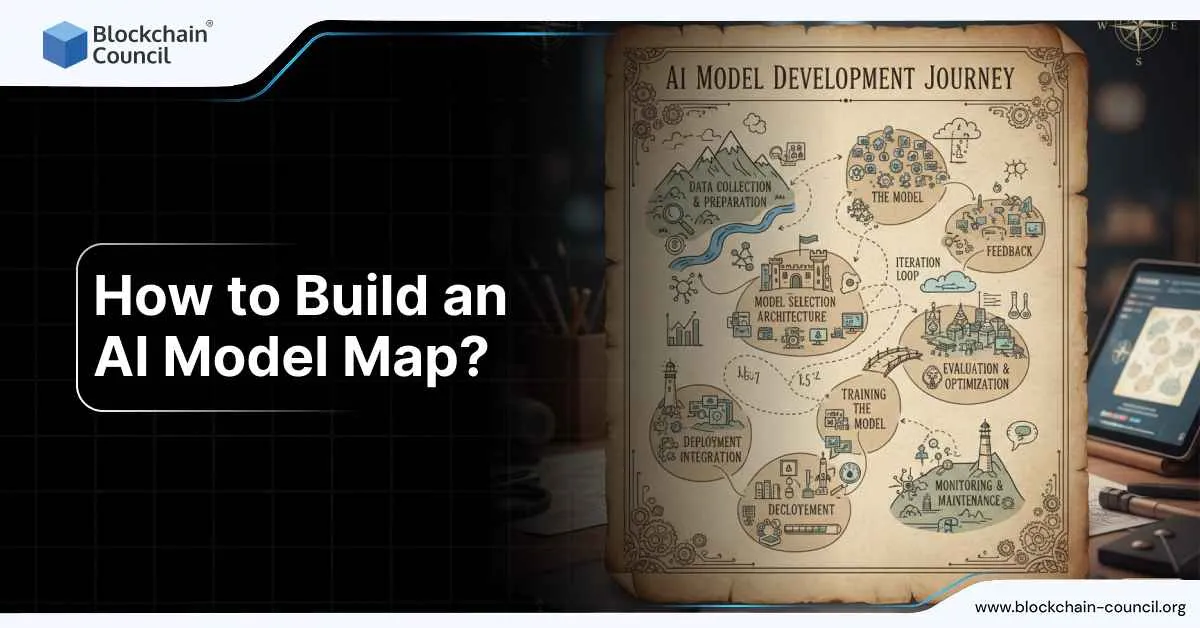

Step 6: Visualize the Model Ecosystem

Text alone is not enough.

The most useful visuals show:

- Model to data flow

- Model to application flow

- Model to decision flow

- Dependencies between tools and services

These visuals make risk and complexity visible instantly.
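One low-effort way to produce such visuals is to emit Graphviz DOT text from the relationships already stored in the map. The sketch below assumes each relationship is a simple (source, target, relation) triple; the function name and the example node names are hypothetical, and node names are assumed not to contain double quotes.

```python
def to_dot(edges: list[tuple[str, str, str]]) -> str:
    """Build a left-to-right DOT digraph from (source, target, relation) triples."""
    lines = ["digraph model_map {", "  rankdir=LR;"]
    for source, target, relation in edges:
        lines.append(f'  "{source}" -> "{target}" [label="{relation}"];')
    lines.append("}")
    return "\n".join(lines)


# Hypothetical example: one model with its data, application, and decision flows.
example_edges = [
    ("crm_database", "churn-scorer", "data"),
    ("churn-scorer", "retention_app", "application"),
    ("retention_app", "discount_offer", "decision"),
]

if __name__ == "__main__":
    print(to_dot(example_edges))
```

The output can be saved to a `.dot` file and rendered with any Graphviz-compatible tool, so the diagram regenerates whenever the underlying records change.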

Step 7: Keep the Map Updated Without Friction

Maps fail when updates feel optional.

Teams keep them alive with simple rules:

- No production deployment without a model record

- No vendor model key without an owner and risk tier

- Any change requires a version update

- High-risk models get scheduled reviews

This turns the map into a habit, not a project.
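Rules like these are easiest to enforce when a deployment pipeline can check them automatically. The following is a minimal sketch of such a gate, assuming records are plain dictionaries; the key names and the 90-day review interval are assumptions for illustration, not values from any standard.

```python
from datetime import date, timedelta

# Assumed review interval for high-risk models; set this from your own policy.
REVIEW_INTERVAL = timedelta(days=90)


def deployment_blockers(record: dict, today: date) -> list[str]:
    """Return the reasons a model should not be deployed; empty means it may proceed."""
    problems = []
    if not record.get("owner"):
        problems.append("missing owner")
    if not record.get("risk_tier"):
        problems.append("missing risk tier")
    if not record.get("version"):
        problems.append("missing version")
    if record.get("risk_tier") == "high":
        last_review = record.get("last_review")
        if last_review is None or today - last_review > REVIEW_INTERVAL:
            problems.append("high-risk review overdue")
    return problems
```

A pipeline step could call `deployment_blockers(record, date.today())` and fail the build when the list is non-empty, which makes the record a precondition rather than an afterthought.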

Special Notes for GenAI and Agent Systems

For GenAI, teams usually add:

- Prompt templates and system instructions

- Tool permissions and connectors

- Retrieval sources and update cadence

- Safety controls and abuse monitoring

- Cost and performance metrics like token usage and latency

This is where AI governance meets FinOps and security.
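These extra fields can sit alongside the standard record as a small extension. The sketch below is illustrative only: the `GenAIDetails` class and its field names are assumptions, and the per-1,000-token prices passed to `estimate_monthly_cost` are placeholders that you would replace with your vendor's actual rates.

```python
from dataclasses import dataclass, field


@dataclass
class GenAIDetails:
    prompt_template_ids: list[str] = field(default_factory=list)
    tool_permissions: list[str] = field(default_factory=list)
    # Maps each retrieval source to its update cadence, e.g. "daily".
    retrieval_sources: dict[str, str] = field(default_factory=dict)
    safety_controls: list[str] = field(default_factory=list)
    monthly_input_tokens: int = 0
    monthly_output_tokens: int = 0


def estimate_monthly_cost(
    details: GenAIDetails,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    """Estimate monthly spend from token counts and per-1,000-token prices."""
    input_cost = details.monthly_input_tokens / 1000 * input_price_per_1k
    output_cost = details.monthly_output_tokens / 1000 * output_price_per_1k
    return input_cost + output_cost
```

Keeping token counts on the record lets the same map answer both governance questions (which tools can this agent call?) and cost questions (what does it cost per month?).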

A Simple AI Model Map Starter Template

Most teams can start with these columns:

- Model ID

- Model name

- Model type

- Owner

- Business use case

- Customer-facing (yes or no)

- Decisions influenced

- Data sensitivity

- Deployment location

- Vendor or model source

- Version

- Evaluation method

- Monitoring status

- Risk tier

- Last review date

You can build this in a day and improve it over time.
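If you would rather generate the starter sheet than type it, the columns above can be written out as a CSV file with Python's standard `csv` module. The snake_case column identifiers and the `write_template` helper are assumptions made for this sketch.

```python
import csv
import io

# Column identifiers mirroring the starter template, in the same order.
COLUMNS = [
    "model_id", "model_name", "model_type", "owner", "business_use_case",
    "customer_facing", "decisions_influenced", "data_sensitivity",
    "deployment_location", "model_source", "version", "evaluation_method",
    "monitoring_status", "risk_tier", "last_review_date",
]


def write_template(rows: list[dict[str, str]], stream) -> None:
    """Write a header plus any known rows; missing cells are left blank."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS, restval="")
    writer.writeheader()
    writer.writerows(rows)


if __name__ == "__main__":
    buffer = io.StringIO()
    write_template([{"model_id": "m-001", "owner": "data-team"}], buffer)
    print(buffer.getvalue())
```

Because `DictWriter` raises an error on keys that are not in `COLUMNS`, misspelled column names are caught when the file is written rather than discovered later in a review.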

Why This Matters Long Term

An AI model map is not paperwork. It is how you stay in control as AI usage grows.

> For leadership, it answers accountability questions.

> For engineers, it prevents chaos.

> For compliance, it provides evidence.

> For strategy teams and Marketing and Business Certification holders, it supports confident decisions about where AI should and should not be used.

Build it early, keep it simple, and let it evolve.