Multimodal Foundation Models

Multimodal foundation models are large AI systems trained to understand and generate more than one kind of data, typically text, images, audio, and video. Instead of treating language, vision, and sound as separate problems with separate models, multimodal systems aim to learn shared patterns across modalities and use them together.

This matters because the real world is not made of paragraphs. It is made of screens, photos, voice notes, scanned documents, charts, and messy context. Multimodal models are designed to work in that reality, not the tidy universe where everything is already typed out.

What “multimodal” actually means

A multimodal foundation model can accept inputs such as an image plus a question, a video clip plus a prompt, or speech plus on-screen content, then produce an output like text, structured data, or audio. OpenAI’s GPT-4o is positioned as “natively multimodal,” meaning it is built to reason across text, vision, and audio with low-latency interactions.

Google’s Gemini 1.5 line is also described as multimodal and has emphasized long-context understanding, including very large context windows that can hold extensive documents and other media.

Open-source and “open weights” options have expanded too. Meta’s Llama 3.2 introduced vision-capable variants intended to bring multimodal capability to more developers, including those targeting edge devices.

How these models are built

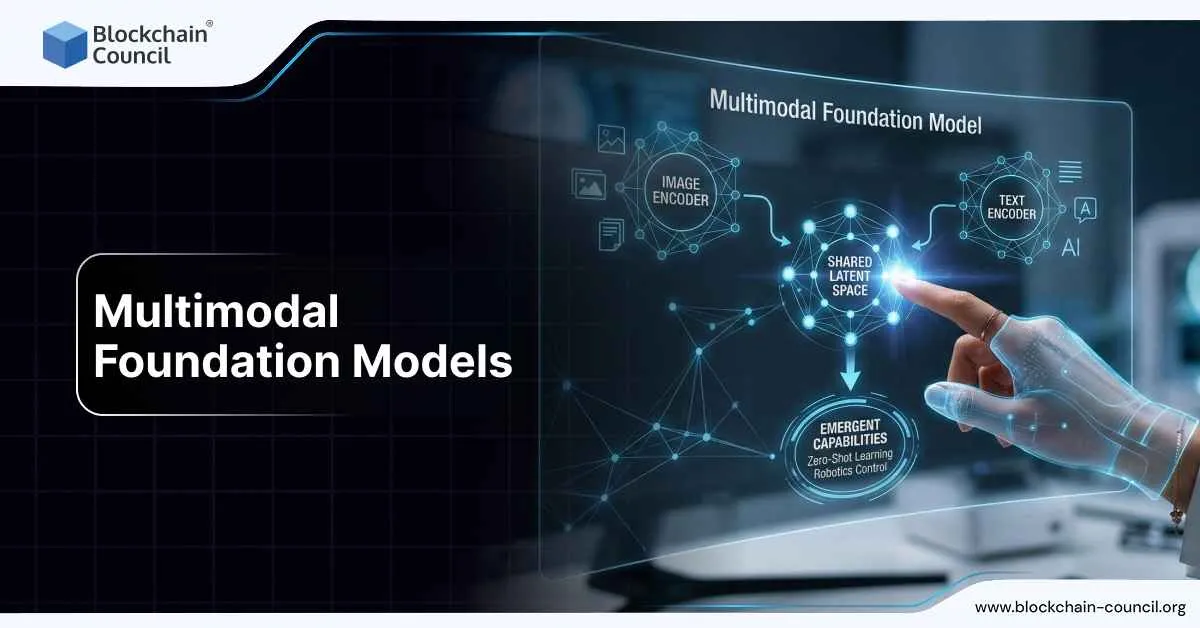

Shared representations

At a high level, multimodal models map different data types into internal representations that can interact. Images might be converted into sequences of visual tokens. Audio can be converted into features or tokens. Text is tokenized in the usual way. Once everything is in a compatible internal format, the model can learn relationships like “this region of the image corresponds to this phrase” or “this sound indicates this event.”

Training signals

Multimodal training often uses combinations of:

- Image-text pairs (captions, alt-text, web data)

- Instruction-style datasets (questions about images, documents, UI screens)

- Audio-text pairs (speech and transcripts)

- Video-text pairs (descriptions, temporal reasoning tasks)

The best systems increasingly mix general web-scale pretraining with curated instruction tuning and safety tuning. The aim is not only capability, but also reliable behavior under messy real inputs.

Why “foundation” matters

These are “foundation” models because they are designed as general-purpose bases that can be adapted to many tasks: summarizing documents with figures, answering questions over a product photo, analyzing a call recording, or extracting fields from a scanned form.

What’s new recently

Native multimodality and faster interaction

One major trend is moving from “bolt-on” vision or speech to more integrated systems. GPT-4o was announced as a flagship model that works across audio, vision, and text in real time. This shift is about user experience as much as accuracy: smoother back-and-forth, less friction between “showing” and “telling.”

Longer context for mixed inputs

Another trend is long context. Google presented Gemini 1.5 as a step forward in long-context multimodal understanding, and later materials highlighted very large context windows in Gemini 1.5 Pro. Long context is not just “more tokens.” It enables practical workflows like analyzing hundreds of pages of mixed text and images, or reviewing long videos while keeping earlier details in memory.

Multimodal models spreading across ecosystems

Meta’s Llama 3.2 vision models are an example of multimodal capability showing up in more places, including cloud platforms and edge-focused setups. As competition increases, multimodality is becoming less “premium add-on” and more “baseline expectation,” which is how technologies usually become boring and useful.

Evaluation is getting more serious

Research communities are expanding benchmarks that test vision-language reasoning, document understanding, and interactive feedback loops that involve multiple modalities. Recent academic work compares top models for tasks that combine visual and textual signals, reflecting how evaluation is adapting to real usage.

Real-world examples that actually matter

Document and form understanding

Businesses run on PDFs, scanned forms, receipts, invoices, and screenshots. Multimodal models can extract structured fields, answer questions about a contract page, or summarize a report that contains charts and tables. This is not glamorous, but it saves time and reduces manual review.

Customer support with images

A customer can upload a photo of a damaged product, a screenshot of an error message, or a picture of a device’s wiring, then ask for help. A multimodal model can interpret the image and provide targeted steps. Compared to text-only support, this cuts the “describe what you see” loop that humans famously struggle with.

Healthcare and medical imaging support

In strictly controlled, compliant settings, multimodal models can help with workflow tasks like drafting radiology summaries from structured inputs, triaging images for routing, or explaining medical documents in plain language. The key point is “support,” not replacing clinical judgment, because regulators and reality both exist.

Robotics and embodied AI

Robots and agents in the physical world need vision, sometimes audio, and an understanding of instructions. Multimodal models are increasingly used as the reasoning core for systems that interpret scenes and plan actions, even if the final control loop is handled by specialized software.

Key challenges

Reliability and hallucinations across modalities

If a model “confidently” misreads a chart or misses a small detail in an image, the output can be wrong in ways that look persuasive. Multimodality increases the surface area for errors. Better evaluation, uncertainty handling, and human-in-the-loop design remain critical.

Data quality, consent, and privacy

Training data that includes images and audio increases privacy and copyright complexity. This has pushed the industry toward stronger data governance, filtering, and provenance tracking, plus enterprise deployments that keep sensitive content in controlled environments.

Safety in audio and vision

Multimodal models can be used for scams, impersonation, and manipulation. Safety work now includes guarding not only text outputs but also voice outputs, image interpretation, and tool use. The “attack surface” is bigger, so defensive engineering has to be, too.

Cost and latency

Processing video and audio can be expensive. The practical winners are often models that balance quality with speed and cost, especially for high-volume business use.

Why skills and certification are showing up everywhere

As multimodal foundation models move from demos to deployment, organizations need people who can translate capability into safe, measurable systems. That includes model selection, evaluation design, data handling, prompt and tool pipelines, and compliance.

Structured learning pathways can help professionals signal competence, especially when hiring managers are trying to separate real expertise from confident guessing. Options include an AI certification for AI fundamentals and applied practice, plus broader tracks like, deep tech certification, and marketing certification for people working across emerging-technology roles.

Even if your job is not “model training,” multimodal systems touch product, risk, security, operations, and customer experience. That means cross-functional literacy is becoming a real career advantage, not just something people pretend to have on professional networking sites.

Where this is going next

Multimodal foundation models are trending toward three things: better real-time interaction, better long-context reasoning over mixed media, and broader availability across closed and open ecosystems. The next phase is less about flashy capability and more about dependable integration: consistent performance, clear evaluation, strong safeguards, and predictable cost.

In short, multimodal foundation models are turning AI from a text box into a general interface for digital work. Humans will still manage to misuse it, but at least the tools are getting closer to how the world actually looks and sounds.