How Does Edge AI Work?

Edge AI works by taking an AI model that was trained elsewhere, usually in the cloud or a data center, and running it close to where data is created, so decisions happen in milliseconds instead of after a round trip to a server. Instead of sending everything to remote servers and waiting for a reply, the device itself makes the decision.

Anyone starting an AI course usually builds models in notebooks first. Edge AI is where those models leave the lab and start working in the real world: inside cameras, machines, phones, cars, and sensors.

This is not about theory. This is about how AI actually runs day to day.

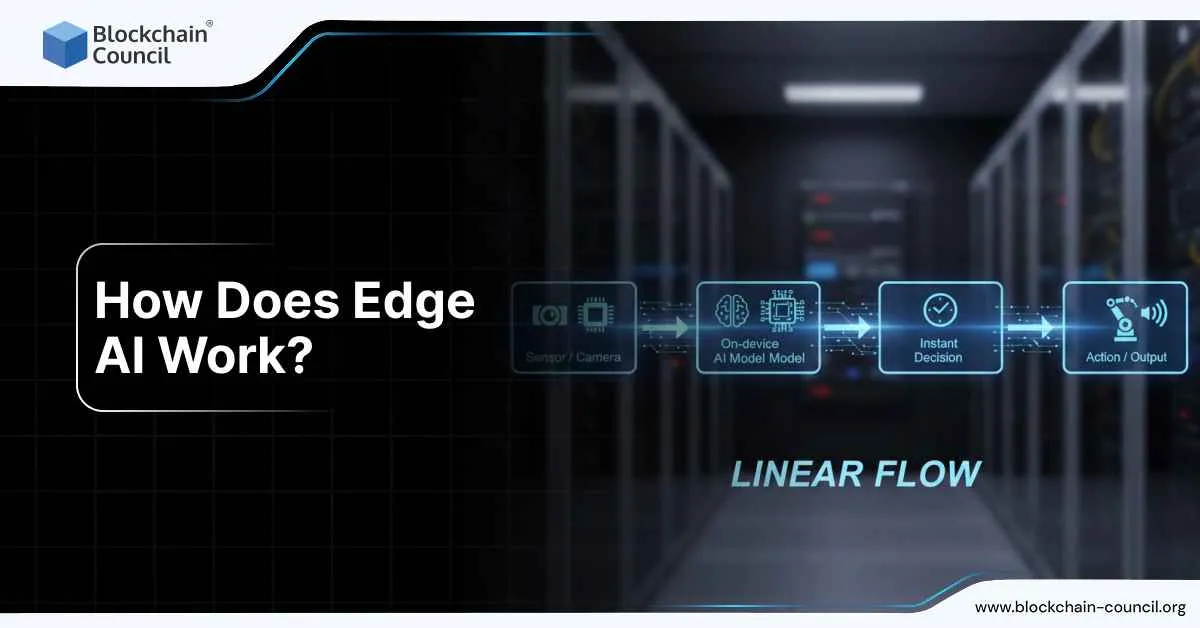

Edge AI working

Edge AI follows one basic idea.

- Data is created somewhere.

- The decision should happen there too.

If a camera sees danger, it should react immediately.

If a machine senses failure, it should stop instantly.

If a device hears a command, it should respond without waiting for the internet.

That is the core promise of Edge AI.

The basic edge AI loop

Every edge AI system follows the same loop, even if the hardware changes.

- A device captures data locally

This could be video frames, audio, sensor readings, or machine signals.

- A model runs locally

The AI model processes the data on the device or a nearby gateway.

- An output is produced

Labels, scores, bounding boxes, text, or predictions.

- An action happens immediately

Alerts fire, machines stop, doors unlock, calls route, systems adjust.

- Only selected data goes to the cloud

Logs, metrics, and small samples are sent later for monitoring and improvement.

The key is simple.

Decisions are local. The cloud is optional support.
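The loop above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration. `EdgeLoop`, `toy_model`, the 0.8 threshold, and the `stop_machine` action are all invented for the example. A real device would read from a camera or sensor driver and call an inference runtime instead of a toy function.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class EdgeLoop:
    """Hypothetical sketch of the capture -> infer -> act -> upload-selected loop."""
    model: Callable[[List[float]], float]                   # returns a score in [0, 1]
    threshold: float = 0.8                                  # assumed alert threshold
    upload_queue: List[dict] = field(default_factory=list)  # sent to the cloud later
    actions: List[str] = field(default_factory=list)        # local actions taken

    def step(self, frame_id: int, reading: List[float]) -> str:
        score = self.model(reading)                               # 2. model runs locally
        decision = "alert" if score >= self.threshold else "ok"   # 3. output produced
        if decision == "alert":
            self.actions.append(f"stop_machine:{frame_id}")       # 4. act immediately
            # 5. Only a small event record is queued for the cloud, not the raw reading.
            self.upload_queue.append({"frame": frame_id, "score": round(score, 3)})
        return decision


def toy_model(reading: List[float]) -> float:
    """Stand-in for a real network: the mean reading, clipped to [0, 1]."""
    return max(0.0, min(1.0, sum(reading) / len(reading)))


loop = EdgeLoop(model=toy_model)
readings = [[0.1, 0.2], [0.8, 1.0], [0.3, 0.4]]          # 1. data captured locally
decisions = [loop.step(i, r) for i, r in enumerate(readings)]
print(decisions)          # ['ok', 'alert', 'ok']
print(loop.upload_queue)  # [{'frame': 1, 'score': 0.9}]
```

Only one of the three readings leaves the device, and it leaves as a tiny summary rather than raw data.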

Training vs inference

This is where many beginners get confused.

Most Edge AI systems do not train models on the device.

They work like this:

- Training happens centrally

Large datasets and powerful hardware are used to train and validate models.

- Inference happens at the edge

The trained model is deployed to devices to make predictions on live data.

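Training is heavy: many passes over large datasets on powerful hardware.

Inference is comparatively fast and lightweight: one forward pass per input with fixed weights.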

Edge AI is almost always about inference.
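To make the split concrete, here is a toy sketch under stated assumptions. A one-feature logistic regression stands in for a real model, and `train_logistic`, `predict`, and the sensor data are hypothetical. Training loops over the data thousands of times. The device only receives the two learned numbers and does one cheap calculation per input.

```python
import json
import math


# --- Central side (cloud / data center): training is iterative and data-hungry ---
def train_logistic(xs, ys, epochs=2000, lr=0.5):
    """Fit y ~ sigmoid(w*x + b) with plain batch gradient descent (toy example)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(w * x + b)))
            grad_w += (p - y) * x / n
            grad_b += (p - y) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return {"w": w, "b": b}


# Hypothetical training data: scaled sensor temperature -> "overheating" label
xs = [0.1, 0.2, 0.3, 0.7, 0.8, 0.9]
ys = [0, 0, 0, 1, 1, 1]
artifact = json.dumps(train_logistic(xs, ys))  # the only thing shipped to devices

# --- Edge side: inference is a single forward pass with frozen weights ---
params = json.loads(artifact)


def predict(x):
    return 1.0 / (1.0 + math.exp(-(params["w"] * x + params["b"])))


print(predict(0.15) < 0.5, predict(0.85) > 0.5)  # True True
```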

What runs on an edge device

An edge AI device is not just running a single model file. It runs a small pipeline.

Data capture and preparation

Before AI even runs, raw data needs cleanup.

- Reading sensors or video streams

- Decoding and resizing images

- Normalizing audio or signals

- Filtering noise

This step reduces compute load and improves accuracy.
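Here is a minimal sketch of three of these preparation steps in plain Python. The function names and the window size are assumptions for illustration. Production pipelines typically use optimized libraries or hardware decoders for the same operations.

```python
from typing import List


def moving_average(signal: List[float], window: int = 3) -> List[float]:
    """Filter high-frequency noise with a trailing moving average."""
    out, acc = [], 0.0
    for i, v in enumerate(signal):
        acc += v
        if i >= window:
            acc -= signal[i - window]          # drop the value leaving the window
        out.append(acc / min(i + 1, window))   # shorter window at the start
    return out


def min_max_normalize(signal: List[float]) -> List[float]:
    """Rescale to [0, 1] so the model sees the value range it was trained on."""
    lo, hi = min(signal), max(signal)
    span = (hi - lo) or 1.0                    # avoid division by zero on a flat signal
    return [(v - lo) / span for v in signal]


def resize_nearest(img: List[List[int]], out_h: int, out_w: int) -> List[List[int]]:
    """Downscale a 2D grayscale image with nearest-neighbor sampling."""
    in_h, in_w = len(img), len(img[0])
    return [[img[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
            for r in range(out_h)]


print(moving_average([3, 3, 9, 3]))            # [3.0, 3.0, 5.0, 5.0]
print(min_max_normalize([2, 4, 6]))            # [0.0, 0.5, 1.0]
image = [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11], [12, 13, 14, 15]]
print(resize_nearest(image, 2, 2))             # [[0, 2], [8, 10]]
```

A 4×4 frame becomes 2×2 before inference, which is where the reduced compute load comes from.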

Inference runtime

This is the engine that runs the model.

- Loads the optimized model

- Executes it on CPU, GPU, or NPU

- Manages memory and performance

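Different devices use different runtimes depending on their hardware. Examples include TensorFlow Lite, ONNX Runtime, NVIDIA TensorRT on NVIDIA GPUs, and Core ML on Apple devices.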
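The sketch below is a hypothetical toy runtime, not the API of any real product. It shows the three duties listed above. Weights are loaded once. A backend is chosen from what the hardware offers, preferring NPU, then GPU, then CPU. Each call then runs the model. Real runtimes such as ONNX Runtime express a comparable idea through an ordered list of execution providers.

```python
from typing import List, Sequence

# Hypothetical preference order: use the most specialized accelerator present.
PREFERRED_BACKENDS = ["npu", "gpu", "cpu"]


class TinyRuntime:
    """Toy inference runtime: loads weights once, picks a backend, runs a dense layer."""

    def __init__(self, weights: List[List[float]], available: Sequence[str]):
        self.weights = weights  # loaded once and reused for every call
        # Pick the first preferred backend the device reports; fall back to CPU.
        self.backend = next((b for b in PREFERRED_BACKENDS if b in available), "cpu")

    def run(self, x: List[float]) -> List[float]:
        # A real runtime would dispatch to backend-specific kernels here;
        # this toy uses the same pure-Python matrix-vector product everywhere.
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.weights]


rt = TinyRuntime([[1, 0], [0, 2]], available=["cpu", "gpu"])
print(rt.backend, rt.run([3, 4]))  # gpu [3, 8]
```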

Post-processing logic

Model outputs are rarely usable as-is.

Post-processing includes:

- Applying thresholds

- Tracking objects over time

- Filtering low-confidence predictions

- Applying business rules

This step turns raw predictions into decisions.
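Here is a hedged sketch of post-processing for a hypothetical intrusion-detection camera. The `person` label, the 0.6 confidence cut-off, and the three-frame persistence rule are assumptions chosen for illustration. The point is how filtering, thresholds, and tracking over time combine into one decision.

```python
from collections import deque
from typing import Deque, List, Tuple

Detection = Tuple[str, float]  # (label, confidence) from the model


class PostProcessor:
    """Turns raw per-frame detections into a debounced decision (hypothetical rules)."""

    def __init__(self, min_conf: float = 0.6, frames_required: int = 3):
        self.min_conf = min_conf
        # Remember only the last N frames: a minimal form of tracking over time.
        self.history: Deque[bool] = deque(maxlen=frames_required)

    def update(self, detections: List[Detection]) -> str:
        # 1. Filter low-confidence predictions.
        kept = [d for d in detections if d[1] >= self.min_conf]
        # 2. Per-frame check: is a person present in this frame?
        self.history.append(any(label == "person" for label, _ in kept))
        # 3. Business rule: alarm only if the person persists for N consecutive
        #    frames, which suppresses one-frame flickers.
        if len(self.history) == self.history.maxlen and all(self.history):
            return "ALARM"
        return "CLEAR"


pp = PostProcessor()
frames = [[("person", 0.9)], [("person", 0.4)], [("person", 0.9)],
          [("person", 0.8)], [("person", 0.95)]]
print([pp.update(f) for f in frames])  # ['CLEAR', 'CLEAR', 'CLEAR', 'CLEAR', 'ALARM']
```

The low-confidence detection in frame 2 breaks the streak, so the alarm fires only after three clean frames in a row.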

Local actions

Finally, the device acts.

- Trigger alarms

- Stop equipment

- Store events

- Display overlays

- Send minimal alerts upstream

This is where Edge AI creates real value.
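Storing events and sending minimal alerts upstream often means tolerating an unreliable network. A common pattern is store-and-forward: keep events in a bounded local queue and flush them when the uplink works. The sketch below assumes a hypothetical `send` callback that returns `True` on success. `EventBuffer` is an illustrative name, not a library class.

```python
import json
import time
from collections import deque
from typing import Callable, Deque, Optional


class EventBuffer:
    """Store events locally; forward compact alerts when the uplink is available."""

    def __init__(self, send: Callable[[str], bool], capacity: int = 1000):
        self.send = send                                     # hypothetical uplink callback
        self.pending: Deque[dict] = deque(maxlen=capacity)   # oldest dropped if full

    def record(self, kind: str, detail: dict, ts: Optional[float] = None) -> None:
        self.pending.append({"ts": ts if ts is not None else time.time(),
                             "kind": kind, **detail})

    def flush(self) -> int:
        """Send pending events in order; stop at the first failure and retry later."""
        sent = 0
        while self.pending:
            if not self.send(json.dumps(self.pending[0])):
                break                      # network down: keep the event on the device
            self.pending.popleft()
            sent += 1
        return sent


online = False
outbox = []


def fake_send(msg: str) -> bool:
    """Simulated uplink that only succeeds while `online` is True."""
    if online:
        outbox.append(msg)
    return online


buf = EventBuffer(fake_send)
buf.record("equipment_stop", {"line": 3}, ts=0.0)
first = buf.flush()    # 0: offline, the event stays queued
online = True
second = buf.flush()   # 1: uplink restored, the event is delivered
```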

Why Edge AI is fast

Edge AI is fast because it removes distance.

No waiting for:

- Network round trips

- Cloud queue delays

- Bandwidth bottlenecks

The model runs where the data is created.

This leads to:

- Lower latency

- Lower bandwidth costs

- Better reliability

- Improved privacy

That is why Edge AI is critical in safety, healthcare, manufacturing, and real-time systems.
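A back-of-envelope calculation shows why removing distance matters. Every number below is an illustrative assumption rather than a measurement: a 4 Mbps camera stream, 200 events a day at 50 KB each, and the millisecond figures. Substitute your own.

```python
# Back-of-envelope comparison; every number here is an illustrative assumption.
SECONDS_PER_DAY = 24 * 60 * 60

# Bandwidth: streaming one camera continuously vs. sending only events.
stream_mbps = 4.0                                              # assumed stream bitrate, megabits/s
stream_gb_per_day = stream_mbps * SECONDS_PER_DAY / 8 / 1000   # Mb -> MB -> GB
events_per_day = 200                                           # assumed flagged events per day
event_kb = 50                                                  # assumed size of one event record
events_gb_per_day = events_per_day * event_kb / 1_000_000      # KB -> GB

# Latency: the cloud path adds a network round trip and queueing to inference time.
local_ms = 15                 # assumed on-device inference time
cloud_ms = 60 + 20 + 10       # assumed round trip + queueing + server inference

print(f"stream: {stream_gb_per_day:.1f} GB/day, events: {events_gb_per_day:.2f} GB/day")
print(f"local: {local_ms} ms, cloud: {cloud_ms} ms")
# stream: 43.2 GB/day, events: 0.01 GB/day
# local: 15 ms, cloud: 90 ms
```

Under these assumptions, sending only events cuts upload volume per camera by more than three orders of magnitude.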

Making models fit on edge hardware

Edge devices have far less memory, compute, and power than data-center servers, so models must be adapted to fit.

Model size reduction

Common techniques include:

- Reducing numerical precision (quantization, for example from 32-bit floats to 8-bit integers)

- Removing unused layers

- Compressing weights

Smaller models load faster and use less power.
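Numerical precision is usually reduced through quantization. The sketch below shows one simple variant, symmetric per-tensor int8 quantization, applied to a handful of made-up weights. Real toolchains add calibration, per-channel scales, and integer kernels, so treat this only as an illustration of the idea.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: w ~= q * scale, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights) or 1.0   # guard against an all-zero tensor
    scale = max_abs / 127.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    return [v * scale for v in q]


weights = [0.52, -1.27, 0.003, 0.9]          # made-up float32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# 4 bytes per float32 weight vs. 1 byte per int8 weight, plus one float32 scale.
fp32_bytes, int8_bytes = 4 * len(weights), len(weights) + 4
print(q, fp32_bytes, int8_bytes)             # [52, -127, 0, 90] 16 8
```

Per weight, storage drops by 4×. The rounding error stays within half a quantization step (`scale / 2`).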

Hardware optimization

Models are often converted into formats optimized for the target device.

This allows:

- Faster inference

- Better use of accelerators

- Lower energy consumption
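One typical conversion-time optimization folds a batch-normalization step into the dense (or convolution) layer before it, so the device runs one operation instead of two. This is a sketch of that single technique with made-up numbers. It assumes batch norm uses fixed inference-time statistics. Actual converters apply many such rewrites automatically.

```python
import math


def dense(W, b, x):
    """y = W x + b for a small fully connected layer."""
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def batchnorm(y, gamma, beta, mean, var, eps=1e-5):
    """Inference-time batch norm with fixed running statistics."""
    return [g * (v - m) / math.sqrt(s + eps) + bt
            for v, g, bt, m, s in zip(y, gamma, beta, mean, var)]


def fold_batchnorm(W, b, gamma, beta, mean, var, eps=1e-5):
    """Fold batch norm into the preceding dense layer: W' = k*W, b' = k*(b - mean) + beta."""
    W2, b2 = [], []
    for row, bi, g, bt, m, s in zip(W, b, gamma, beta, mean, var):
        k = g / math.sqrt(s + eps)          # per-output-channel scale factor
        W2.append([w * k for w in row])
        b2.append((bi - m) * k + bt)
    return W2, b2


W, b = [[0.5, -1.0], [2.0, 0.25]], [0.1, -0.2]
gamma, beta, mean, var = [1.5, 0.8], [0.0, 0.3], [0.05, 0.4], [0.9, 2.0]
x = [1.0, 2.0]

two_ops = batchnorm(dense(W, b, x), gamma, beta, mean, var)
W2, b2 = fold_batchnorm(W, b, gamma, beta, mean, var)
one_op = dense(W2, b2, x)   # same result with one fewer operation per inference
```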

Latency vs throughput tuning

Edge systems often prioritize latency.

- Single predictions must be fast

- Real-time response matters

In some cases, such as multi-camera video analytics or high-speed production lines, throughput (predictions per second) also matters.

The balance depends on the use case.
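The trade-off can be seen with a toy cost model in which each batch pays a fixed overhead plus a per-item cost. Both costs are assumed numbers. The model also ignores time spent waiting for a batch to fill, so it understates the latency penalty of batching.

```python
from typing import Tuple

# Toy cost model; both constants are assumptions for illustration.
FIXED_MS = 8.0      # assumed per-batch overhead (kernel launch, data transfer)
PER_ITEM_MS = 2.0   # assumed incremental cost per input in the batch


def batch_stats(batch_size: int) -> Tuple[float, float]:
    """Return (latency in ms, throughput in items/s) for one batch size."""
    batch_ms = FIXED_MS + PER_ITEM_MS * batch_size
    latency_ms = batch_ms                           # every item waits for the whole batch
    throughput = batch_size / (batch_ms / 1000.0)   # items per second
    return latency_ms, throughput


for n in (1, 8, 32):
    lat, thr = batch_stats(n)
    print(f"batch={n:>2}  latency={lat:.0f} ms  throughput={thr:.0f} items/s")
```

Larger batches spread the fixed overhead over more inputs, raising throughput, but each individual result arrives later. A safety alarm would pick batch size 1. A system counting products on a fast line might accept the extra delay.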

These optimizations are a major focus area in advanced Tech Certification programs.

How models get deployed to devices

Deployment is where Edge AI becomes operational.

App-based deployment

The model is bundled inside the application running on the device.

This is common for:

- Mobile apps

- Consumer devices

- Embedded systems

Managed edge deployment

Models are packaged and shipped separately from the application as versioned artifacts, managed by a central service.

This approach supports:

- Remote updates

- Version control

- Rollback on failure

- Fleet-wide management

This is essential when managing hundreds or thousands of devices.
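The update, versioning, and rollback duties can be sketched on the device side. `ModelManager` and its health check are hypothetical. The sketch assumes the fleet service ships each model with a SHA-256 digest. It accepts a new version only after the digest matches and a local smoke test passes.

```python
import hashlib
from typing import Callable, Dict, Optional


class ModelManager:
    """Hypothetical device-side manager: verify, activate, and roll back model versions."""

    def __init__(self) -> None:
        self.store: Dict[str, bytes] = {}    # version -> model bytes kept on the device
        self.active: Optional[str] = None    # version currently serving predictions
        self.previous: Optional[str] = None  # last known-good version, kept for rollback

    def install(self, version: str, blob: bytes, sha256: str,
                health_check: Callable[[bytes], bool]) -> bool:
        # Reject corrupted or tampered downloads before touching the active model.
        if hashlib.sha256(blob).hexdigest() != sha256:
            return False
        self.store[version] = blob
        prior = self.active
        self.active = version
        # Smoke-test the new model on the device; restore the old one if it fails.
        if not health_check(blob):
            self.active = prior
            del self.store[version]
            return False
        self.previous = prior
        return True

    def rollback(self) -> bool:
        """Manually revert to the previous version, e.g. after a fleet-wide alert."""
        if self.previous is None:
            return False
        self.active, self.previous = self.previous, None
        return True


mgr = ModelManager()
v1, v2 = b"model-v1", b"model-v2-broken"
ok1 = mgr.install("1.0", v1, hashlib.sha256(v1).hexdigest(), lambda m: True)
ok2 = mgr.install("2.0", v2, hashlib.sha256(v2).hexdigest(), lambda m: b"broken" not in m)
tampered = mgr.install("3.0", b"evil", "0" * 64, lambda m: True)
print(ok1, ok2, tampered, mgr.active)  # True False False 1.0
```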

Monitoring and updates

Edge AI is not deploy-and-forget.

The real world changes.

- Lighting shifts

- Environments evolve

- Sensors drift

- User behavior changes

A healthy Edge AI lifecycle looks like this:

- Collect performance metrics

- Capture limited samples

- Identify failures and drift

- Retrain or fine-tune models

- Redeploy updates safely

Without monitoring, accuracy degrades silently.
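Ground-truth labels are rarely available on the device, so one simple monitoring signal is the model's own confidence. The sketch below flags possible drift when the rolling average confidence falls a set margin below a baseline measured at deployment time. `ConfidenceDriftMonitor`, the baseline, and the margin are assumptions. Confidence is only a proxy, so a real pipeline would confirm drift with labeled samples.

```python
from collections import deque
from statistics import mean


class ConfidenceDriftMonitor:
    """Flag possible drift when recent mean confidence drops below baseline - tolerance."""

    def __init__(self, baseline: float, tolerance: float = 0.1, window: int = 100):
        self.baseline = baseline             # assumed: mean confidence at deployment
        self.tolerance = tolerance           # assumed acceptable drop before alerting
        self.recent = deque(maxlen=window)   # rolling window of recent confidences

    def observe(self, confidence: float) -> bool:
        self.recent.append(confidence)
        if len(self.recent) < self.recent.maxlen:
            return False                     # not enough data for a stable average yet
        return mean(self.recent) < self.baseline - self.tolerance


mon = ConfidenceDriftMonitor(baseline=0.9, tolerance=0.1, window=5)
healthy = [mon.observe(0.92) for _ in range(5)]  # e.g. normal lighting
drifted = [mon.observe(0.6) for _ in range(5)]   # e.g. lighting shifts
print(healthy, drifted)
```

When the monitor fires, the device can capture a few samples for review, which feeds the retrain-and-redeploy steps listed above.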

Example

Consider a factory inspection system.

- Cameras capture product images

- A defect detection model runs locally

- Decisions happen instantly

- Only defect events are logged centrally

The factory does not stream video nonstop.

It sends only what matters.

That is Edge AI working exactly as intended.

Why Edge AI is hard

Edge AI sounds simple, but it is difficult in practice.

Challenges include:

- Limited power and memory

- Heat and thermal limits

- Many device types and operating systems

- Safe updates and rollbacks

- On-device security and tamper resistance

This is why production Edge AI focuses as much on operations as on model accuracy.

Why businesses care about Edge AI

Edge AI is not just a technical upgrade. It changes how businesses operate.

Key benefits include:

- Faster customer experiences

- Lower cloud infrastructure costs

- Easier compliance with data privacy laws, since raw data can stay on the device

- Increased resilience during outages

Industries using Edge AI heavily include:

- Manufacturing

- Retail

- Healthcare

- Transportation

- Smart infrastructure

This is why Edge AI strategy increasingly appears alongside Marketing and Business Certification discussions, especially when AI directly impacts customer experience and operations.

Conclusion

Edge AI works by pushing intelligence closer to where data is created.

Models are trained centrally, optimized carefully, deployed locally, monitored continuously, and updated over time.

The result is AI that reacts instantly, respects privacy, and keeps working even when networks fail.

That is not futuristic.

That is how modern AI systems already work today.