Ethical AI and Responsible Leadership

What Is Ethical AI and Why Does Responsible Leadership Matter Now?

Artificial intelligence is no longer a futuristic idea—it’s here, shaping decisions, automating workflows, and influencing nearly every aspect of business. But as AI’s power grows, so does its potential for harm. Algorithms can make biased hiring decisions, misuse personal data, or spread misinformation at a scale no human could match. That’s where ethical AI and responsible leadership come in.

Ethical AI means designing, deploying, and managing AI systems in ways that are transparent, fair, safe, and aligned with human values. It’s about ensuring technology serves people rather than exploiting them. Responsible leadership, in this context, means guiding AI adoption with integrity—balancing innovation with accountability.

The challenge is that AI doesn’t have morality built in. It reflects the values and intentions of its creators and users. Without proper oversight, even well-meaning systems can reinforce inequality or create new risks. That’s why leaders today must not only understand AI’s technical side but also its societal impact.

Many organizations are now recognizing this shift. They’re appointing Chief AI Ethics Officers, creating governance boards, and investing in team-wide AI training. AI certification programs help professionals develop a foundational understanding of responsible AI development, covering topics like fairness, bias detection, privacy, and explainability.

In 2025, ethical leadership isn’t just a moral choice—it’s a business imperative. Companies that fail to handle AI responsibly face regulatory penalties, reputational damage, and loss of customer trust. On the other hand, those that embed ethics into AI operations are building long-term resilience and brand loyalty.

Responsible leadership means being proactive—setting clear rules before problems arise, not reacting after harm is done. As one global executive recently said, “We can’t claim to lead in innovation if we lag in ethics.”

What Are the 2025 Rules Leaders Actually Need to Follow?

Regulation around AI is evolving rapidly, and 2025 marks a turning point. Governments are no longer just advising on best practices; they’re enforcing compliance. The European Union’s AI Act is widely described as the first comprehensive AI regulation in the world, setting obligations for companies that build, sell, or use AI systems. It entered into force in August 2024, and its obligations phase in over the following years.

Under this law, AI systems are categorized by risk into four tiers: unacceptable (prohibited outright), high, limited, and minimal. High-risk systems, like those used in healthcare, finance, or recruitment, require transparency, human oversight, and extensive testing before deployment. Companies must maintain detailed technical documentation, implement risk management processes, and demonstrate conformity before deployment, in some cases through an independent assessment.

For leaders, this means governance is no longer optional—it’s mandatory. Every AI project must have clear accountability, measurable safeguards, and documented decision flows.

Meanwhile, in the United States, frameworks such as the NIST AI Risk Management Framework (AI RMF) provide voluntary but highly respected guidelines. This framework helps organizations identify, measure, and manage AI risks through four core functions: Govern, Map, Measure, and Manage. Leaders can use these functions to ensure that AI systems align with business goals without compromising ethics or safety.

Across the globe, other countries are following suit. Canada and Singapore have established AI ethics frameworks, while Japan and South Korea focus on transparency and explainability standards. Together, these regulations and frameworks push organizations to create trustworthy AI ecosystems.

In practice, responsible leaders should:

- Maintain an internal register of all AI systems and their risk levels.

- Conduct bias, privacy, and security assessments for each system.

- Assign clear accountability for AI decisions at every stage.

- Train all employees—not just developers—on ethical AI practices.
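
The first two practices in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed format: the `AISystem` fields, the `RiskLevel` names, the example systems, and the set of required assessments are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskLevel(Enum):
    # Illustrative tiers; an organization would define its own scale.
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3


@dataclass
class AISystem:
    name: str
    owner: str          # the person accountable for this system
    risk: RiskLevel
    completed_assessments: set = field(default_factory=set)


# Assumed policy: every registered system needs these three assessments.
REQUIRED_ASSESSMENTS = {"bias", "privacy", "security"}


def missing_assessments(system: AISystem) -> set:
    """Return the assessments this system has not completed yet."""
    return REQUIRED_ASSESSMENTS - system.completed_assessments


# Hypothetical register entries.
register = [
    AISystem("resume-screener", "hr-lead", RiskLevel.HIGH, {"bias", "privacy"}),
    AISystem("faq-chatbot", "support-lead", RiskLevel.LIMITED,
             {"bias", "privacy", "security"}),
]

# Collect every system that still has open assessment gaps.
gaps = {s.name: sorted(missing_assessments(s))
        for s in register if missing_assessments(s)}
print(gaps)
```

Keeping the owner on each entry ties the register to the accountability practice in the same list: every gap the script reports already has a named person attached.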

Regulation is only one side of the story. The other is corporate self-regulation. Many organizations voluntarily align with standards such as ISO/IEC 42001, a management system standard for AI governance. This ensures consistent oversight across departments and countries.

Ethical AI isn’t about compliance alone—it’s about leadership integrity. Regulations define the floor; responsible leaders aim for the ceiling.

How Do ISO/IEC 42001 and NIST AI RMF Work Together in Practice?

ISO/IEC 42001 and NIST AI RMF are two of the most widely referenced frameworks for AI governance today. When used together, they give leaders both structure and flexibility.

ISO/IEC 42001 provides a formal management system—think of it as a blueprint for responsible AI operations. It defines how an organization should plan, implement, review, and continually improve AI governance. It includes principles for risk control, data transparency, bias management, and human oversight. It’s auditable, meaning companies can prove compliance through certification.

NIST AI RMF, on the other hand, is more about the process of managing AI risks. It offers detailed guidance on identifying what can go wrong, measuring it, and mitigating it effectively. The two frameworks complement each other well: ISO/IEC 42001 sets up the governance system, and NIST AI RMF guides the day-to-day risk work within it.

A responsible leader would use ISO/IEC 42001 to establish company-wide AI policies and NIST AI RMF to implement those policies at the project level. For instance:

- Govern (NIST) maps to Leadership and Policy (ISO).

- Map and Measure (NIST) link to Risk Assessment and Documentation (ISO).

- Manage (NIST) connects to Implementation and Monitoring (ISO).

By aligning these systems, organizations create a continuous feedback loop of ethical improvement. Instead of treating AI governance as a one-time compliance exercise, they make it an ongoing part of corporate culture.

Leaders who integrate both frameworks gain three advantages:

- Clarity: Everyone understands the company’s ethical boundaries.

- Consistency: All departments follow the same rules, regardless of geography.

- Credibility: Certification demonstrates commitment to customers and regulators alike.

AI governance isn’t paperwork—it’s trust architecture. The more structured your ethics, the stronger your innovation.

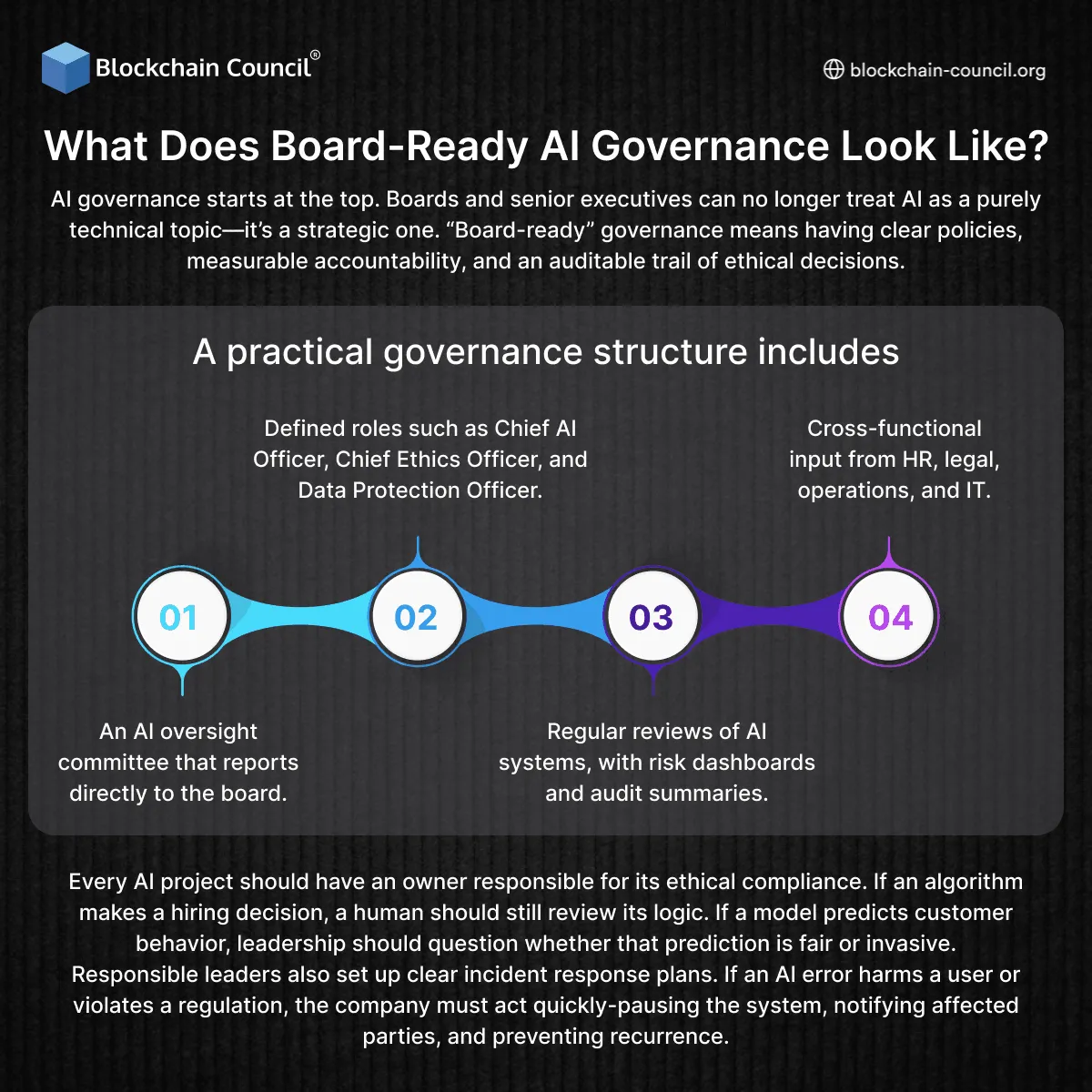

What Does Board-Ready AI Governance Look Like?

AI governance starts at the top. Boards and senior executives can no longer treat AI as a purely technical topic—it’s a strategic one. “Board-ready” governance means having clear policies, measurable accountability, and an auditable trail of ethical decisions.

A practical governance structure includes:

- An AI oversight committee that reports directly to the board.

- Defined roles such as Chief AI Officer, Chief Ethics Officer, and Data Protection Officer.

- Regular reviews of AI systems, with risk dashboards and audit summaries.

- Cross-functional input from HR, legal, operations, and IT.

Every AI project should have an owner responsible for its ethical compliance. If an algorithm makes a hiring decision, a human should still review its logic. If a model predicts customer behavior, leadership should question whether that prediction is fair or invasive.

Responsible leaders also set up clear incident response plans. If an AI error harms a user or violates a regulation, the company must act quickly—pausing the system, notifying affected parties, and preventing recurrence.

Ethics doesn’t scale without structure. Boards that embed ethical AI governance early reduce future risk and build stronger stakeholder confidence.

How Should Leaders Address Bias, Safety, and Human Oversight?

Bias and safety are the twin pillars of ethical AI. A biased model can cause social harm, while an unsafe one can cause physical or financial damage. Leaders must ensure their teams understand how to detect and prevent both.

Bias can creep in through data, algorithms, or even assumptions made during model design. Responsible leaders enforce rigorous testing before deployment, using diverse data and fairness metrics. They also encourage teams to document decisions about data inclusion and exclusion. Transparency is the best safeguard against hidden bias.
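
As one illustration of what a fairness metric looks like in practice, the sketch below compares selection rates across groups. The group labels and sample data are invented for the example, and the two measures shown (the demographic parity gap and the disparate impact ratio) are only two of many possible metrics. A ratio below 0.8 is often treated as a warning sign under the "four-fifths" rule of thumb from US employment guidance, but the right threshold depends on context.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of positive outcomes per group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(selected)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical screening outcomes: group A is selected 4 of 8 times,
# group B is selected 2 of 8 times.
records = ([("A", True)] * 4 + [("A", False)] * 4
           + [("B", True)] * 2 + [("B", False)] * 6)

rates = selection_rates(records)
print(rates)
print(demographic_parity_gap(rates))
print(disparate_impact_ratio(rates))
```

A low ratio does not prove that a model is biased, and a high one does not prove it is fair. The number is a prompt for the documented review of data inclusion and exclusion decisions described above.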

Safety is equally important. AI systems that make decisions in finance, healthcare, or autonomous vehicles must have multiple layers of human oversight. A responsible leader never allows a system to operate without the ability for human intervention or shutdown.
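
One way to picture human intervention and shutdown is a gate that sits between the model and any real-world action. The sketch below is a simplified assumption, not a production design: the `OversightGate` class, the 0.9 confidence threshold, and the example decisions are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    confidence: float   # model's own confidence score, 0.0 to 1.0
    high_risk: bool     # flagged by policy, e.g. lending or medical actions


class OversightGate:
    """Routes model decisions to automation or to a human reviewer."""

    def __init__(self, min_confidence: float = 0.9):
        self.min_confidence = min_confidence
        self.halted = False       # manual shutdown switch
        self.review_queue = []    # decisions waiting for a human

    def halt(self) -> None:
        """Stop all automated decisions until a human re-enables the system."""
        self.halted = True

    def route(self, decision: Decision) -> str:
        if self.halted:
            return "blocked"
        # High-risk or low-confidence decisions always go to a person.
        if decision.high_risk or decision.confidence < self.min_confidence:
            self.review_queue.append(decision)
            return "human_review"
        return "auto_approved"


gate = OversightGate()
first = gate.route(Decision("approve_loan", 0.97, high_risk=True))
second = gate.route(Decision("tag_support_email", 0.95, high_risk=False))
gate.halt()
third = gate.route(Decision("tag_support_email", 0.99, high_risk=False))
print(first, second, third)
```

The design choice worth noting is that the shutdown check comes first: once a human halts the system, no confidence score can override that decision.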

Leaders should also promote red-teaming—intentionally testing AI systems for failure scenarios. This approach, now used by major technology companies, simulates attacks or misuse cases to ensure resilience.

To foster long-term responsibility, companies should invest in ongoing education. Programs like tech certifications or advanced data courses train teams to identify ethical risks before they escalate.

When ethical training becomes part of the company DNA, bias prevention turns from a defensive act into a natural habit.

How Do Leaders Build AI Ethics into Company Culture?

Culture is the true test of leadership. A company can have the best ethics policy on paper, but if employees don’t live it daily, it fails in practice. Ethical AI culture begins with awareness, transparency, and empowerment.

Leaders must make ethics part of everyday conversation. That means discussing data privacy and fairness during product meetings, celebrating responsible innovations, and recognizing employees who raise ethical concerns. When people see that speaking up is rewarded, not punished, they engage more deeply.

A strong ethical culture also depends on education. Every employee should understand at least the basics of AI ethics—how AI works, what bias means, and how to use systems responsibly. This aligns with the growing global expectation for AI literacy in the workplace.

Leaders can reinforce culture through rituals: regular ethics briefings, scenario-based workshops, or “AI office hours” where employees can ask questions. Formal programs like the Data Science Certification or Agentic AI Certification can also help teams master responsible innovation.

Ethical culture thrives when employees feel ownership. It’s not about rules imposed from the top—it’s about shared accountability across the organization.

How Do Foundation Models and Generative AI Change Leadership Responsibility?

Generative AI and large foundation models introduce new ethical challenges. Unlike traditional AI systems built for narrow tasks, these models can produce unpredictable outputs—from deepfakes to false information.

Responsible leadership requires more than monitoring—it requires anticipation. Leaders must foresee potential misuse before releasing products to the public. They should evaluate every generative model for accuracy, safety, and intellectual property compliance.

Transparency is critical. Users should know when they’re interacting with AI-generated content and what data was used to train it. Labeling, disclaimers, and invisible digital watermarks help maintain authenticity.

Another leadership priority is accountability. When an AI-generated output causes harm, who is responsible—the user, the developer, or the company? Responsible leaders establish clear internal guidelines for liability and public communication.

Finally, generative AI ethics demands human moderation. No matter how advanced the model, there must always be human review in high-risk scenarios. That human-in-the-loop approach protects both companies and customers.

Courses in technology now emphasize these new responsibilities, preparing professionals to design, monitor, and regulate generative systems with transparency and control.

How Can Blockchain and Data Integrity Support Ethical AI?

Ethical AI relies heavily on trustworthy data. Without transparency in how data is collected, stored, and used, no AI system can truly be responsible. This is where blockchain technology plays a vital role.

Blockchain offers tamper-evident records of data transactions, so that the data sources used in AI can be traced and verified after the fact. For example, in supply chain AI systems, blockchain can record data points from origin to delivery, so that predictions or optimizations remain auditable. It does not guarantee that the recorded data was accurate in the first place, only that it has not been altered since.

In governance contexts, blockchain supports accountability. If an AI model produces a harmful decision, and dataset versions and code changes were logged, leaders can trace which of them contributed to it. This forensic capability is invaluable for compliance and trust-building.
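
The core idea behind this traceability is a hash chain: each record stores the hash of the one before it, so changing an old record breaks every later link. The sketch below shows that mechanism only. It is a single-process illustration rather than a distributed ledger, and the event fields and dataset names are made up for the example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a record body deterministically with SHA-256."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(chain: list, event: dict) -> None:
    """Add an event, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    body = {"event": event, "prev_hash": prev_hash}
    chain.append({**body, "hash": _digest(body)})


def verify(chain: list) -> bool:
    """Recompute every hash and check that each link points to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        body = {"event": entry["event"], "prev_hash": entry["prev_hash"]}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical audit trail for one model.
log = []
append_entry(log, {"type": "dataset_registered", "dataset": "claims-2024-v1"})
append_entry(log, {"type": "model_trained", "model": "risk-scorer-1.3",
                   "dataset": "claims-2024-v1"})

intact_before = verify(log)

# Simulate someone quietly rewriting history.
log[0]["event"]["dataset"] = "claims-2024-v2"
intact_after = verify(log)

print(intact_before, intact_after)
```

A real blockchain adds replication and consensus on top of this structure, which is what stops a single party from simply recomputing all the hashes after an edit.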

Professionals who complete blockchain technology courses gain the skills to design transparent systems that blend blockchain and AI, reinforcing data ethics across industries.

Combining blockchain’s traceability with AI’s intelligence forms the backbone of verifiable ethics—a system where accountability is built, not promised.

How Do Leaders Balance Innovation and Regulation?

Ethical leadership doesn’t mean slowing innovation—it means making it sustainable. The challenge for modern leaders is to move fast responsibly.

Balancing innovation with regulation starts by treating compliance as a creative constraint, not a limitation. Regulations like the EU AI Act or the NIST framework exist to prevent harm, not to stifle progress. They give companies a safe space to innovate confidently.

Responsible leaders involve compliance teams early in product design. This prevents costly redesigns later and fosters trust between engineers and legal experts. The result is ethical by design—AI products that meet both market and moral standards from day one.

Leaders must also advocate for smarter regulations. By participating in industry discussions and sharing insights with policymakers, they can shape balanced rules that encourage innovation while protecting users.

Finally, communication is key. Ethical AI requires leaders who can translate complex regulations into clear, actionable guidance for teams. The ability to interpret and simplify legal frameworks is fast becoming a core leadership skill.

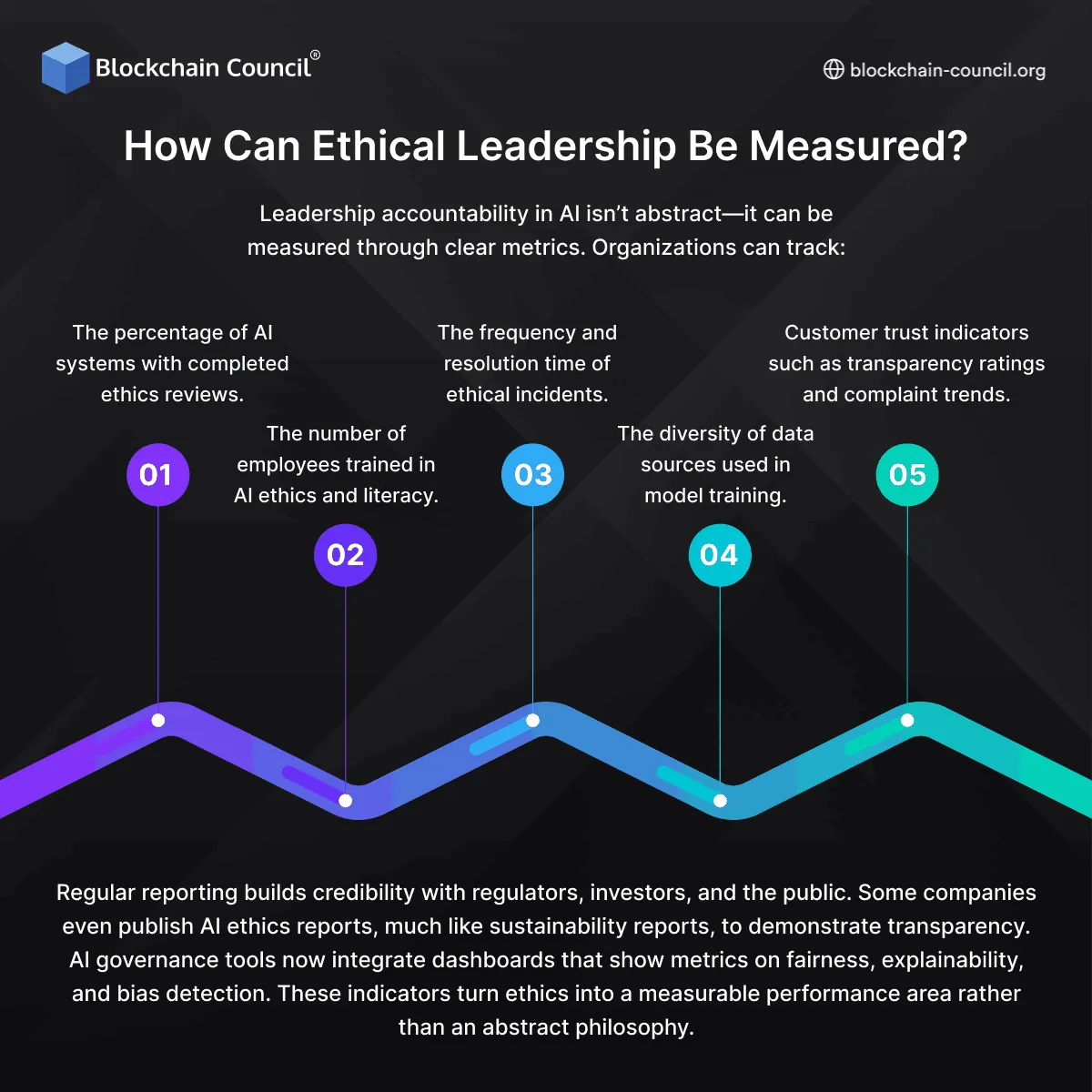

How Can Ethical Leadership Be Measured?

Leadership accountability in AI isn’t abstract—it can be measured through clear metrics. Organizations can track:

- The percentage of AI systems with completed ethics reviews.

- The number of employees trained in AI ethics and literacy.

- The frequency and resolution time of ethical incidents.

- The diversity of data sources used in model training.

- Customer trust indicators such as transparency ratings and complaint trends.
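
Two of the metrics above, review coverage and incident resolution time, are easy to compute once the underlying records exist. The sketch below assumes a simple in-memory format. The field names, system names, and dates are hypothetical, and a real organization would pull them from its own register and incident tracker.

```python
from datetime import datetime
from statistics import mean

# Hypothetical inventory of AI systems.
systems = [
    {"name": "resume-screener", "ethics_review_done": True},
    {"name": "churn-model", "ethics_review_done": False},
    {"name": "faq-chatbot", "ethics_review_done": True},
    {"name": "fraud-detector", "ethics_review_done": True},
]

# Hypothetical ethical incidents with open and close dates.
incidents = [
    {"opened": datetime(2025, 3, 1), "resolved": datetime(2025, 3, 4)},
    {"opened": datetime(2025, 4, 10), "resolved": datetime(2025, 4, 15)},
]


def review_coverage(systems):
    """Percentage of AI systems with a completed ethics review."""
    if not systems:
        return 0.0
    done = sum(1 for s in systems if s["ethics_review_done"])
    return 100.0 * done / len(systems)


def mean_resolution_days(incidents):
    """Average whole days from opening to resolution, ignoring open incidents."""
    closed = [i for i in incidents if i.get("resolved")]
    if not closed:
        return None
    return mean((i["resolved"] - i["opened"]).days for i in closed)


print(review_coverage(systems))
print(mean_resolution_days(incidents))
```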

Regular reporting builds credibility with regulators, investors, and the public. Some companies even publish AI ethics reports, much like sustainability reports, to demonstrate transparency.

AI governance tools now integrate dashboards that show metrics on fairness, explainability, and bias detection. These indicators turn ethics into a measurable performance area rather than an abstract philosophy.

Leadership, after all, isn’t what you say—it’s what you measure.

How Can Responsible Leadership Shape the Future of Work?

As AI continues to reshape industries, leadership itself is evolving. The most successful leaders of the next decade will be those who combine technical fluency with moral clarity. They’ll be able to use AI for growth while protecting human dignity and autonomy.

Responsible leadership means seeing beyond profit. It’s about designing AI that empowers people, enhances creativity, and expands opportunity. It’s also about standing firm against unethical uses of technology—even when they promise short-term gain.

This new kind of leadership requires continuous learning. Executives are now enrolling in programs like the Marketing and Business Certification to understand how to integrate ethics into strategic decisions. Others build cross-functional ethics boards that include engineers, psychologists, and sociologists to capture diverse perspectives.

In the future, ethical leadership won’t be a niche—it will be the norm. Stakeholders, from investors to employees, will expect nothing less.

What Does the Future of Ethical AI Look Like?

The future of ethical AI will blend technology, governance, and empathy. Automation will continue to expand, but so will accountability. AI systems will become more explainable, auditable, and aligned with human goals.

Companies that prioritize ethics will gain competitive advantages. Customers prefer transparent brands; regulators favor compliant companies; and employees are more motivated to work for organizations that act responsibly.

We’ll also see a rise in co-regulation—a partnership between governments, businesses, and civil society to ensure AI benefits everyone. AI ethics will move from reactive checklists to proactive design principles embedded in every innovation cycle.

Ultimately, the future belongs to leaders who understand that trust is the most valuable currency in a digital world. Ethical AI builds that trust, one decision at a time.
